Tag: LLM inference

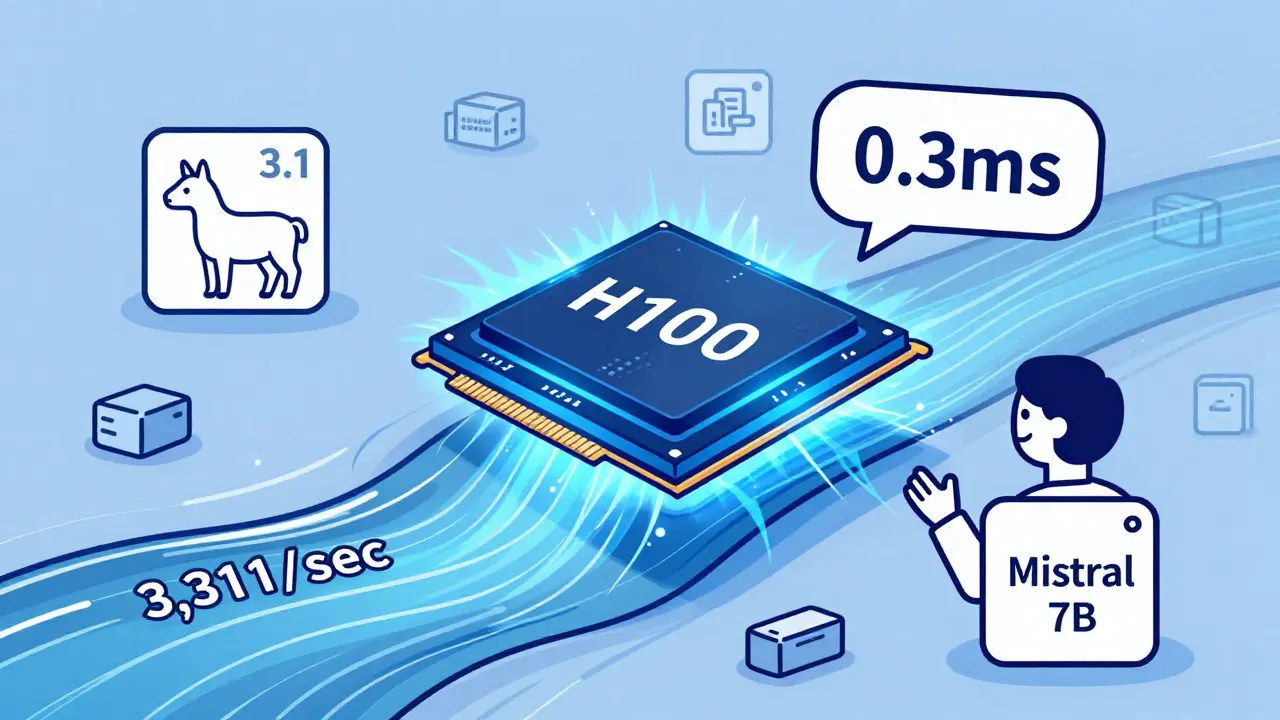

Learn how to choose between NVIDIA A100, H100, and CPU offloading for LLM inference in 2025. See real performance numbers, cost trade-offs, and which option actually works for production.

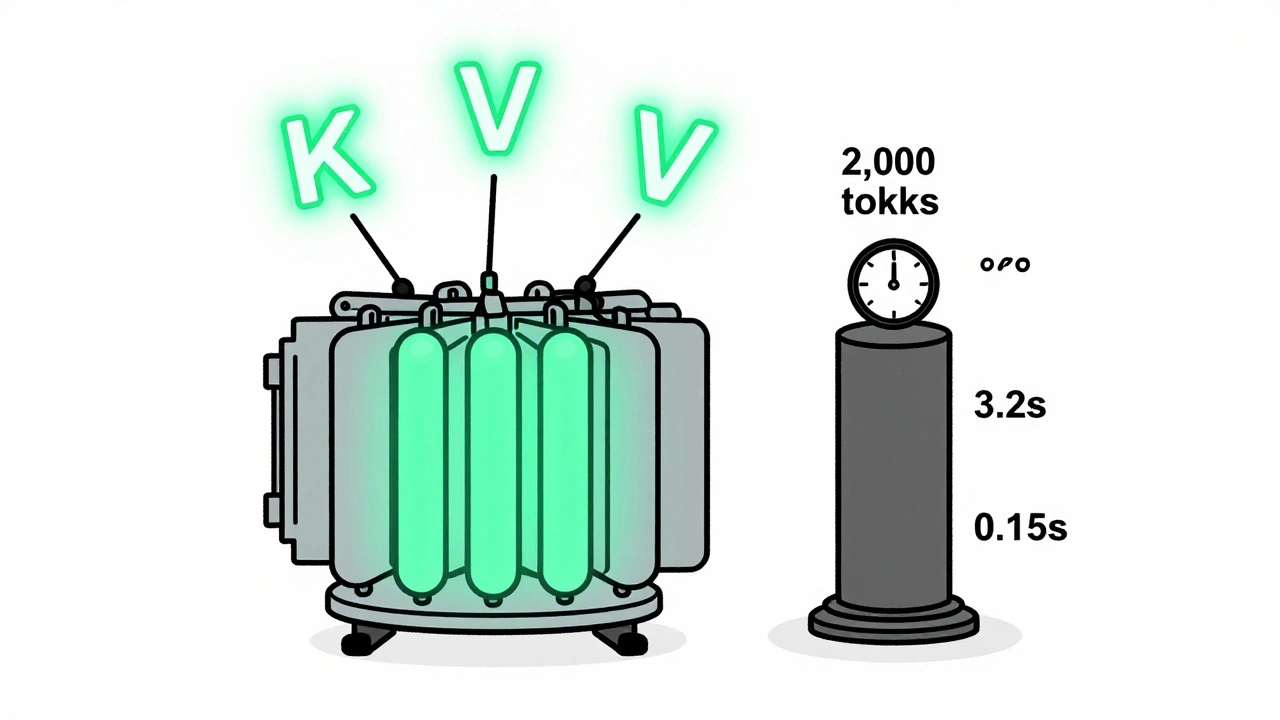

KV caching and continuous batching are essential for fast, affordable LLM serving. Learn how they reduce memory use, boost throughput, and enable real-world deployment on consumer hardware.

Recent posts

Community and Ethics for Generative AI: How Transparency and Stakeholder Engagement Shape Responsible Use

Mar 22, 2026

Artificial Intelligence
