Tag: long-context AI

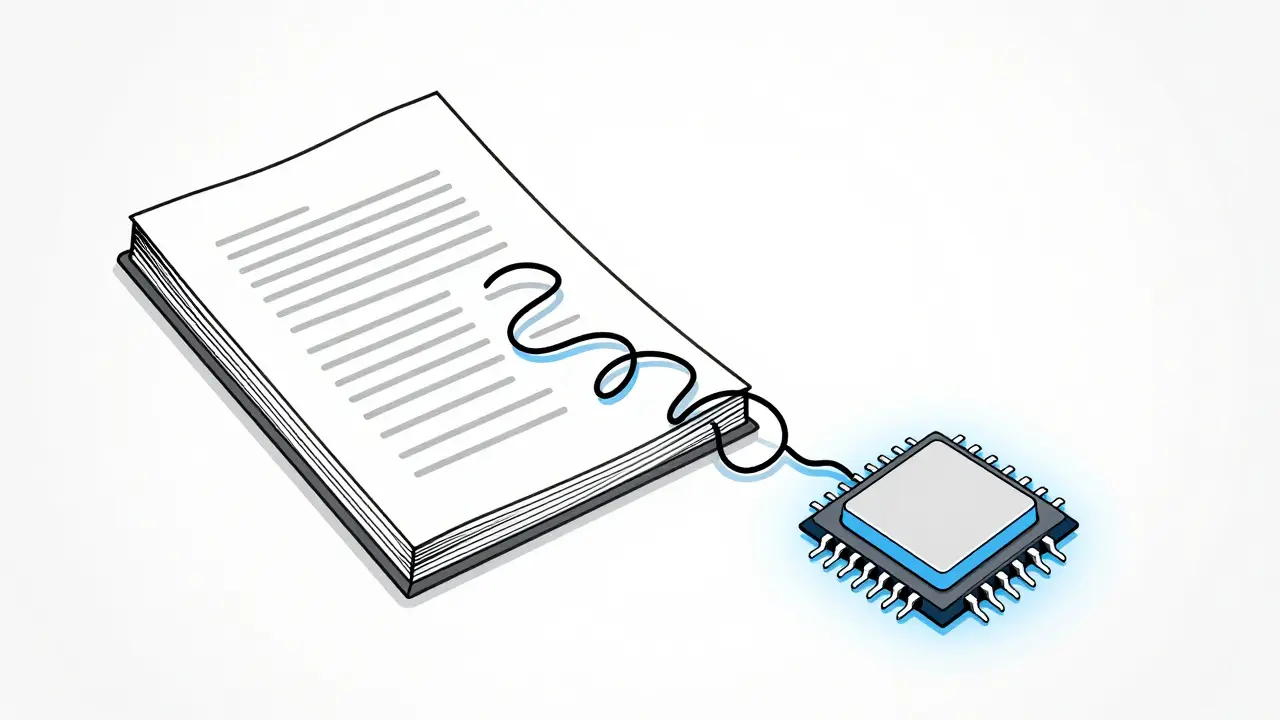

Discover how rotary embeddings, ALiBi, and memory mechanisms enable AI models to handle up to 1 million tokens. Learn key differences, real-world impacts, and future trends in long-context AI.

Recent posts

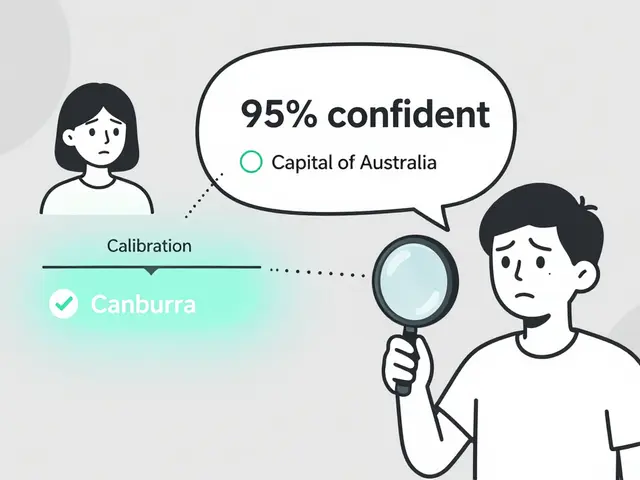

Token Probability Calibration in Large Language Models: How to Fix Overconfidence in AI Responses

Jan 16, 2026

Artificial Intelligence
