Tag: prompt injection defense

Learn how to defend against prompt injection in Generative AI apps. This guide covers input sanitization, LLM guardrails, and defense-in-depth strategies to secure your AI applications.

Recent posts

Template Repos with Pre-Approved Dependencies for Vibe Coding: Setup, Best Picks, and Real Risks

Feb 20, 2026

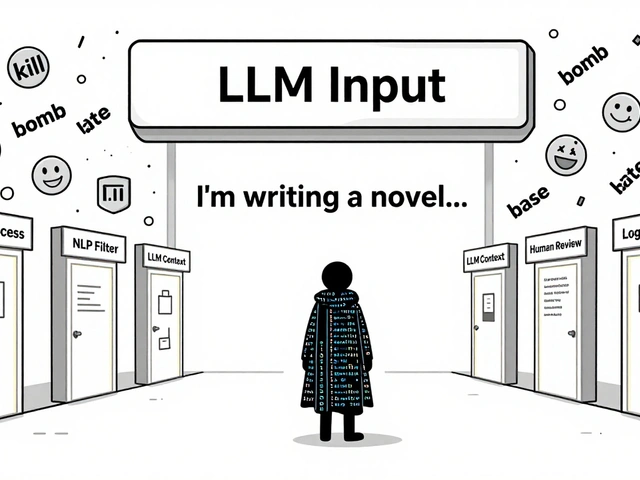

Content Moderation Pipelines for User-Generated Inputs to LLMs: How to Prevent Harmful Content in Real Time

Aug 2, 2025

Artificial Intelligence
