Tag: Redis for AI

Caching is essential for reducing latency and cutting costs in AI web apps. Learn how to get started with prompt caching, semantic search, and Redis to make your AI responses faster and cheaper.

Recent posts

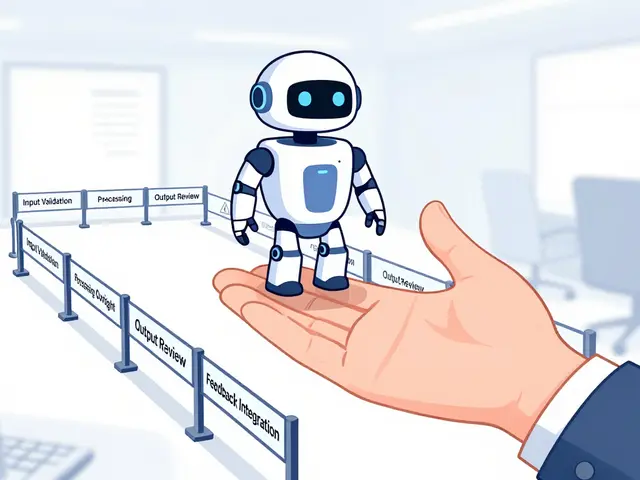

Human Oversight in Generative AI: Review Workflows and Escalation Policies That Actually Work

Mar 24, 2026

Artificial Intelligence