The real problem isn't that AI can't write code; it's that AI doesn't inherently know where one part of your business ends and another begins. According to an Instinctools survey from late 2025, about 67% of early vibe coding projects fail because they didn't establish clear domain boundaries. They start fast, but within six months, the system becomes a tangled mess. This is why integrating strategic DDD principles is no longer optional for anyone using AI to build enterprise systems.

The Secret Sauce: Bounded Contexts in the AI Era

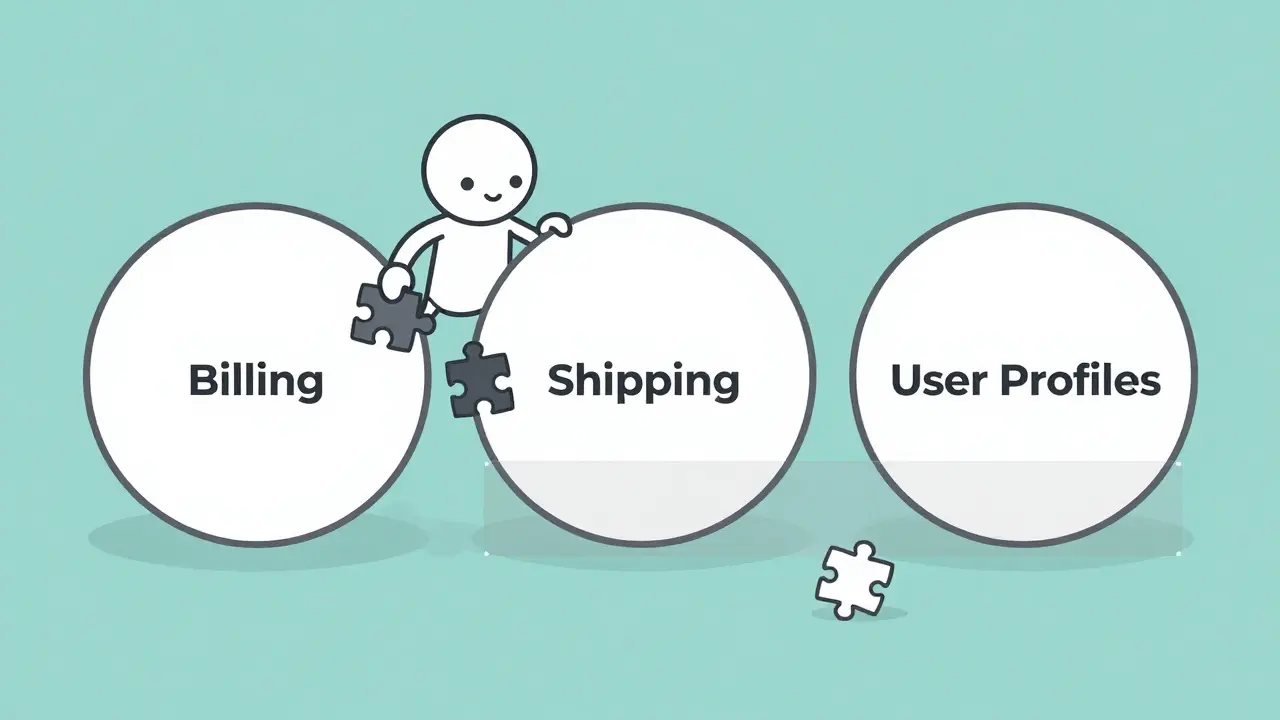

In traditional software, a Bounded Context is a boundary within which a particular domain model is defined and applicable. In vibe coding, this boundary acts as a guardrail for the AI. Without it, the AI will happily mix concepts from your "Payments" module into your "Shipping" module because, to a large language model, they both just look like "business logic."

To stop this bleed, you need to move from generic prompting to "context-rich" prompting. Instead of saying "Build me a checkout page," you define the bounded context first. You tell the AI: "We are currently working within the Checkout Context. In this space, a 'User' only refers to a registered buyer with a valid payment method. Do not use logic from the User Profile or Marketing contexts."

Successful teams are now using a specific four-part framework for these prompts:

- Workflow Explanation: Describe exactly how the feature should behave from start to finish.

- Tooling Constraints: Clarify which libraries or APIs are allowed to prevent the AI from hallucinating a random package.

- Development Approach: Explicitly state if the method is feature-driven or domain-driven.

- Hard Boundaries: Set strict limits, such as restricting the AI's access to security keys or production infrastructure.
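As a rough illustration, the four parts above can be bundled with the bounded-context preamble into one reusable prompt template. This is only a sketch: the `ContextPrompt` class, its field names, and the sample values are hypothetical and not taken from any particular tool.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class ContextPrompt:
    """Hypothetical container for one bounded context's prompt preamble."""

    context_name: str
    glossary: dict[str, str]          # term -> meaning inside this context
    workflow: str                     # Workflow Explanation
    allowed_tools: list[str]          # Tooling Constraints
    approach: str                     # Development Approach
    hard_boundaries: list[str]        # Hard Boundaries
    forbidden_contexts: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Build the text that is prepended to every request in this context."""
        lines = [f"We are currently working within the {self.context_name} Context."]
        for term, meaning in self.glossary.items():
            lines.append(f"In this space, '{term}' means: {meaning}")
        if self.forbidden_contexts:
            names = ", ".join(self.forbidden_contexts)
            lines.append(f"Do not use logic from these contexts: {names}.")
        lines.append(f"Workflow: {self.workflow}")
        lines.append("Allowed libraries and APIs: " + ", ".join(self.allowed_tools))
        lines.append(f"Development approach: {self.approach}")
        lines.append("Hard boundaries:")
        lines.extend(f"- {rule}" for rule in self.hard_boundaries)
        return "\n".join(lines)


# Sample values are illustrative assumptions, loosely based on the Checkout example.
checkout = ContextPrompt(
    context_name="Checkout",
    glossary={"User": "a registered buyer with a valid payment method"},
    workflow="Buyer reviews the cart, confirms payment, and receives a receipt.",
    allowed_tools=["Python standard library", "the in-house payments client"],
    approach="domain-driven",
    hard_boundaries=["Never read or print secret keys", "No access to production infrastructure"],
    forbidden_contexts=["User Profile", "Marketing"],
)

print(checkout.render())
```

Keeping the preamble in one place means every prompt for a given context starts from the same definitions instead of whatever the developer remembers to type.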

The data shows this works. Google Cloud found that teams defining 3-5 bounded contexts before starting vibe coding saw 63% fewer model inconsistencies. It takes about 25% more planning time upfront, but it saves you from the "two-day untangle" that happens when your AI accidentally merges your loans and payments logic.

Building a Ubiquitous Language for AI Agents

One of the most powerful parts of DDD is Ubiquitous Language. This isn't just a glossary; it's a shared language used by developers, stakeholders, and, in the new paradigm, the AI. When the AI knows exactly what a "Claim" means in an insurance context versus a "Claim" in a legal context, the code it generates becomes markedly more accurate.

In a vibe coding workflow, your ubiquitous language serves as the "anchor for shared context." You shouldn't just define these terms in a PDF that no one reads. Instead, keep a living document, such as a context-definition.md file, that the AI is required to read before generating code and to update whenever a definition changes. If you change the definition of a "Premium Account," the AI should update the glossary before it updates the code.
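To make the idea concrete, here is one possible shape for such a file and a small parser for it. The layout (a `##` heading per context, one `- **Term**: definition` bullet per term) is an assumption made for this sketch; the article does not prescribe a format, and `parse_glossary` is a hypothetical helper.

```python
import re

# Assumed layout for context-definition.md: one "## Context" heading per
# bounded context, followed by "- **Term**: definition" bullets.
SAMPLE = """\
## Insurance
- **Claim**: A policyholder's request for payment under a policy.

## Legal
- **Claim**: An assertion of a legal right made against another party.
"""

HEADING = re.compile(r"^##\s+(.+?)\s*$")
TERM = re.compile(r"^-\s+\*\*(.+?)\*\*:\s*(.+?)\s*$")


def parse_glossary(markdown: str) -> dict[str, dict[str, str]]:
    """Return {context: {term: definition}} from the assumed Markdown layout."""
    glossary: dict[str, dict[str, str]] = {}
    current = None
    for line in markdown.splitlines():
        heading = HEADING.match(line)
        if heading:
            current = heading.group(1)
            glossary.setdefault(current, {})
            continue
        term = TERM.match(line)
        if term and current is not None:
            glossary[current][term.group(1)] = term.group(2)
    return glossary


glossary = parse_glossary(SAMPLE)
# The same word resolves to a different definition in each context.
print(glossary["Insurance"]["Claim"])
print(glossary["Legal"]["Claim"])
```

Because the file is plain Markdown, clinical or business staff can edit it directly, while scripts and prompts read the same definitions.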

Think of it as a contract. When a healthcare startup used this approach, they reported a 45% reduction in domain misunderstandings because non-technical clinical staff could help define the terms that the AI then implemented. It bridges the gap between the "vibes" of the business stakeholders and the cold logic of the code.

The Creation-Maintenance Divide

There is a dangerous trap in AI development that UX researchers call the "creation-maintenance divide." Vibe coding is magic for creation. You can prompt a working prototype into existence in two hours. But maintaining that code is a different story. Pure vibe coding, without DDD, often leads to 37% more technical debt by the three-month mark.

| Metric | Traditional DDD | Pure Vibe Coding | DDD + Vibe Coding |
|---|---|---|---|
| Domain Modeling Time | 6-8 weeks | ~2 hours | ~2.1 days |
| Technical Debt Accumulation | Low | High (+37%) | Moderate (52% less than pure vibe coding) |
| Integration Errors | Baseline | High | 40% fewer than pure vibe coding |
| Primary Focus | Architectural rigor | Speed of execution | Balanced scalability |

The hybrid approach allows you to model 87% faster than traditional methods while keeping the technical debt under control. You aren't spending weeks in meetings; you're spending a few days defining the "vibes" of your architecture and then letting the AI handle the heavy lifting of implementation.

Practical Workflow: From Prompt to Production

So, how do you actually do this on a Tuesday morning? You don't just start typing into Cursor or Copilot. You follow a structured rhythm that balances AI speed with architectural sanity.

- Domain Discovery: Start by mapping out your boundaries. Use simple visuals and a basic list of responsibilities for each context.

- The Glossary Phase: Define your ubiquitous language. What does an "Order" mean here? What is the difference between an "Order" and a "Shipment"?

- Context-Specific Generation: Use a dedicated prompt for each bounded context. Never ask the AI to "fix the whole app" in one prompt; ask it to "refine the logic within the Billing Context."

- The Vibe Loop: Describe intent → draft code → inspect → diagnose → refine prompt. Repeat until the tests are green.

- Continuous Context Engineering: This is the most important part. Every time the AI suggests a change that crosses a boundary, you must step in as the human architect and decide if that boundary needs to move or if the AI is just being lazy.
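The Vibe Loop step can be pictured as a small control loop. The sketch below is an assumption-laden illustration, not a real integration: `vibe_loop` and the three `fake_*` callables are hypothetical stand-ins for an AI code generator, a test run, and a human refining the prompt.

```python
from typing import Callable


def vibe_loop(
    intent: str,
    generate: Callable[[str], str],
    inspect: Callable[[str], list[str]],
    refine: Callable[[str, list[str]], str],
    max_rounds: int = 5,
) -> tuple[str, int]:
    """Describe intent, draft code, inspect, diagnose, refine; stop when green."""
    prompt = intent
    for round_number in range(1, max_rounds + 1):
        code = generate(prompt)       # draft code
        failures = inspect(code)      # inspect and diagnose (e.g. run the tests)
        if not failures:
            return code, round_number
        prompt = refine(prompt, failures)  # refine the prompt and try again
    raise RuntimeError(f"Still failing after {max_rounds} rounds")


# Stand-ins so the sketch runs without any AI service.
def fake_generate(prompt: str) -> str:
    return "total = price * qty" if "quantity" in prompt else "total = price"


def fake_inspect(code: str) -> list[str]:
    return [] if "qty" in code else ["total ignores quantity"]


def fake_refine(prompt: str, failures: list[str]) -> str:
    return prompt + " Fix these problems: " + "; ".join(failures)


code, rounds = vibe_loop(
    "Compute the order total in the Billing Context.",
    fake_generate,
    fake_inspect,
    fake_refine,
)
print(rounds, code)
```

The `max_rounds` cap is a deliberate design choice in this sketch: if the loop does not converge, a human should step in rather than letting the prompt grow indefinitely.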

To avoid the common pitfall of "context drift," some of the most successful teams have started implementing automated validation scripts. These scripts check new code against the context-definition.md file to ensure the AI hasn't accidentally started importing entities from the wrong domain.
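A validation script of this kind could look roughly like the following. It is a minimal sketch under stated assumptions: each bounded context is assumed to live in its own top-level Python package, and the `ALLOWED_IMPORTS` table is hardcoded here for brevity, whereas a real script would derive it from the context definition file. The package names and `cross_context_violations` are hypothetical.

```python
import ast

# Hypothetical rules: each context's package and the other context packages
# it is allowed to import from.
ALLOWED_IMPORTS = {
    "checkout": {"payments"},
    "payments": set(),
    "shipping": set(),
}


def cross_context_violations(context: str, source: str) -> list[str]:
    """List imports in `source` that reach into a context `context` may not use."""
    known_contexts = set(ALLOWED_IMPORTS)
    allowed = ALLOWED_IMPORTS[context] | {context}
    violations = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            modules = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            modules = [node.module]
        else:
            continue
        for module in modules:
            top_level = module.split(".")[0]
            # Only packages that belong to a known context are policed;
            # standard library and third-party imports are ignored.
            if top_level in known_contexts and top_level not in allowed:
                violations.append(f"line {node.lineno}: {context} imports {module}")
    return violations


generated = """\
from payments.models import Invoice
from shipping.models import Parcel
import os
"""

print(cross_context_violations("checkout", generated))
```

Run in continuous integration, a check like this turns a boundary crossing into a failed build that a human has to approve or reject, which matches the Continuous Context Engineering step above.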

Avoiding the "Secret in Source" Trap

When you're vibing with an AI, it's tempting to just dump everything into the prompt to give it more context. However, this leads to a massive security risk: "secrets in source." AI generators often suggest hardcoding API keys or passwords just to get the code to run quickly. Google Cloud warns that this is a recipe for disaster.

The rule is simple: secrets must be loaded at runtime. When you are prompting your AI, explicitly tell it: "Do not include any hardcoded keys; use environment variables and a secret manager for all credentials." If you don't make this part of your ubiquitous language and your bounded context rules, the AI will likely leave a backdoor open in your production environment.
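In Python, loading a credential at runtime can be as simple as the sketch below. The variable name `PAYMENT_API_KEY` and the helper `load_secret` are assumptions for illustration; a secret manager would typically inject the value into the environment or be queried through its own client.

```python
import os


class MissingSecretError(RuntimeError):
    """Raised when a required credential is not present in the environment."""


def load_secret(name: str) -> str:
    """Read a credential from the environment at runtime.

    The error message mentions only the variable name, never a value,
    so nothing sensitive ends up in logs.
    """
    value = os.environ.get(name)
    if not value:
        raise MissingSecretError(f"Required secret {name} is not set")
    return value


# Typical use at application startup (hypothetical variable name):
#     api_key = load_secret("PAYMENT_API_KEY")
```

Failing loudly at startup when a secret is missing is intentional here: it removes the temptation to fall back to a hardcoded default just to make the code run.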

The Future of AI Architecture

We are moving toward a world where the AI is no longer just an autocomplete tool but a context-aware agent. GitHub and Google are already working on "DDD-aware prompting" that will automatically enforce language consistency across your project. But until those tools mature, the human developer's role has shifted. You are no longer just a coder; you are a context engineer.

Your value is no longer in knowing where the semicolon goes, but in knowing where the boundary between "Identity Management" and "User Preferences" should be. The speed of vibe coding is intoxicating, but the discipline of DDD is what keeps the project from collapsing under its own weight. If you can master both, you'll build systems that are both fast to create and easy to maintain.

What exactly is "vibe coding" in a professional context?

Vibe coding is a collaborative development style where developers use natural language prompts to describe their intent and allow AI agents to handle the implementation. Unlike traditional coding, the focus shifts from syntax and manual typing to high-level architectural guidance and iterative refinement through a "describe-generate-test" loop.

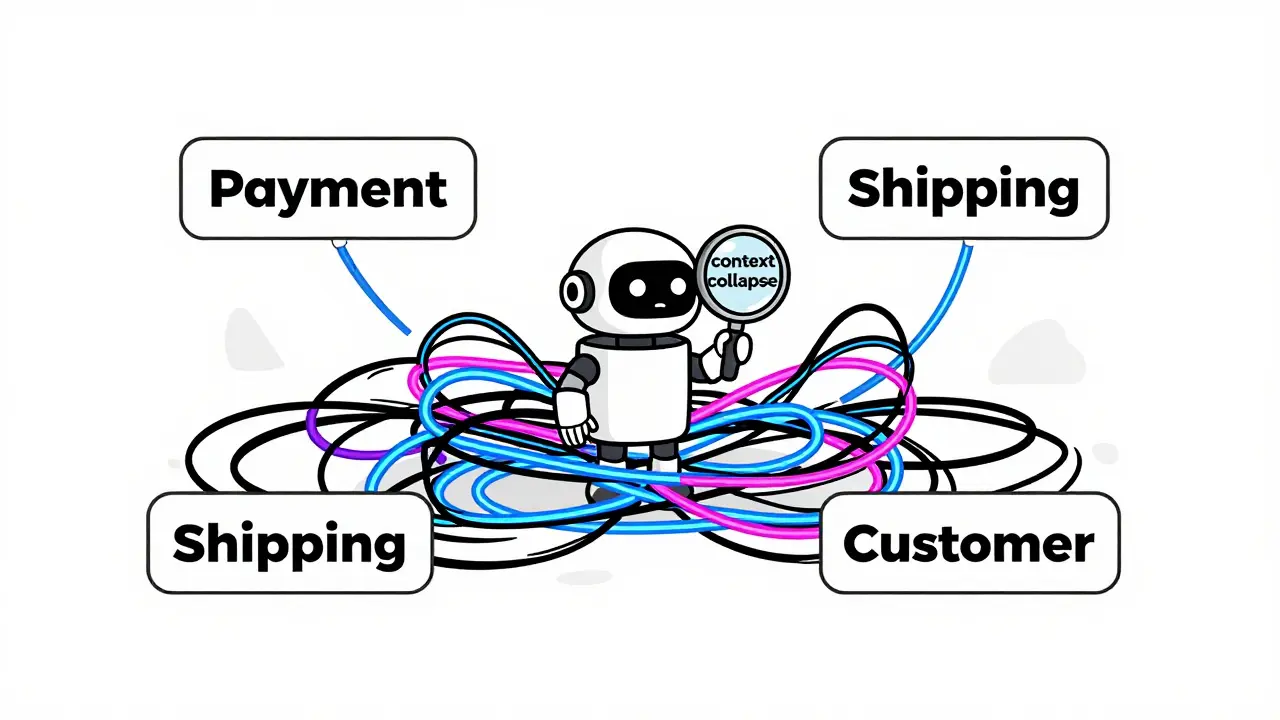

Why does vibe coding often lead to "context collapse"?

Context collapse happens when an AI model loses track of the boundaries between different business domains. Because the AI tries to find the most statistically likely next token, it may blend similar concepts from two different areas of a project (e.g., treating a "Customer" in a billing module the same as a "Customer" in a support module), leading to a monolithic, tangled codebase that is difficult to maintain.

How do I implement Ubiquitous Language with an AI?

The best way is to maintain a machine-readable glossary (like a Markdown file) within your repository. This file should define key terms, their meanings, and the context they belong to. You should instruct the AI to reference this file before generating code and to update the glossary whenever a term's definition evolves during the development process.

Is DDD with vibe coding slower than just using AI normally?

In the short term, yes. It requires about 25% more upfront planning to define bounded contexts and language. However, in the long term, it is significantly faster because it reduces integration errors by 40% and cuts technical debt accumulation by 52% compared to unstructured prompting.

Can non-technical people participate in this process?

Yes, and that is one of the biggest advantages. Since the primary interface is natural language, business stakeholders can help define the Ubiquitous Language and the rules for Bounded Contexts. This ensures the AI builds what the business actually needs, rather than what the developer thinks they need.

Artificial Intelligence

Patrick Tiernan

April 7, 2026 AT 10:01

basically just rebranded prompt engineering and calling it a framework lol

Dmitriy Fedoseff

April 8, 2026 AT 22:41

The absolute audacity of thinking a .md file is a substitute for actual architectural discipline is staggering. You cannot "vibe" your way into a scalable system. If you don't understand the underlying domain theory, you're just automating the creation of a dumpster fire at a higher velocity. Stop pretending that these AI wrappers are doing the thinking for you. It is an insult to software engineering to suggest that a few prompt guardrails can replace years of domain expertise. Get a grip on your fundamentals before you try to "engineer" a vibe.

Ashley Kuehnel

April 10, 2026 AT 07:35

I've actually tried this with my team and it helps so much!! especially for the junior devs who struggle with where to put logic. the context-definition.md is a lifesaver though you really gotta make sure the AI actually reads it every time or it just ignores the rules and goes back to its own defaults. its a bit of a struggle to get the prompts right at first but the payof is huge for the whole team!

Tyler Springall

April 10, 2026 AT 10:09

Imagine thinking a 45% reduction in misunderstandings is a victory when the entire premise is based on the whimsical "vibes" of a business stakeholder. It is truly pathetic that we have reached a point where "context engineering" is a title. This isn't architecture, it's glorified babysitting for a stochastic parrot. The sheer mediocrity of this approach is breathtaking.

Colby Havard

April 11, 2026 AT 20:40

One must contemplate the ethical implications of delegating the structural integrity of our digital infrastructure to a probabilistic model... The pursuit of speed often blinds us to the erosion of craftsmanship... We are trading the soul of engineering for the convenience of a prompt... It is a tragedy of the modern age...

Liam Hesmondhalgh

April 13, 2026 AT 12:18

The grammar in that table is an absolute joke. "Moderate (-52% vs Pure)" is not a proper sentence structure. Typical of these "industry reports" to be written by people who can't even handle basic syntax while they preach about "ubiquitous language". Absolute rubbish.

Amy P

April 13, 2026 AT 14:16

This is absolutely wild!! I can't believe we're actually talking about "vibe coding" as a professional standard now! The idea that an AI could accidentally merge loans and payments logic is just a nightmare scenario! I'm honestly shaking thinking about how many companies are just winging it with these tools right now! We need to talk way more about the human side of this transition!

Patrick Bass

April 14, 2026 AT 02:07

I agree with the point about secrets. It's a common mistake.