Imagine asking a travel agent for a "cheap vacation" and getting a brochure for a hostel in a city you hate. It’s frustrating because the agent never asked where you wanted to go or what your budget actually was. Many people face the same frustration with generative AI, a type of artificial intelligence that creates new content, such as text, images, or code, based on user input. They type a vague request, and the AI guesses the rest. Often, those guesses are wrong.

This is where Interactive Clarification Prompts come into play. Instead of immediately generating an answer, the AI pauses to ask targeted questions so that it understands the context before it responds. This simple shift, from guessing to asking, can sharply reduce errors and improve the quality of the output. It turns a monologue into a conversation.

The Iceberg Problem in User Queries

Most people think their initial prompt tells the AI everything it needs to know. In reality, it rarely does. Research from the Nielsen Norman Group, a leading user experience research firm, suggests that user information needs operate like an iceberg. The visible prompt is just the tip. Beneath the surface lies a massive amount of unstated context: the intended audience, the desired tone, specific constraints, and the ultimate goal.

When you ask an AI to "write an email to my boss," you might be thinking of a casual update about a delayed project. The AI, lacking that submerged context, might generate a formal resignation letter or a stiff quarterly report. This mismatch happens because the AI tries to fill in the blanks with statistical probabilities rather than actual intent. Interactive clarification prompts force the AI to dive beneath the surface. By asking, "Is this email urgent?" or "What is your relationship with your boss?", the system uncovers the hidden layers of your request.

This approach addresses a fundamental flaw in traditional prompt-based interactions. Users often don’t realize how vague their requests are until they see the poor results. By proactively seeking clarity, the AI keeps the interaction from getting off on the wrong foot. It ensures that the foundation of the response is built on accurate user intent, not algorithmic guesswork.

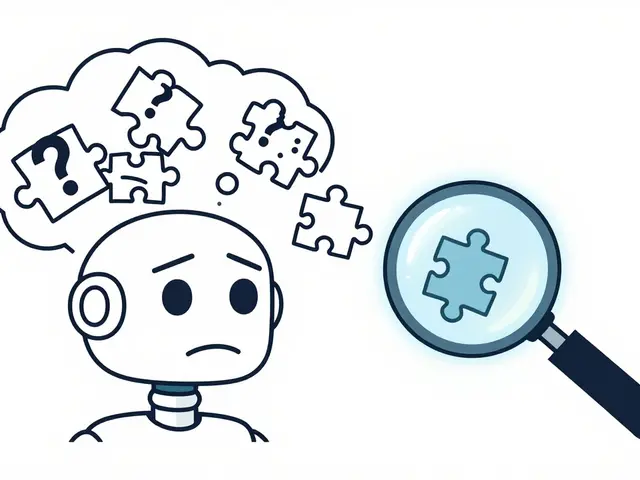

Reducing Hallucination Risk Through Precision

One of the biggest risks in using generative AI is hallucination: instances where an AI model generates false, misleading, or fabricated information and presents it as fact. Hallucinations often occur when the AI is forced to make up details to satisfy a vague or contradictory prompt. If you ask for a summary of a non-existent study without providing enough context, the AI might invent its findings to please you.

Interactive clarification acts as a safeguard against this. By asking specific questions about sources, facts, and scope, the AI can narrow the range of plausible answers before it commits to one. For example, if you ask for medical advice, a clarifying prompt might ask, "Are you looking for general health information or specific treatment options for a diagnosed condition?" This distinction changes everything. It signals to the AI whether it should provide general wellness tips or stick strictly to information supported by authoritative medical sources.
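To make the medical example concrete, here is a minimal Python sketch of how a system might turn the user's answer into a scope instruction for the model. The `MedicalScope` enum, the instruction wording, and the keyword matching in `parse_scope` are all hypothetical and deliberately naive; a real system would more likely ask the model itself to interpret the answer.

```python
from enum import Enum
from typing import Optional

class MedicalScope(Enum):
    GENERAL = "general"
    TREATMENT = "treatment"

# The clarifying question from the example above.
CLARIFYING_QUESTION = (
    "Are you looking for general health information or specific "
    "treatment options for a diagnosed condition?"
)

# Hypothetical instructions prepended to the generation request for each scope.
SCOPE_INSTRUCTIONS = {
    MedicalScope.GENERAL: (
        "Provide general wellness information only. "
        "Do not suggest diagnoses or specific treatments."
    ),
    MedicalScope.TREATMENT: (
        "Discuss treatment options only where authoritative medical sources "
        "support them, say so when no source does, and advise consulting a clinician."
    ),
}

def parse_scope(answer: str) -> Optional[MedicalScope]:
    """Naive keyword match; returns None if the answer is ambiguous."""
    text = answer.lower()
    wants_general = "general" in text
    wants_treatment = "treatment" in text or "diagnos" in text
    if wants_general == wants_treatment:  # both or neither: still unclear
        return None
    return MedicalScope.GENERAL if wants_general else MedicalScope.TREATMENT

def scope_instruction(answer: str) -> Optional[str]:
    """Return the instruction for the model, or None if we must ask again."""
    scope = parse_scope(answer)
    return SCOPE_INSTRUCTIONS[scope] if scope is not None else None

answer = "Treatment options for my diagnosed asthma"
# Fall back to re-asking the question when the answer is ambiguous.
print(scope_instruction(answer) or CLARIFYING_QUESTION)
```

Returning `None` on an ambiguous reply, rather than picking a default scope, mirrors the point of this section: when the answer is unclear, the system asks again instead of guessing.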

This precision lowers the probability of the model drifting into fabrication. When the input is highly specified, the language model’s statistical predictions are more likely to match what the user actually needs. The AI is still predicting, but within clear boundaries instead of guessing at them. This makes the output safer, more reliable, and less prone to the confident-but-wrong answers that plague unguided AI systems.

From Command to Collaboration: The Perplexity Example

You don’t have to build your own AI to see this in action. Perplexity AI, a search engine powered by large language models, has integrated interactive clarification directly into its Copilot feature. When you enter a complex query, Perplexity doesn’t just dump a list of links. It engages in a dialogue. It asks follow-up questions to refine your search parameters.

This design philosophy acknowledges a hard truth: most users arrive at AI systems with incomplete specifications. They don’t know what they don’t know. Perplexity’s approach scaffolds the user toward a better query. It transforms the interaction from a unidirectional command-response model into a collaborative session. The AI becomes an active participant, helping you shape the question so the answer is useful.

This method saves time. Without clarification, you might spend ten minutes refining a prompt after receiving a bad result. With clarification, the AI guides you through the necessary refinements upfront. You get a high-quality result faster because the groundwork was laid during the questioning phase. It’s a smarter use of both human and computational resources.

Framework Integration: CLEAR, PROBE, and PROMPT

Interactive clarification isn’t just a standalone trick; it fits neatly into established prompt engineering frameworks. Take the CLEAR Framework, which stands for Concise, Logical, Explicit, Adaptive, and Reflective. The "Adaptive" component emphasizes refining prompts based on response quality. Interactive clarification takes this further by adapting the *input* before the output is even generated.

Similarly, the PROBE Framework includes a step to "Request Reasons". This encourages users to ask the AI to explain its thinking. Interactive clarification flips this dynamic. The AI requests reasons from the user. It asks, "Why do you need this data?" or "What problem are you trying to solve?" This ensures the AI understands the purpose behind the request, not just the literal words.

The PROMPT Framework focuses on role assignment and explicit purpose definition. Without these, AI outputs tend to drift away from user intentions. Interactive prompts enforce these elements: by asking, "Who is the target audience?" the AI ensures the role and purpose are defined. This structured approach leads to more consistent, high-quality results across different types of queries.

Technical Mechanics: Specifying Input for Better Output

To understand why this works, you need to look under the hood. Generative AI operates as a predictive algorithm: it calculates the most likely next word (more precisely, the next token) in a sequence based on statistical coherence with the preceding input. The more precise the input, the more accurate the prediction. Vague inputs lead to broad, generic, and potentially incorrect predictions.
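One way to picture "broad" versus "narrow" predictions is Shannon entropy, H = −Σ p log₂ p, which measures how spread out a probability distribution is. The Python sketch below compares two next-word distributions. The probabilities are invented purely for illustration and do not come from any real model, and real models predict over tokens rather than whole words.

```python
import math

def entropy_bits(dist):
    """Shannon entropy in bits: H = -sum(p * log2(p)) over nonzero p."""
    return -sum(p * math.log2(p) for p in dist.values() if p > 0)

# Invented next-word probabilities after "write an email to my boss".
# Vague request: several openings are equally plausible.
vague = {"Dear": 0.25, "Hi": 0.25, "Hello": 0.25, "To": 0.25}
# After clarifying "casual update, friendly relationship": one opening dominates.
specific = {"Hi": 0.85, "Hello": 0.10, "Dear": 0.05}

print(f"vague:    {entropy_bits(vague):.2f} bits")     # vague:    2.00 bits
print(f"specific: {entropy_bits(specific):.2f} bits")  # specific: 0.75 bits
```

Lower entropy means the probability mass is concentrated on fewer continuations, which is the sense in which a well-specified input narrows the prediction.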

Interactive clarification maximizes input specification. It ensures that the core components of a good prompt (the request, the framing context, and the format) are all present and correct. If any of these are missing, the AI asks for them. This systematic uncovering of implicit information aligns the model’s statistical predictions with your actual intent. It’s not magic; it’s math. Better data in means better data out.
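The check described above can be sketched as a small data structure with one slot per component. This is a minimal Python illustration under stated assumptions: the `PromptSpec` class, its field names, and the question wording are hypothetical, and deciding whether a slot is "filled" is reduced to a non-empty string check. A production system would need the model to judge whether the user's text actually supplies each component.

```python
from dataclasses import dataclass, fields
from typing import List, Optional

@dataclass
class PromptSpec:
    request: Optional[str] = None          # what the user wants produced
    framing_context: Optional[str] = None  # background: audience, purpose, situation
    output_format: Optional[str] = None    # e.g. "bullet list", "short email"

# Hypothetical follow-up question for each missing component.
QUESTIONS = {
    "request": "What exactly would you like me to produce?",
    "framing_context": "What background should I know, such as audience and purpose?",
    "output_format": "What format and length should the result have?",
}

def missing_components(spec: PromptSpec) -> List[str]:
    """Names of fields that are None or contain only whitespace."""
    return [f.name for f in fields(spec)
            if not (getattr(spec, f.name) or "").strip()]

def clarifying_questions(spec: PromptSpec) -> List[str]:
    """Questions to ask before generating; an empty list means 'ready'."""
    return [QUESTIONS[name] for name in missing_components(spec)]

spec = PromptSpec(request="Write an email to my boss")
for question in clarifying_questions(spec):
    print(question)
```

For the "write an email to my boss" request, only the request slot is filled, so the sketch asks about context and format before anything is generated.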

This process also respects the limits of the model. Large language models have vast knowledge but cannot reliably infer preferences you never state. By making your constraints explicit through clarification questions, you give the model’s attention mechanism the right signals, effectively telling it which parts of the request matter most. This results in responses that feel tailored and intelligent, rather than generic and robotic.

Educating Users Through Dialogue

There’s a hidden benefit to interactive clarification: it teaches users how to use AI better. Novice users often struggle with prompting. They don’t know what details matter. When an AI asks, "Should this reference academic sources or industry publications?", it highlights a critical variable. The user learns that source type affects output quality.

This creates a feedback loop. Over time, users internalize these questions. They start adding context to their initial prompts voluntarily. They learn that specifying audience, length, and tone yields better results. Interactive clarification systems act as mentors, incrementally improving user understanding of effective AI interaction. This democratizes advanced AI capabilities, making them accessible to people without technical expertise.

This educational aspect is crucial for widespread adoption. As AI becomes embedded in daily workflows, users need to develop fluency in communicating with machines. Interactive prompts provide a safe environment to practice this communication. Mistakes are caught early, and corrections are guided. This reduces frustration and builds confidence in the technology.

Practical Implementation: What Questions Should AI Ask?

If you’re designing or using AI systems with interactive clarification, here are the key areas to probe:

- Scope and Focus: "What specific aspect of this topic matters most to you?" This prevents the AI from covering too much ground superficially.

- Audience: "Who will read or use this output?" This determines the tone, complexity, and jargon level.

- Format and Length: "Do you need a bullet-point list, a paragraph, or a full essay?" This ensures the structure matches the need.

- Constraints: "Are there any words, topics, or perspectives you want to avoid?" This helps the AI navigate sensitive or restricted areas.

- Source Authority: "Should this rely on peer-reviewed studies, news articles, or expert opinions?" This calibrates the credibility of the information.
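The five areas above translate naturally into a question bank. The Python sketch below is illustrative rather than taken from any particular product: it asks only about areas the user hasn't covered yet, then folds the answers into a structured prompt. The area keys and the `Label: value` prompt layout are assumptions.

```python
from typing import Dict, List

# The five clarification areas, keyed by hypothetical short names.
CLARIFICATION_AREAS = {
    "scope": "What specific aspect of this topic matters most to you?",
    "audience": "Who will read or use this output?",
    "format": "Do you need a bullet-point list, a paragraph, or a full essay?",
    "constraints": "Are there any words, topics, or perspectives you want to avoid?",
    "sources": "Should this rely on peer-reviewed studies, news articles, or expert opinions?",
}

def pending_questions(answers: Dict[str, str]) -> List[str]:
    """Questions for areas the user has not answered yet, in a fixed order."""
    return [question for key, question in CLARIFICATION_AREAS.items()
            if not answers.get(key, "").strip()]

def build_prompt(request: str, answers: Dict[str, str]) -> str:
    """Combine the original request with every answered area."""
    lines = [f"Task: {request}"]
    for key in CLARIFICATION_AREAS:
        value = answers.get(key, "").strip()
        if value:
            lines.append(f"{key.capitalize()}: {value}")
    return "\n".join(lines)

answers = {"audience": "first-year nursing students", "format": "bullet-point list"}
print(len(pending_questions(answers)))  # 3
print(build_prompt("Explain how vaccines work", answers))
```

Unanswered areas are left out of the prompt rather than filled with defaults, so the model is not handed assumptions the user never made. Whether to keep asking or proceed with partial answers is a design choice.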

These questions aren’t just bureaucratic hurdles. They are strategic tools that shape the final product. By answering them, you give the AI a blueprint. The result is a response that feels custom-made, not mass-produced.

Conclusion: The Future of Human-AI Interaction

Interactive clarification prompts represent a significant evolution in how we interact with generative AI. They move us away from static, one-off commands toward dynamic, adaptive dialogues. This shift reduces hallucination risk, improves accuracy, and educates users along the way. It treats the specification problem as the core design challenge, ensuring that AI outputs genuinely address user needs.

As AI systems become more sophisticated, the ability to ask the right questions will become just as important as the ability to generate answers. We are moving toward a future where AI doesn’t just respond; it collaborates. And that collaboration starts with a simple, powerful question: "Can you tell me more?"

What is an interactive clarification prompt?

An interactive clarification prompt is a technique where an AI system asks targeted questions to users before generating a response. This helps the AI understand context, constraints, and intent, leading to more accurate and relevant outputs.

How do interactive clarification prompts reduce hallucination risk?

By prompting the user to specify details like sources, scope, and facts, the AI narrows the range of plausible answers. This reduces the likelihood of the model fabricating information to fill in gaps, because it works from precise, user-provided constraints rather than statistical guesses.

Which AI platforms currently use interactive clarification?

Perplexity AI is a notable example, integrating this feature into its Copilot tool. Other advanced AI assistants may also employ similar strategies, especially in enterprise or specialized applications requiring high precision.

Why is the Nielsen Norman Group’s research relevant here?

Their research highlights the "iceberg" nature of user queries, showing that most critical context is unstated. This validates the need for AI systems to actively uncover hidden requirements through clarification rather than assuming intent.

Does interactive clarification slow down the AI response?

It adds a brief initial step, but it saves time overall. By preventing multiple rounds of refinement due to poor initial results, users get a usable output faster. It optimizes the total interaction time, not just the first response latency.

Artificial Intelligence
