We've all seen it happen: a Large Language Model (LLM), an AI system trained on massive datasets to generate human-like text, gives you a response that looks perfect. The grammar is flawless, the tone is confident, and the logic seems to flow. Then you realize it just invented a historical fact or hallucinated a piece of code that doesn't exist. This happens because models often prioritize sounding coherent over being factually correct. To fix this, researchers are moving away from trusting the model's internal "gut feeling" and instead using external verifiers to ground the reasoning process in reality.

The Problem with Internal Reasoning

Most LLMs use a technique called Chain-of-Thought (CoT), a prompting method that encourages models to decompose complex problems into intermediate reasoning steps. While CoT makes models smarter, it doesn't actually stop them from lying. A model can take five logical-sounding steps and still arrive at a wrong answer because step two was based on a false premise. The model doesn't have a "truth sensor" to tell it when it has drifted off course.

External verifiers act as that missing sensor. Instead of letting the model guess, these systems force the AI to check its work against a trusted source (a database, a formal logic engine, or an actual image) before it moves to the next step. This shift from "self-correction" to "external verification" is where the real gains in reliability are happening.
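To make the "check before moving on" idea concrete, here is a minimal sketch. It assumes the reasoning chain is already split into plain-text steps and that the trusted source is a small lookup table; `TRUSTED_FACTS`, `lookup_verifier`, and `verified_chain` are hypothetical names for illustration, not part of any framework discussed here.

```python
from typing import Callable, Iterable, List, Optional, Tuple

# Hypothetical trusted source: a tiny fact table standing in for a database.
TRUSTED_FACTS = {
    "water boils at 100 C at sea level": True,
    "the moon is made of cheese": False,
}


def lookup_verifier(step: str) -> bool:
    """Accept a step only if the trusted source explicitly confirms it."""
    return TRUSTED_FACTS.get(step, False)


def verified_chain(
    steps: Iterable[str], verify: Callable[[str], bool]
) -> Tuple[List[str], Optional[str]]:
    """Walk a reasoning chain and stop at the first step the verifier rejects.

    Returns the accepted prefix and the rejected step (None if all passed).
    """
    accepted: List[str] = []
    for step in steps:
        if not verify(step):
            return accepted, step
        accepted.append(step)
    return accepted, None


chain = [
    "water boils at 100 C at sea level",
    "the moon is made of cheese",
    "therefore cheese boils at 100 C",
]
accepted, rejected = verified_chain(chain, lookup_verifier)
print("accepted:", accepted)
print("rejected:", rejected)
```

The point of stopping at the first rejected step is that nothing downstream of a false premise gets a chance to sound convincing. In a real system the verifier would be a database query, a solver, or a vision module rather than a dictionary.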

Grounding in Logic and Knowledge

One way to stop the drift is by turning vague language into strict logic. Take FOLK, a First-Order-Logic-Guided Knowledge-Grounded framework for explainable claim verification. Instead of asking an LLM "Is this claim true?", FOLK translates the claim into First-Order Logic (FOL) clauses. It breaks a big claim into smaller, bite-sized predicates that can be checked individually against external knowledge.

Think of it like a lawyer building a case. They don't just say "the defendant is guilty"; they list specific facts, prove each one with evidence, and then use those proven facts to reach a conclusion. By grounding each step in a knowledge base, the model can't just glide over a factual error with a confident tone.
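The decompose-then-check pattern can be sketched in a few lines. This is not FOLK's actual implementation: it assumes the claim has already been translated by hand into (relation, subject, object) predicates, and it uses a hypothetical in-memory `KNOWLEDGE_BASE` in place of real retrieval.

```python
from typing import List, Set, Tuple

Predicate = Tuple[str, str, str]  # (relation, subject, object)

# Hypothetical knowledge base of ground facts.
KNOWLEDGE_BASE: Set[Predicate] = {
    ("born_in", "Marie Curie", "Warsaw"),
    ("won", "Marie Curie", "Nobel Prize in Physics"),
}


def verify_claim(
    predicates: List[Predicate], kb: Set[Predicate]
) -> Tuple[bool, List[Tuple[Predicate, bool]]]:
    """Check each predicate against the KB; the claim holds only if all do."""
    results = [(p, p in kb) for p in predicates]
    supported = all(ok for _, ok in results)
    return supported, results


# Claim: "Marie Curie was born in Paris and won the Nobel Prize in Physics."
# Decomposed by hand here; a FOLK-style system would have an LLM do this step.
claim = [
    ("born_in", "Marie Curie", "Paris"),
    ("won", "Marie Curie", "Nobel Prize in Physics"),
]
supported, results = verify_claim(claim, KNOWLEDGE_BASE)
for predicate, ok in results:
    print(predicate, "-> supported" if ok else "-> not supported")
print("claim supported:", supported)
```

The per-predicate results double as the explanation: you can see exactly which piece of the claim failed. Note that this sketch treats anything missing from the knowledge base as unsupported, which is a simplifying assumption.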

Solving Visual Hallucinations

Reasoning gets even messier when images are involved. Vision-Language Models (VLMs) often suffer from "superficial inspection," where they glance at an image and assume things that aren't there. To fight this, CoRGI (Chain of Reasoning with Grounded Insights), a framework designed to reduce hallucinations in multimodal AI, implements post-hoc verification.

CoRGI doesn't just trust the first answer. It breaks the reasoning chain into individual statements and asks: "Is there actually visual evidence for this specific part?" It uses a Visual Evidence Verification Module (VEVM) to locate specific Regions of Interest (RoIs) in an image. If the model says "the cat is on the mat" but the VEVM can't find a mat in the image, the claim is flagged and corrected. This approach has shown massive wins; for example, using the LLaVA-1.6 backbone, it boosted accuracy on the VCR benchmark by 12.9 points.
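Here is a rough sketch of that per-statement check. It is not CoRGI's VEVM: the detector is replaced by a hypothetical dictionary of bounding boxes (`DETECTED_REGIONS`), and each statement is assumed to come with the list of objects it mentions.

```python
from typing import Dict, List, Optional, Tuple

Box = Tuple[int, int, int, int]  # (x_min, y_min, x_max, y_max) in pixels

# Stand-in for a detector's output on one image (hypothetical values).
DETECTED_REGIONS: Dict[str, Box] = {
    "cat": (40, 60, 210, 220),
    "sofa": (0, 100, 320, 240),
}


def find_region(obj: str) -> Optional[Box]:
    """Return the region of interest for an object, or None if not found."""
    return DETECTED_REGIONS.get(obj)


def check_statements(
    statements: List[Tuple[str, List[str]]]
) -> List[Tuple[str, bool, List[str]]]:
    """For each (text, mentioned objects) pair, report whether every
    mentioned object has visual evidence, plus the objects that are missing."""
    report = []
    for text, objects in statements:
        missing = [o for o in objects if find_region(o) is None]
        report.append((text, not missing, missing))
    return report


report = check_statements([
    ("the cat is on the mat", ["cat", "mat"]),
    ("there is a cat", ["cat"]),
])
for text, grounded, missing in report:
    print(text, "| grounded:", grounded, "| missing:", missing)
```

A flagged statement would then be sent back for correction instead of being passed along as part of the final answer.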

| Framework | Primary Verifier | Best Use Case | Key Strength |
|---|---|---|---|
| FOLK | First-Order Logic | Claim Verification | High explainability |
| CoRGI | Visual Evidence (VEVM) | Multimodal Reasoning | Reduces image hallucinations |
| GRiD | Dependency Graphs | Complex Logical Tasks | Internal consistency |

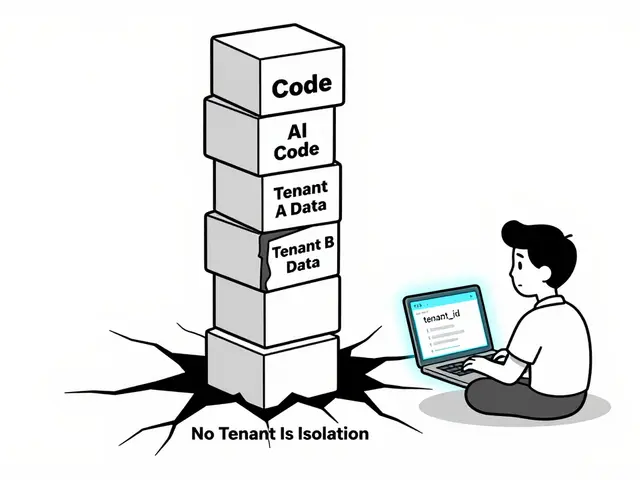

Dependency-Aware Reasoning

Sometimes the problem isn't a single wrong fact, but a breakdown in the sequence of logic. GRiD, a Grounded Reasoning in Dependency framework, treats reasoning as a graph of interconnected nodes rather than a list. It creates a map of dependencies: step C cannot be true unless steps A and B are verified first.

By enforcing these logical dependencies, GRiD prevents the model from making "lucky guesses" that are internally inconsistent. It's a lightweight module that works during inference, meaning you don't have to spend millions of dollars retraining the model to make it more logical. It has proven highly effective on benchmarks like StrategyQA and TruthfulQA, where the nuance of the relationship between facts is everything.
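The "C needs A and B" rule is easy to sketch with a topological sort. This is an illustration of the dependency idea only, not GRiD's code; `verify_graph` and the per-step `check` function are hypothetical, and the sketch relies on `graphlib` from the Python standard library (3.9+).

```python
from graphlib import TopologicalSorter
from typing import Callable, Dict, List


def verify_graph(
    deps: Dict[str, List[str]], check: Callable[[str], bool]
) -> Dict[str, bool]:
    """Accept a step only if all of its prerequisites were accepted
    and the step itself passes its own check."""
    status: Dict[str, bool] = {}
    # static_order() yields every step after all of its prerequisites.
    for step in TopologicalSorter(deps).static_order():
        parents_ok = all(status[p] for p in deps.get(step, []))
        status[step] = parents_ok and check(step)
    return status


# Each step maps to the steps it depends on.
deps = {"A": [], "B": [], "C": ["A", "B"], "D": ["C"]}

# Hypothetical per-step results: B fails, everything else looks fine in isolation.
locally_valid = {"A": True, "B": False, "C": True, "D": True}

status = verify_graph(deps, lambda step: locally_valid[step])
print(status)
```

Steps C and D look fine on their own, but they are rejected because B failed upstream. That is exactly the kind of "lucky guess" a flat list of steps would let through.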

The Role of Model Size and Expert Knowledge

You might wonder if small models can do this too. Small Language Models (SLMs), compact AI models designed to run on limited hardware while maintaining specific reasoning capabilities, definitely struggle more with self-correction than their giant counterparts. However, research shows that if you pair an SLM with a strong external verifier (like GPT-4 or an oracle label), the small model can actually self-correct its math and commonsense reasoning effectively. The secret isn't just a bigger brain; it's a better teacher/verifier.
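A toy version of that verifier-driven correction loop might look like this. The small model is replaced by a hypothetical stub that walks through a fixed list of drafts, and the verifier is an oracle label; both are assumptions for illustration.

```python
from typing import Callable, List, Optional, Tuple


def make_stub_model(drafts: List[str]) -> Callable[[str, List[str]], str]:
    """Hypothetical stand-in for a small model: returns the first draft
    that has not already been rejected by the verifier."""
    def model(question: str, rejected: List[str]) -> str:
        for draft in drafts:
            if draft not in rejected:
                return draft
        return drafts[-1]
    return model


def self_correct(
    question: str,
    model: Callable[[str, List[str]], str],
    verify: Callable[[str], bool],
    max_rounds: int = 3,
) -> Tuple[Optional[str], List[str]]:
    """Ask the model, let the external verifier judge the answer, and retry
    with the rejected answers as feedback. Returns (answer or None, rejected)."""
    rejected: List[str] = []
    for _ in range(max_rounds):
        answer = model(question, rejected)
        if verify(answer):
            return answer, rejected
        rejected.append(answer)
    return None, rejected


model = make_stub_model(["54", "56"])
oracle = lambda answer: answer == "56"  # oracle label for 7 * 8

answer, rejected = self_correct("What is 7 * 8?", model, oracle)
print("answer:", answer, "| rejected along the way:", rejected)
```

The model never judges its own output here. It only proposes, and the decision about whether to stop or retry belongs entirely to the verifier.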

We're also seeing a rise in psychologically-grounded reasoning. This is where AI is paired with human causal graphs. In tasks like troubleshooting a machine, the AI doesn't just predict the next word; it checks its suggested action against a human-designed mental model of how the machine actually works. If the AI suggests a step that is causally impossible, the external human-expert model blocks it. This setup is often modeled as a Partially Observable Markov Decision Process (POMDP), which represents decision-making when the true state of the environment is uncertain.
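As a rough sketch of both ideas, the snippet below blocks actions that have no causal path to the observed symptom and applies a single Bayes-rule belief update of the kind a POMDP uses. The machine, its `CAUSAL_GRAPH`, and all probabilities are hypothetical.

```python
from typing import Dict, List

# Hypothetical expert causal graph for a machine: cause -> direct effects.
CAUSAL_GRAPH: Dict[str, List[str]] = {
    "power_supply": ["motor", "display"],
    "motor": ["conveyor"],
    "display": [],
    "conveyor": [],
}


def can_cause(cause: str, symptom: str, graph: Dict[str, List[str]]) -> bool:
    """Depth-first search: is there a causal path from cause to symptom?"""
    stack, seen = [cause], set()
    while stack:
        node = stack.pop()
        if node == symptom:
            return True
        if node in seen:
            continue
        seen.add(node)
        stack.extend(graph.get(node, []))
    return False


def update_belief(
    belief: Dict[str, float], likelihood: Dict[str, float]
) -> Dict[str, float]:
    """Bayes rule: posterior is proportional to prior * P(observation | fault)."""
    unnormalized = {fault: belief[fault] * likelihood[fault] for fault in belief}
    total = sum(unnormalized.values())
    return {fault: value / total for fault, value in unnormalized.items()}


# The conveyor has stopped. Which suggested repairs are causally possible?
print(can_cause("power_supply", "conveyor", CAUSAL_GRAPH))  # allowed
print(can_cause("display", "conveyor", CAUSAL_GRAPH))       # blocked

# Observation: the display still works, which is unlikely if the power supply failed.
prior = {"power_supply": 0.5, "motor": 0.5}
p_display_works = {"power_supply": 0.1, "motor": 0.9}
posterior = update_belief(prior, p_display_works)
print(posterior)
```

The causal check plays the role of the expert model that vetoes impossible steps, while the belief update captures the uncertainty about which fault is actually present.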

Why Self-Critique Isn't Enough

Some people suggest just telling the model to "think again" or "critique your own answer." There's a catch, and it is the motivation behind approaches like LLM-Modulo, which pair the model with external critics: models are generally terrible at self-reflection if they don't already know the answer. If a model doesn't know the capital of Kazakhstan, it can't "critique" its way into finding the right answer. It will just hallucinate a more confident wrong answer.

This is why external, domain-specific verifiers are non-negotiable for high-stakes work. Whether it's a formal symbolic language for math or a visual checker for medicine, the verifier must exist outside the generative process of the LLM to be effective.

What exactly is "grounding" in the context of LLMs?

Grounding is the process of linking the abstract tokens generated by an AI to real-world data or formal rules. Instead of the model relying on probability to predict the next word, grounding forces the model to verify its claims against an external source of truth, such as a knowledge base, a set of logical axioms, or visual evidence from an image.

Can external verifiers work in real-time during a chat?

Yes. Frameworks like GRiD are designed as lightweight modules that operate during inference (the time when the model is generating a response). While this adds a small amount of latency, it significantly reduces the chance of the model providing a confidently wrong answer, making the interaction more reliable for the end user.

Why is CoRGI better for vision models than standard prompting?

Standard prompting often leads to "hallucinated" descriptions where the model describes things it doesn't actually see. CoRGI uses a Visual Evidence Verification Module to physically locate the object in the image. If the object isn't there, the reasoning step is rejected, forcing the model to correct its path based on actual pixels rather than linguistic patterns.

Do I need to retrain my model to use these verifiers?

Not necessarily. Many of the best grounding frameworks are "post-hoc," meaning they act as a filter or a check after the model has generated a draft of its reasoning. This allows you to add a layer of reliability to existing models like LLaVA or Qwen without the massive cost of fine-tuning or retraining.

Which is more effective: self-verification or external verification?

External verification is consistently more effective. LLMs struggle with a "blind spot" where they cannot recognize their own errors because the error is built into their internal probability map. An external verifier provides an independent perspective that doesn't share the model's biases or gaps in knowledge.

Next Steps for Implementation

If you're building a system that requires high accuracy, start by identifying where your model fails most. If it's hallucinating facts, look into FOLK-style logic grounding. If it's misinterpreting images, a post-hoc visual verifier like CoRGI is your best bet. For complex multi-step problems, implementing a dependency graph will ensure that your model doesn't skip critical logical steps. The goal is to move from a system that "sounds right" to one that can prove it is right.

Artificial Intelligence
