Tag: External Verifiers

Explore how external verifiers curb LLM hallucinations, using frameworks such as FOLK, CoRGI, and GRiD to keep AI reasoning factually grounded.

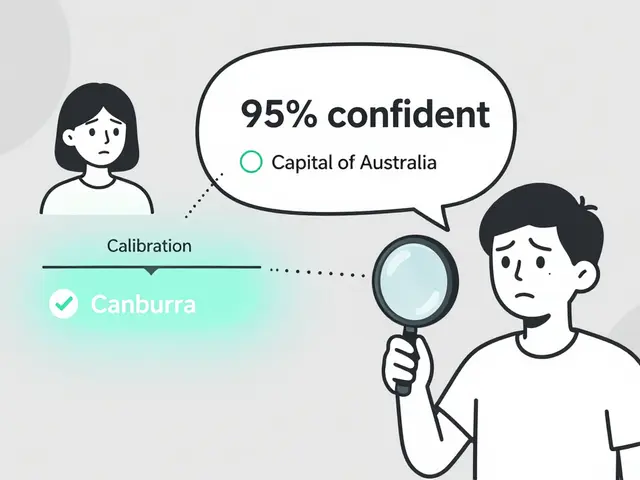

Token Probability Calibration in Large Language Models: How to Fix Overconfidence in AI Responses

Jan 16, 2026

Artificial Intelligence
