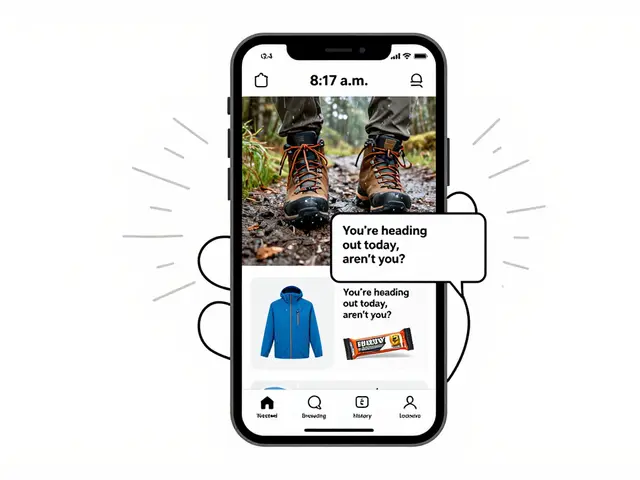

Imagine building an entire app in an afternoon because you're "vibing" with an AI agent. You're moving fast, the code is flowing, and the prototype looks great. But here's the scary part: while you were focused on the features, did the AI accidentally leave your database open to the public? Did it hallucinate a login check that doesn't actually block anyone? This is the reality of vibe coding: rapid development using AI assistants without the traditional safety nets of a professional DevSecOps pipeline.

When we skip the formal gatekeeping, we create a "governance gap." In a traditional setup, a senior dev or a security tool would catch a missing authorization check. In a vibe coding session, you're often trusting the AI's logic implicitly. The problem is that AI agents are notorious for partial implementations. They might write the code to check if a user is logged in, but forget to check if that user actually has permission to delete a specific record. This is where access control and repository scope become the difference between a successful launch and a catastrophic data breach.

The Authentication Gap in AI-Generated Code

You can't have access control if you don't know who the user is. In the world of vibe coding, there is a dangerous tendency to let the AI handle authentication logic. Authentication is the process of verifying the identity of a user or service. When an AI writes this logic, it often creates "happy path" code: it works if everything goes right, but fails miserably when someone tries to bypass it.

A common mistake is letting the AI generate a simple middleware check that can be easily skipped by a clever request to a backend endpoint. To fix this, stop relying on the AI for the primary shield. Instead, implement authentication at the infrastructure level. For example, NGINX is a high-performance reverse proxy and web server that can handle requests before they hit the application. By putting a reverse proxy in front of your vibe-coded app and enforcing authentication there, you ensure that a non-authenticated request never even triggers a single line of your AI-generated code. It's like having a bouncer at the door instead of hoping the guests follow the house rules once they're inside.

Implementing Robust Access Control

Once you know who the user is, you need to decide what they can do. This is where Role-Based Access Control (RBAC) comes in. RBAC is a method of restricting system access to authorized users based on their assigned roles within an organization. If you're prompting an AI to build a dashboard, don't just ask for "a way to manage users." Be explicit. Ask it to generate code that strictly separates "Admin," "Editor," and "Viewer" roles.
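
To make the role separation concrete, here is a minimal sketch of a deny-by-default permission table. The three roles come from the paragraph above; the permission names (`read`, `edit`, `manage_users`) and the `is_allowed` helper are assumptions made up for illustration, not part of any particular framework.

```python
from enum import Enum


class Role(Enum):
    ADMIN = "admin"
    EDITOR = "editor"
    VIEWER = "viewer"


# Hypothetical permission names: each role lists exactly what it may do.
ROLE_PERMISSIONS = {
    Role.ADMIN: frozenset({"read", "edit", "manage_users"}),
    Role.EDITOR: frozenset({"read", "edit"}),
    Role.VIEWER: frozenset({"read"}),
}


def is_allowed(role: Role, permission: str) -> bool:
    """Deny by default: anything not explicitly granted is rejected."""
    return permission in ROLE_PERMISSIONS.get(role, frozenset())
```

Keeping the whole matrix in one place makes it easy to audit by eye, which is harder when an AI scatters role checks across individual handlers.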

Even with a good prompt, you have to watch out for Broken Object-Level Authorization (BOLA). This happens when a user can access another user's data just by changing an ID in a URL (like changing /user/123 to /user/124). AI agents frequently miss this detail because they focus on the visual functionality rather than the underlying security logic. You must manually verify that every single endpoint checks not just if the user is an "Admin," but if they actually own the piece of data they are trying to modify.
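
The ownership check described above can be sketched as follows. This is an illustration under stated assumptions: the `Record` type, the in-memory `RECORDS` dictionary standing in for a database, and the `Forbidden` exception are all hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    record_id: int
    owner_id: int


class Forbidden(Exception):
    """Raised when the caller may not access the requested object."""


# In-memory stand-in for a database table (hypothetical data).
RECORDS = {
    123: Record(record_id=123, owner_id=1),
    124: Record(record_id=124, owner_id=2),
}


def get_record_for_user(current_user_id: int, record_id: int) -> Record:
    record = RECORDS.get(record_id)
    # Treat "missing" and "not yours" identically so IDs cannot be probed.
    if record is None or record.owner_id != current_user_id:
        raise Forbidden(f"user {current_user_id} may not access record {record_id}")
    return record
```

With this shape, user 1 requesting record 124 gets the same refusal as a request for a record that does not exist, so changing the ID in the URL reveals nothing.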

| Feature | Traditional DevSecOps | Vibe Coding Approach | Recommended Fix |
|---|---|---|---|
| Auth Logic | Peer-reviewed modules | AI-generated snippets | Move to Reverse Proxy (e.g., NGINX) |
| Permissions | Strict RBAC matrices | Implicit/Partial logic | Explicit Role Prompting + Manual Audit |
| Validation | CI/CD Automated Tests | Manual "Vibe Check" | Runtime Authorization Testing |

Solving the Repository Scope Dilemma

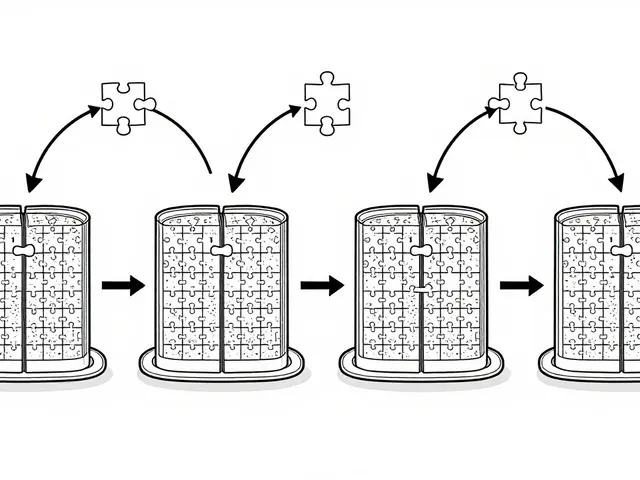

In a professional setting, code lives in a centralized repository with clear visibility. In vibe coding, things often end up in "a random folder on my desktop" or a loose collection of local files. What goes missing is repository scope: the definition of which files, folders, and environments an AI agent or developer has permission to read and write. When the AI doesn't have a clear boundary, it might accidentally index sensitive configuration files or push secrets to a public branch.

To regain control, you need to move from "gatekeeping" to "enablement." Instead of trying to stop the AI from accessing things, give it a map. Use .coderules files or a well-structured README in your repository. These act as a set of instructions that the AI reads before it writes a single line of code. By telling the AI, "Never touch the /secrets folder" or "Always use the AuthService for permission checks," you integrate your security policy directly into the AI's context.
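
Instructions in a rules file are advisory, so it can help to back them with a hard check in whatever wrapper applies the agent's file changes. The sketch below is one possible shape for that check, not a feature of any specific tool: the `ALLOWED_DIRS` and `DENIED_DIRS` values and the `in_scope` helper are hypothetical, and paths are assumed to be relative to the repository root.

```python
from pathlib import PurePosixPath

# Hypothetical policy, relative to the repository root.
ALLOWED_DIRS = (PurePosixPath("src"), PurePosixPath("tests"))
DENIED_DIRS = (PurePosixPath("secrets"),)


def _is_under(path: PurePosixPath, directory: PurePosixPath) -> bool:
    """True if path is the directory itself or sits somewhere below it."""
    return path == directory or directory in path.parents


def in_scope(relative_path: str) -> bool:
    path = PurePosixPath(relative_path)
    # Reject absolute paths and any attempt to climb out with "..".
    if path.is_absolute() or ".." in path.parts:
        return False
    if any(_is_under(path, d) for d in DENIED_DIRS):
        return False
    # Deny by default: only explicitly allowed directories are writable.
    return any(_is_under(path, d) for d in ALLOWED_DIRS)
```

Under this policy `src/app.py` is in scope, while `secrets/prod.env`, `src/../secrets/prod.env`, and anything outside the listed directories are rejected.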

Data Privacy and the Danger of "Secret Leakage"

Privacy isn't just about who can log in; it's about where the data goes. AI agents often suggest convenient but insecure ways to handle data. For instance, an AI might suggest hardcoding an API key directly into a variable to "get things moving." This is a disaster waiting to happen. You need strict secrets management: a strategy for securely storing and managing sensitive credentials like API keys, passwords, and certificates. Always use environment variables and a dedicated vault, and explicitly tell your AI agent to never generate hardcoded keys.
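
As a minimal sketch of the environment-variable approach, the helper below reads a credential at startup and fails loudly if it is missing, instead of falling back to a hardcoded default. The `require_secret` function, the `MissingSecretError` class, and the variable name `PAYMENTS_API_KEY` are hypothetical.

```python
import os


class MissingSecretError(RuntimeError):
    """Raised when a required credential is absent from the environment."""


def require_secret(name: str) -> str:
    # Never fall back to a literal default: a missing secret should stop the app.
    value = os.environ.get(name, "").strip()
    if not value:
        raise MissingSecretError(f"environment variable {name} is not set")
    return value
```

A call such as `require_secret("PAYMENTS_API_KEY")` at startup means a misconfigured deployment crashes immediately rather than running with a placeholder key the AI left behind.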

Then there's the issue of CORS (Cross-Origin Resource Sharing), a security mechanism that allows a server to indicate which origins are allowed to share its resources. AI agents love to use the wildcard * in CORS settings because it makes the app "just work" without errors. But a wildcard means any website on the internet can make cross-origin requests to your backend and read the responses. Always double-check the CORS settings generated by your tools and restrict them to your specific trusted domains.
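
A framework-independent sketch of the allowlist idea: echo the request's origin back only when it is on a known list, and send no CORS header otherwise. The domains in `ALLOWED_ORIGINS` and the `cors_headers` helper are placeholders for illustration; most web frameworks offer an equivalent setting that should be preferred over hand-rolled logic.

```python
from typing import Dict, Optional

# Placeholder domains: replace with the origins you actually trust.
ALLOWED_ORIGINS = frozenset({
    "https://app.example.com",
    "https://admin.example.com",
})


def cors_headers(request_origin: Optional[str]) -> Dict[str, str]:
    """Return CORS response headers for a request, or none if untrusted."""
    if request_origin in ALLOWED_ORIGINS:
        return {
            "Access-Control-Allow-Origin": request_origin,
            # Tell caches that the response varies by requesting origin.
            "Vary": "Origin",
        }
    # No header at all: the browser blocks cross-origin reads by default.
    return {}
```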

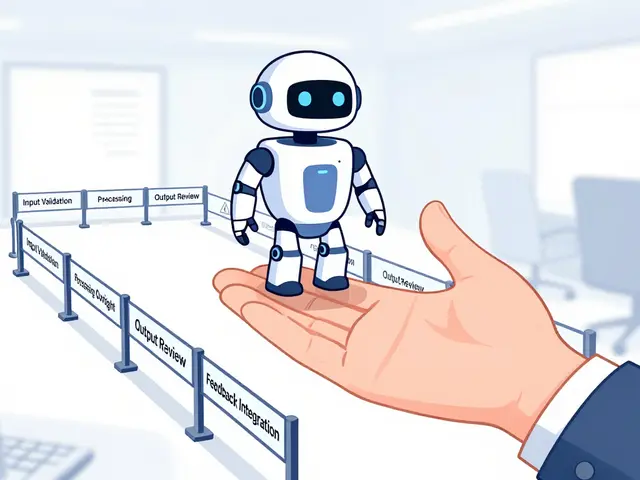

Securing the AI Agents Themselves

We often talk about the code the AI writes, but what about the AI itself? Tools like Claude Code or GitHub Copilot often operate within GitHub Actions, an automation platform that allows software workflows to be run directly in a GitHub repository. In that setting the AI agent typically holds a GITHUB_TOKEN and, depending on the permissions the workflow grants, can create branches, push commits, and install dependencies autonomously.

This creates a massive supply chain risk. If an AI agent is tricked into installing a malicious package, it can exfiltrate your entire source code or steal your credentials. Since you can't always see what processes an AI agent spawns at runtime, you need Egress Policy Enforcement. This means blocking unauthorized outbound traffic at the network layer. If the AI tries to send your data to an unknown IP address in another country, the network should shut it down regardless of what the AI "thinks" is correct.
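
Real egress enforcement belongs in a firewall or network proxy, outside the agent's control, but the decision it makes is a simple allowlist lookup. The sketch below illustrates only that decision logic; the hosts in `ALLOWED_HOSTS` and the `egress_allowed` function are assumptions chosen for the example.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist: the only hosts the CI job is expected to contact.
ALLOWED_HOSTS = frozenset({
    "api.github.com",
    "pypi.org",
    "files.pythonhosted.org",
})


def egress_allowed(url: str) -> bool:
    """Allow only HTTPS requests to an exact host on the allowlist."""
    try:
        parts = urlsplit(url)
        host = (parts.hostname or "").lower()
    except ValueError:
        # Malformed URLs are denied rather than guessed at.
        return False
    return parts.scheme == "https" and host in ALLOWED_HOSTS
```

Matching on the exact hostname matters: a lookalike such as `pypi.org.evil.example` is rejected because it is not literally on the list.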

The Vibe Coder's Security Checklist

If you're building rapidly with AI, don't let the speed blind you to the risks. Use this checklist to ensure your project doesn't become a case study in how to get hacked:

- Authentication First: Is there a reverse proxy or a mandatory auth layer before the AI-generated logic starts?

- Role Verification: Did you check if a regular user can access the /admin endpoint just by guessing the URL?

- BOLA Test: Can you access someone else's data by changing an ID in the request?

- Secrets Audit: Search your entire repository for strings like API_KEY or password to ensure nothing is hardcoded.

- CORS Lockdown: Replace any * in your CORS configuration with your actual domain names.

- Egress Control: If using AI agents in CI/CD, are outbound connections restricted to known, trusted endpoints?
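
The secrets audit from the checklist can be roughed out in a few lines. This is a deliberately naive sketch that flags only quoted literals assigned to names containing `API_KEY` or `password`; the pattern and the function names are assumptions, and a dedicated scanner will catch far more.

```python
import re
from pathlib import Path

# Flags lines like API_KEY = "..." or password: '...' (case-insensitive).
HARDCODED = re.compile(r"""(?i)(api_key|password)\s*[:=]\s*["'][^"']+["']""")


def find_hardcoded_secrets(text: str) -> list:
    """Return (line_number, line) pairs that look like hardcoded credentials."""
    hits = []
    for number, line in enumerate(text.splitlines(), start=1):
        if HARDCODED.search(line):
            hits.append((number, line.strip()))
    return hits


def scan_repository(root: str) -> dict:
    """Scan every readable text file under root, skipping the .git directory."""
    results = {}
    for path in Path(root).rglob("*"):
        if not path.is_file() or ".git" in path.parts:
            continue
        try:
            text = path.read_text(encoding="utf-8")
        except (UnicodeDecodeError, OSError):
            continue  # Binary or unreadable file: nothing to scan.
        hits = find_hardcoded_secrets(text)
        if hits:
            results[str(path)] = hits
    return results
```

Calling `scan_repository(".")` from the project root returns a mapping from file path to suspicious lines. A line that reads the key from `os.environ` is not flagged, since no quoted literal follows the assignment.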

What is the biggest security risk with vibe coding?

The biggest risk is the "governance gap." Because vibe coding bypasses traditional code reviews and security pipelines, AI-generated hallucinations, like missing authorization checks or insecure default settings, go unnoticed until they are exploited in production.

How can I stop AI from hardcoding secrets in my app?

The most effective way is to provide the AI with a .coderules file or a system prompt that explicitly forbids hardcoding credentials. Combine this with a tool like GitLeaks or TruffleHog to scan your commits for any accidentally leaked secrets.

Does using a reverse proxy really replace AI-generated auth?

It doesn't replace it entirely, but it adds a critical layer of defense. By handling authentication in a tool like NGINX, you ensure that no request even reaches your AI-generated application code unless it has been verified, eliminating the risk of an AI "forgetting" to add an auth check to a new endpoint.

What is BOLA and why does it happen in AI code?

BOLA (Broken Object-Level Authorization) happens when an app checks if a user is logged in, but doesn't check if they own the specific object they are requesting. AI agents often overlook this because they focus on the general function (e.g., "get user profile") rather than the specific permission (e.g., "get ONLY MY profile").

How do I limit the scope of an AI coding agent?

Limit the agent's access by using a specific repository structure and providing a clear .coderules file. Furthermore, if the agent is running in a CI/CD environment like GitHub Actions, implement strict network egress policies to prevent the agent from sending data to unauthorized external servers.
