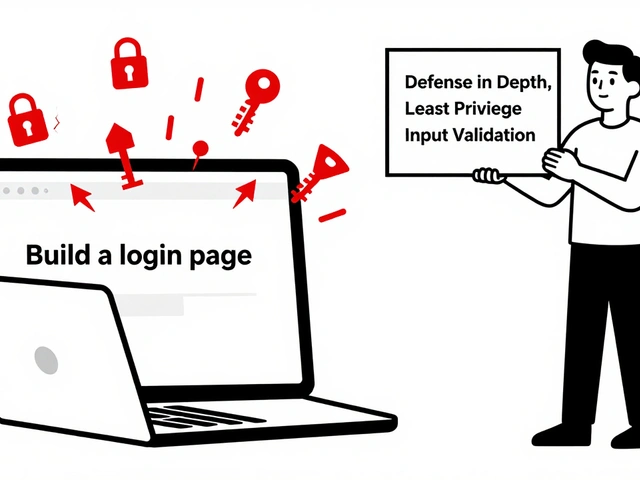

Tag: agent security

Sandboxing the external actions of LLM agents limits dangerous tool access by isolating the processes that run them. Firecracker, gVisor, and Nix offer different trade-offs between security and performance. Learn which method fits your use case.

