Tag: AI in coding

Domain-specialized LLMs such as CodeLlama, Med-PaLM 2, and MathGLM outperform general-purpose models in coding, medicine, and math. Learn how they work, how accurate they are in real-world use, what they cost, and why they're replacing generic models in professional settings.

Recent posts

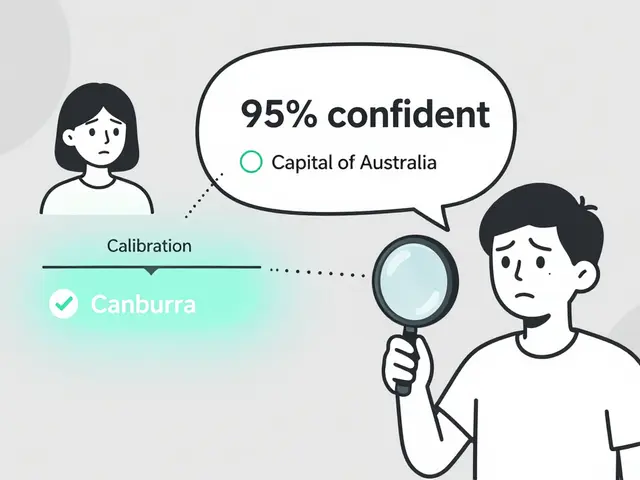

Token Probability Calibration in Large Language Models: How to Fix Overconfidence in AI Responses

Jan 16, 2026

Template Repos with Pre-Approved Dependencies for Vibe Coding: Setup, Best Picks, and Real Risks

Feb 20, 2026

Artificial Intelligence
