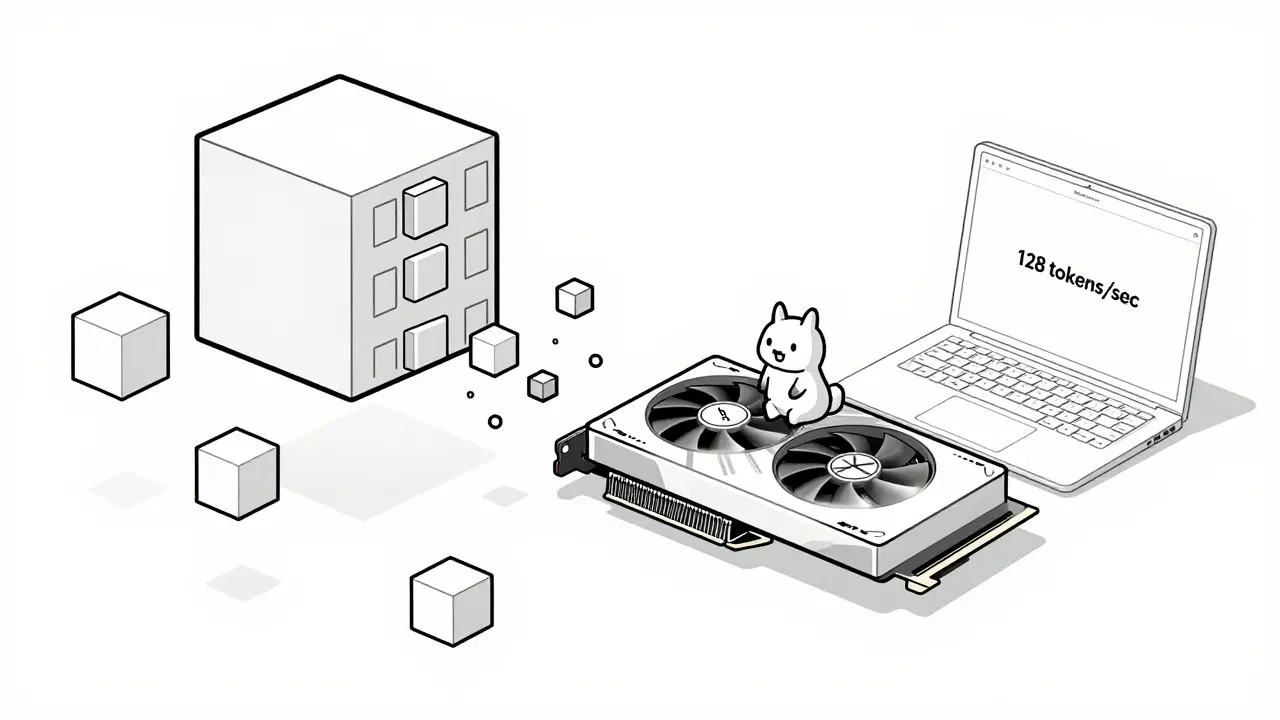

Tag: CPU inference

Learn how hardware-friendly LLM compression lets you run powerful AI models on consumer GPUs and CPUs. Discover quantization, sparsity, and real-world performance gains without needing a data center.

Recent posts

Human Oversight in Generative AI: Review Workflows and Escalation Policies That Actually Work

Mar 24, 2026

Artificial Intelligence