Tag: gpt-oss-120b

Learn how to scale open-source LLMs in 2026. Explore hardware needs for gpt-oss-120b, the role of SLMs, and professional serving stacks using vLLM and SGLang.

Recent posts

Retrieval-Augmented Generation for Generative AI: Grounding Outputs in Verified Sources

Mar 28, 2026

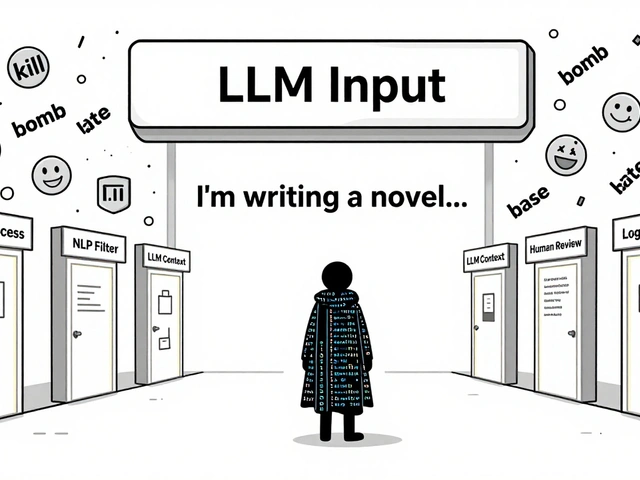

Content Moderation Pipelines for User-Generated Inputs to LLMs: How to Prevent Harmful Content in Real Time

Aug 2, 2025

Human-in-the-Loop Operations for Generative AI: Review, Approval, and Exceptions Strategy Guide

Mar 26, 2026

Artificial Intelligence
