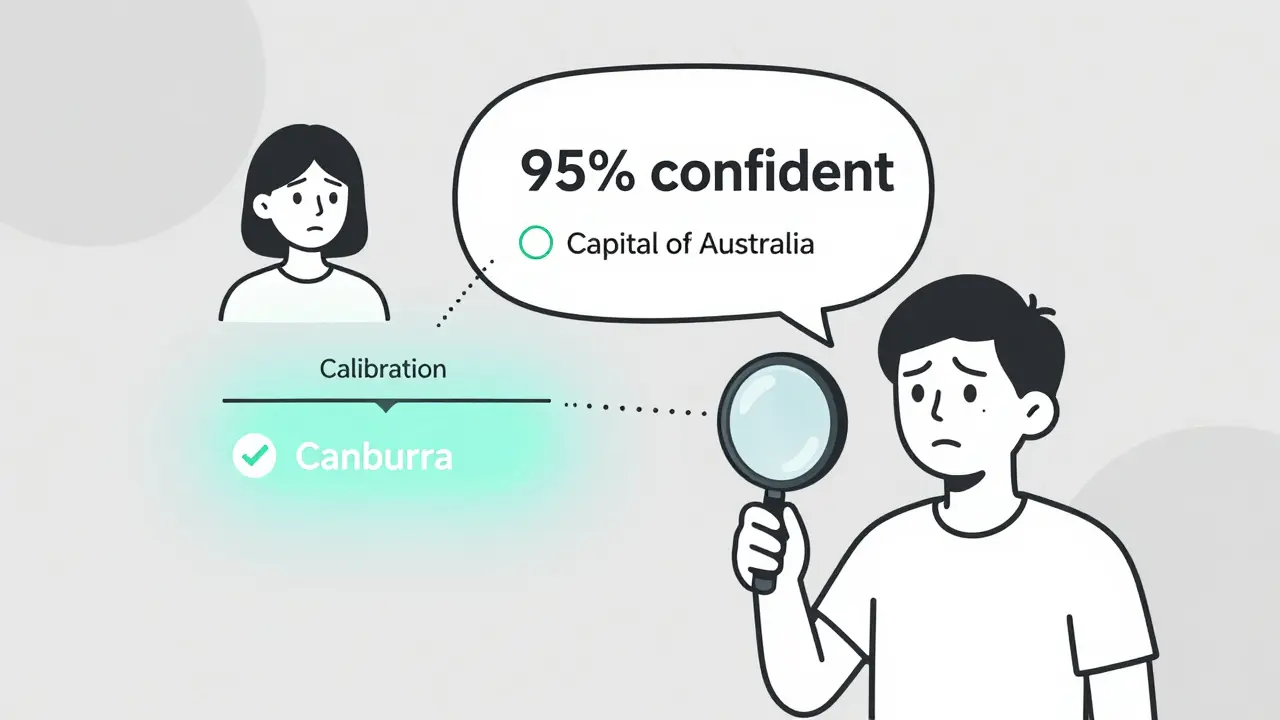

Tag: language model calibration

Most LLMs are overconfident in their answers. Token probability calibration addresses this by aligning a model's confidence scores with its actual accuracy. Learn how it works, which models are best, and how to apply it.

Dec 19, 2025

Artificial Intelligence
