Tag: LLM optimization

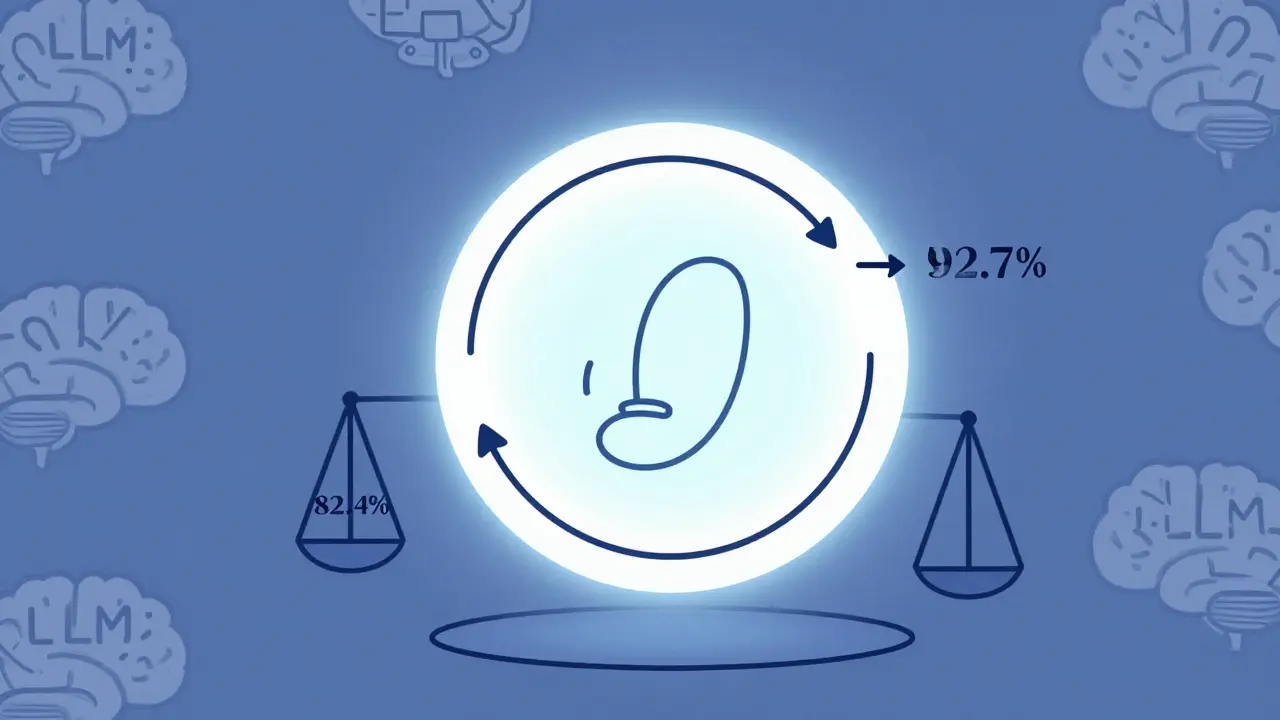

Reinforcement Learning from Prompts (RLfP) automates prompt optimization using feedback loops, boosting LLM accuracy by up to 10% on key benchmarks. Learn how PRewrite and PRL work, their real-world gains, hidden costs, and who should use them.

Artificial Intelligence