Tag: NVIDIA drivers

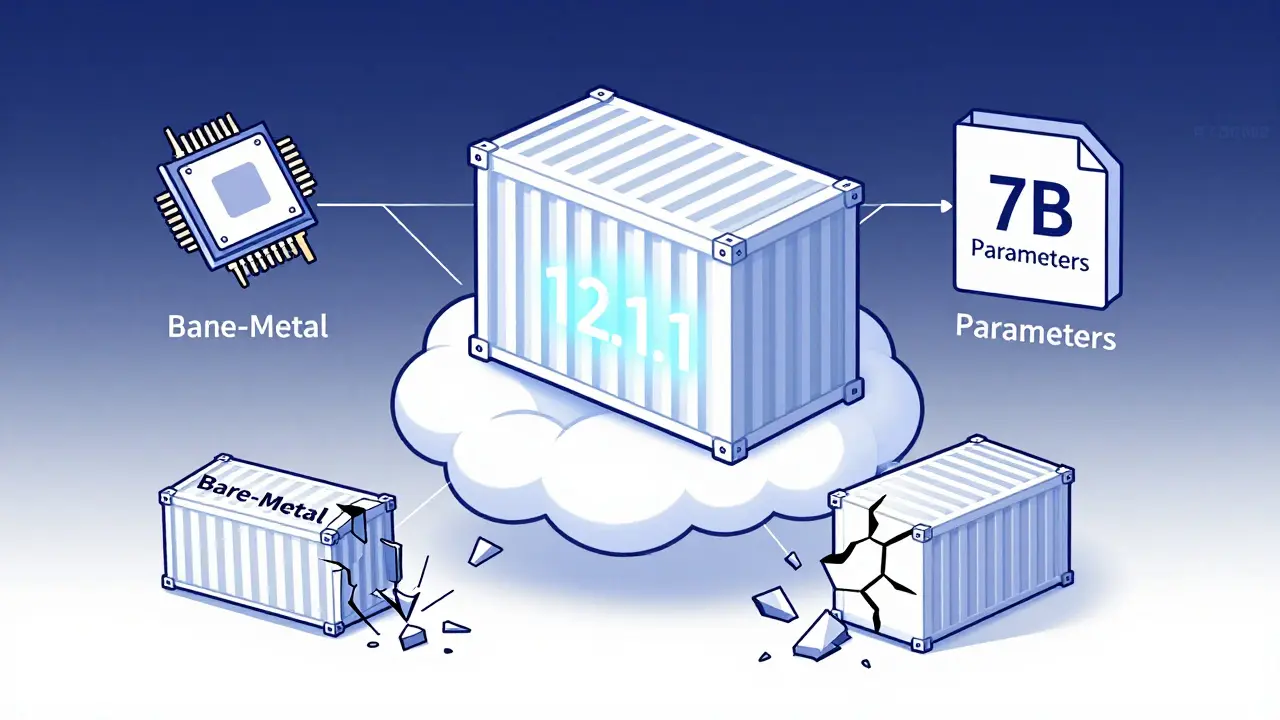

Containerizing large language models requires precise CUDA version matching, optimized Docker images, and secure model formats like .safetensors. Learn how to reduce startup time, shrink image size, and avoid the most common deployment failures.
