Tag: parameter-efficient fine-tuning

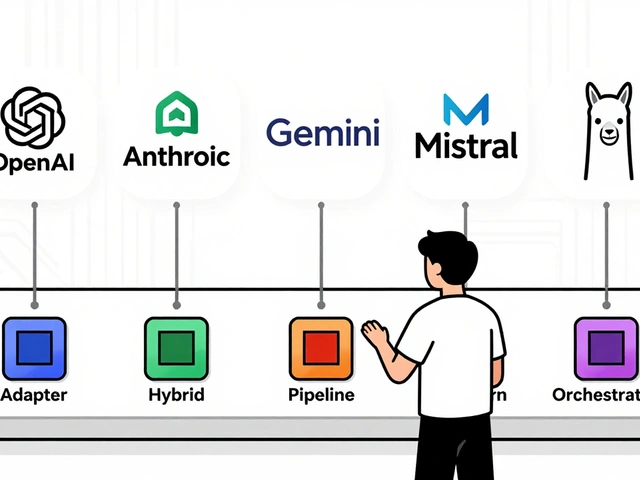

Explore proven techniques to prevent catastrophic forgetting in LLM fine-tuning. We analyze LoRA, EWC, FIP, and hybrid methods to help you preserve model knowledge.

Recent posts

Customer Journey Personalization Using Generative AI: Real-Time Segmentation and Content

Mar 17, 2026

Artificial Intelligence
