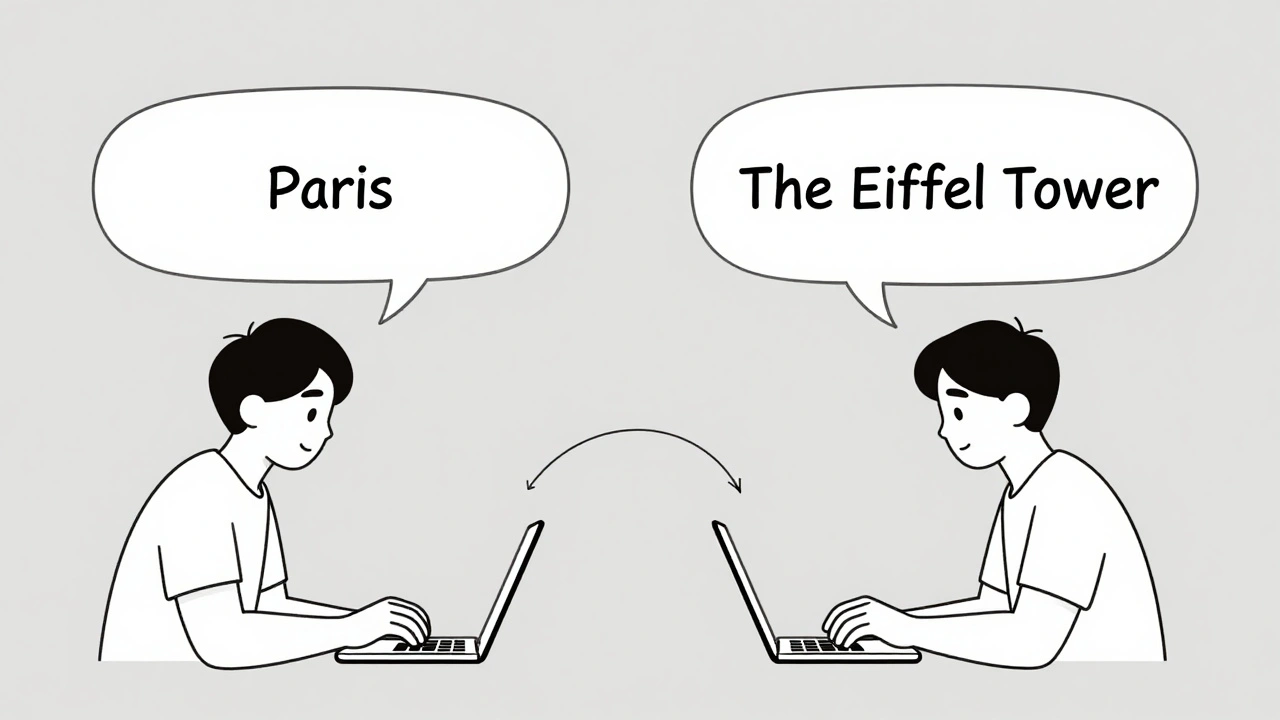

Tag: prompt sensitivity

Small changes in how you phrase a question can drastically alter an AI's response. Learn why prompt sensitivity makes LLMs unpredictable, how it breaks real applications, and proven ways to get consistent, reliable outputs.

Recent posts

Human-in-the-Loop Operations for Generative AI: Review, Approval, and Exceptions Strategy Guide

Mar 26, 2026

Calibration and Outlier Handling in Quantized LLMs: How to Keep Accuracy When Compressing Models

Jul 6, 2025

Artificial Intelligence