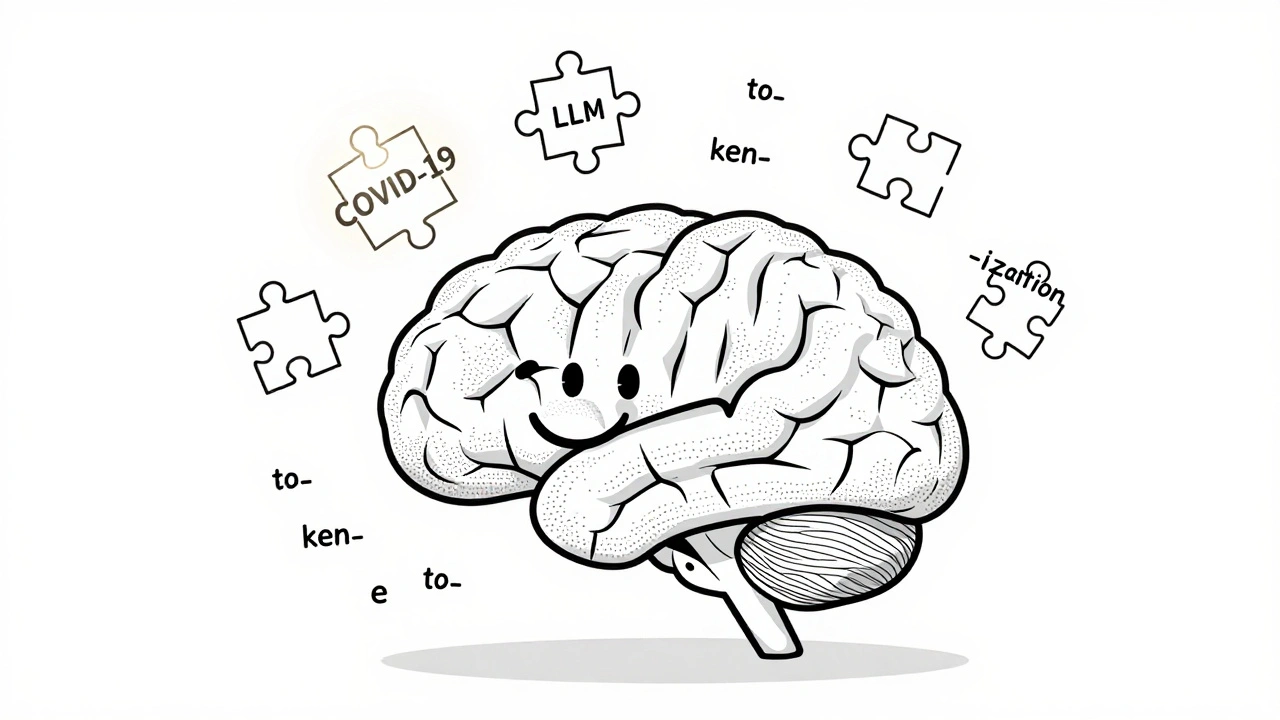

Tag: tokenization

Despite the rise of massive language models, tokenization remains essential for accuracy, efficiency, and cost control. Learn why subword methods like BPE and SentencePiece still shape how LLMs understand language.

Categories

Archives

Recent-posts

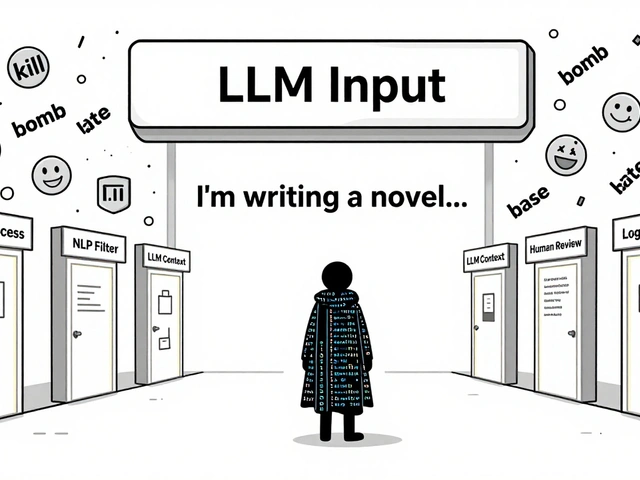

Content Moderation Pipelines for User-Generated Inputs to LLMs: How to Prevent Harmful Content in Real Time

Aug, 2 2025

Artificial Intelligence

Artificial Intelligence