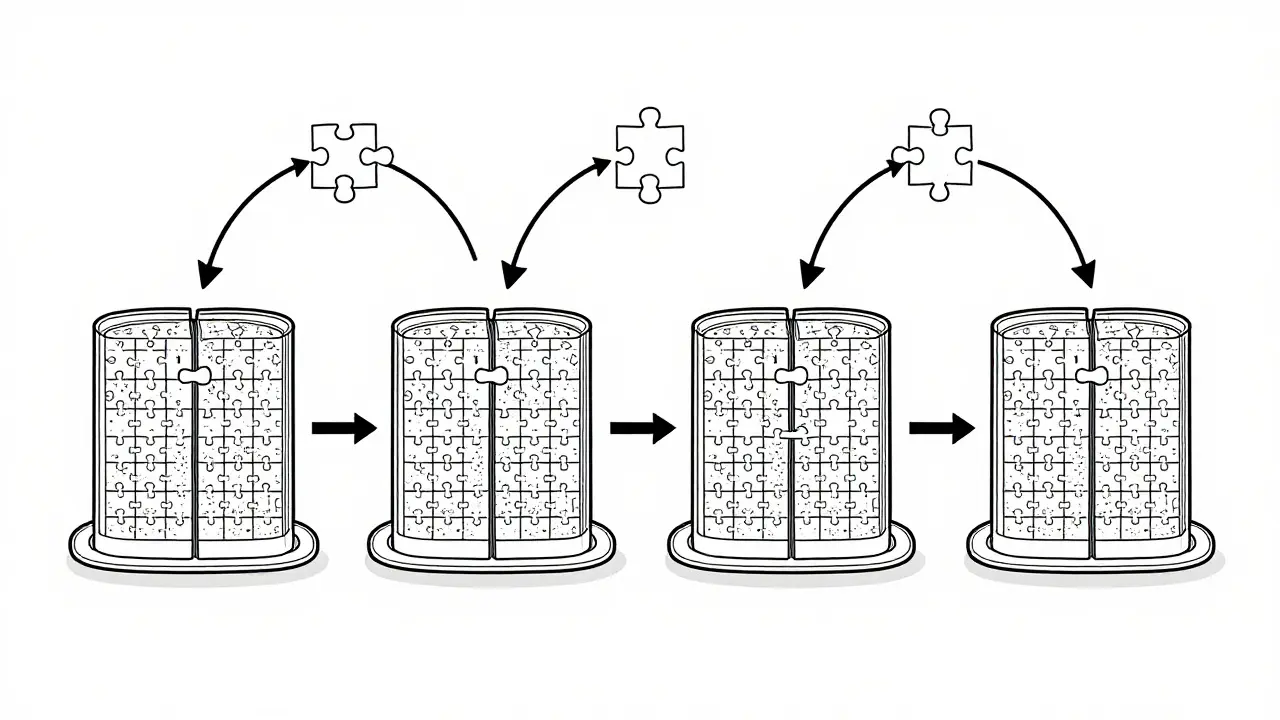

Tag: model parallelism

Tensor parallelism lets you run massive LLMs across multiple GPUs by splitting each layer's weight matrices across devices. Learn how it works, why NVLink matters, which frameworks support it, and how to avoid common deployment pitfalls.

Recent posts

How to Optimize Your Contact Center with Generative AI: Summaries, Sentiment, and Routing

Apr 19, 2026

Artificial Intelligence
