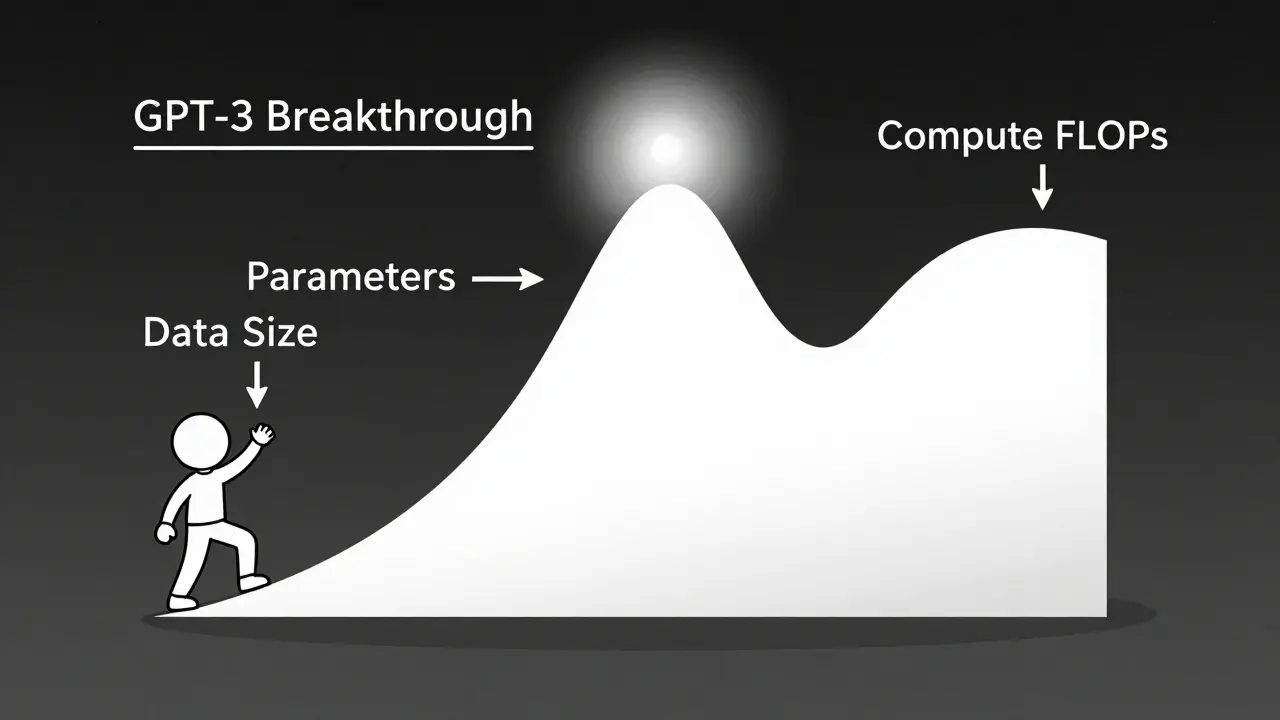

Tag: scaling laws

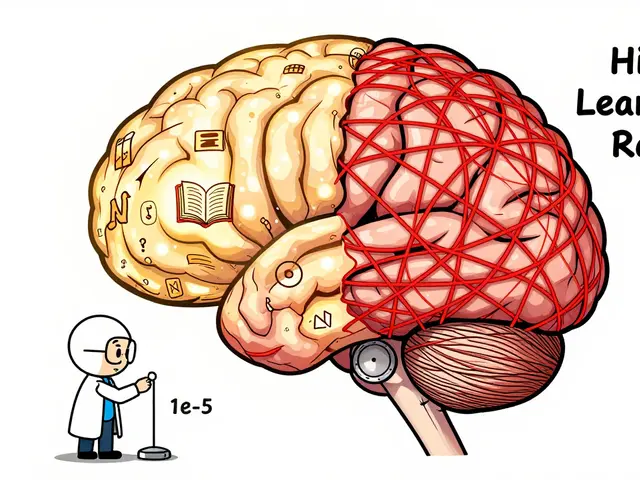

Scaling laws let you estimate how much performance improves when you increase model size, data, or compute. Learn how math, not just bigger models, drives AI breakthroughs, and why efficiency now beats raw scale.

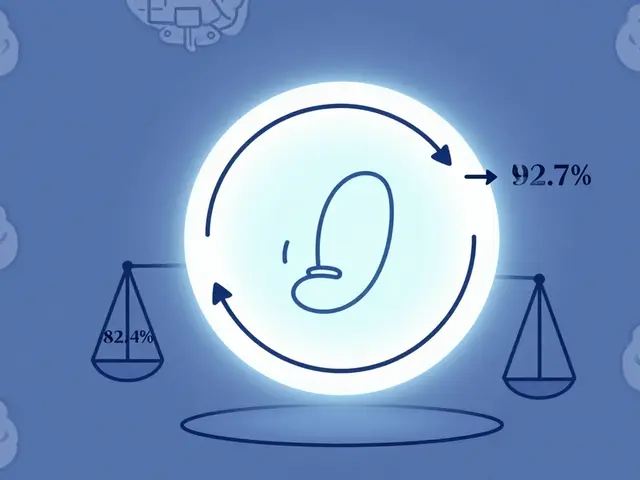

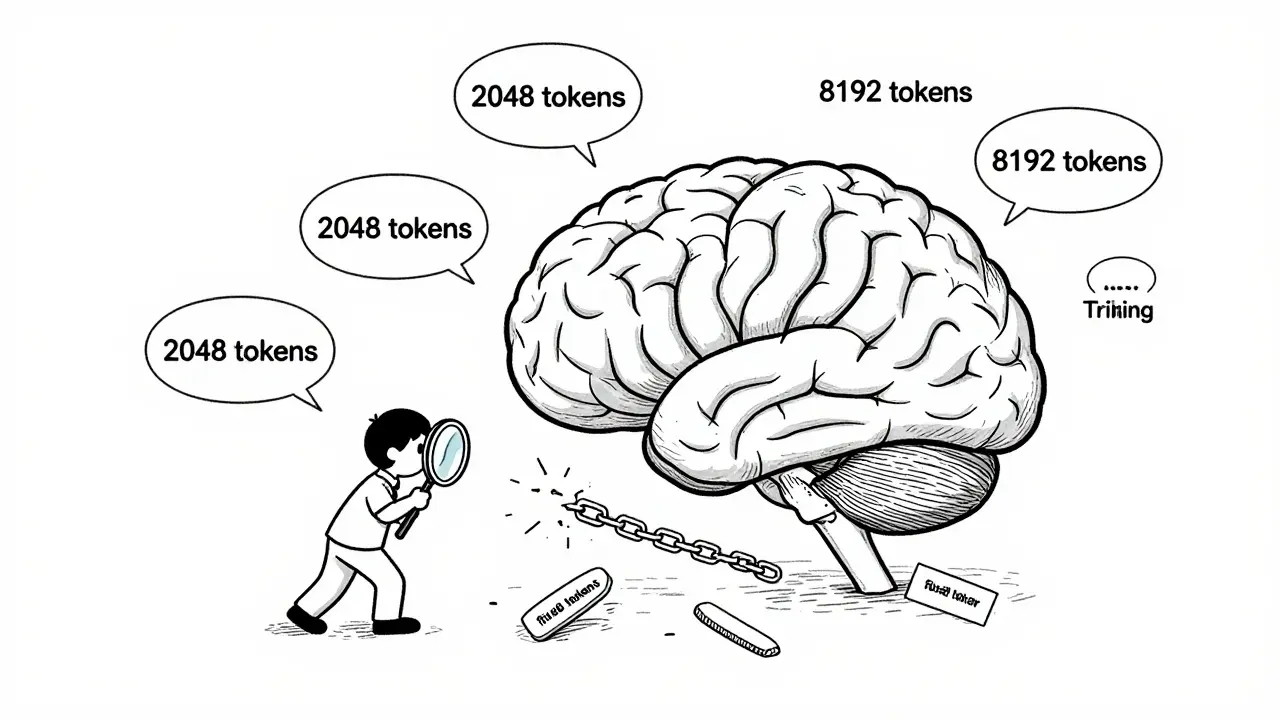

Training duration and token counts alone don't determine LLM generalization. How sequence lengths are structured during training matters more: variable-length curricula outperform fixed-length approaches, reduce costs, and unlock true reasoning ability.

Artificial Intelligence
