Archive: 2025/10

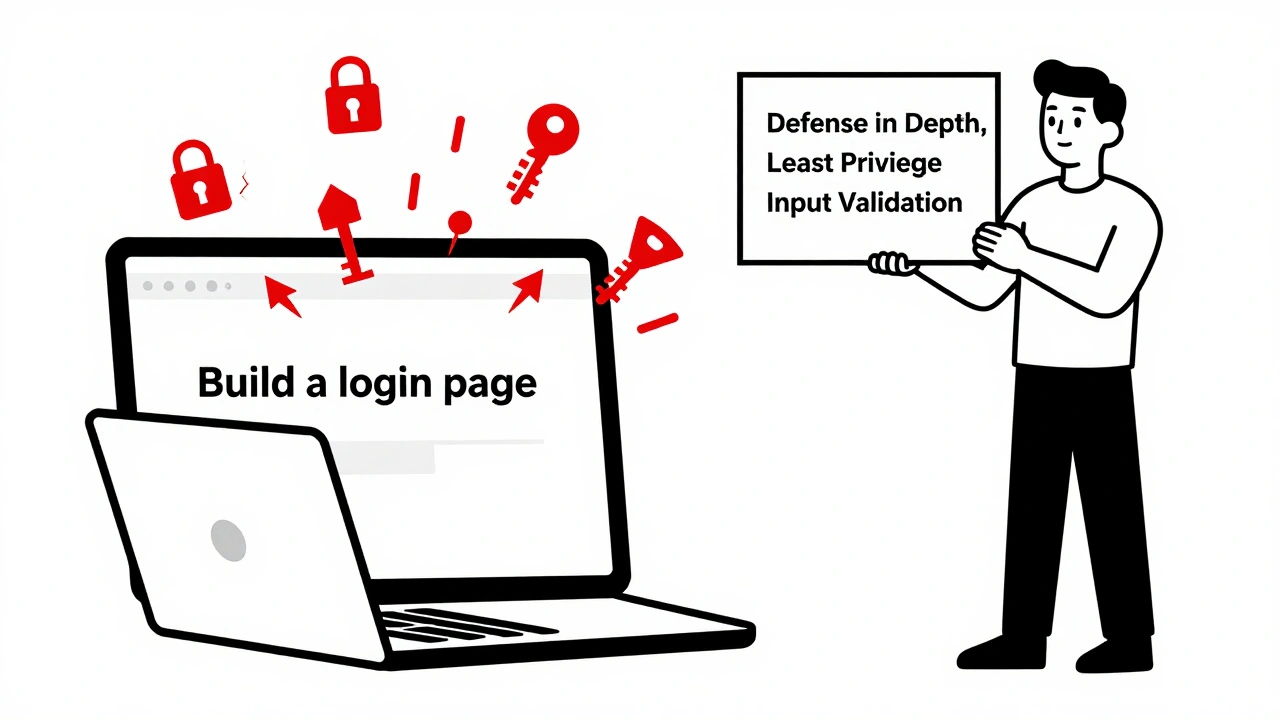

Vibe coding boosts development speed with AI-generated code, but introduces serious security and compliance risks. Learn how to use AI assistants like GitHub Copilot safely without sacrificing control or long-term maintainability.

Small changes in how you phrase a question can drastically alter an AI's response. Learn why prompt sensitivity makes LLMs unpredictable, how it breaks real applications, and proven ways to get consistent, reliable outputs.

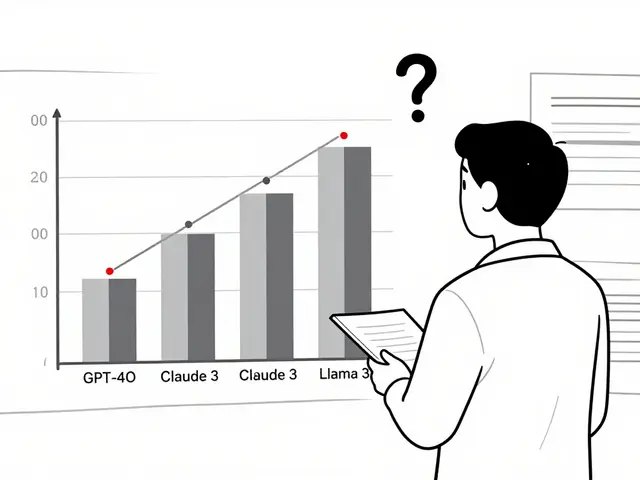

Domain-specialized LLMs like CodeLlama, Med-PaLM 2, and MathGLM outperform general AI in coding, medicine, and math. Learn how they work, their real-world accuracy, costs, and why they're replacing generic models in professional settings.

Learn how to use secure prompting to make AI-generated code safer. Discover proven templates, rules files, and techniques that reduce vulnerabilities by up to 68% in vibe coding workflows.

Recent posts

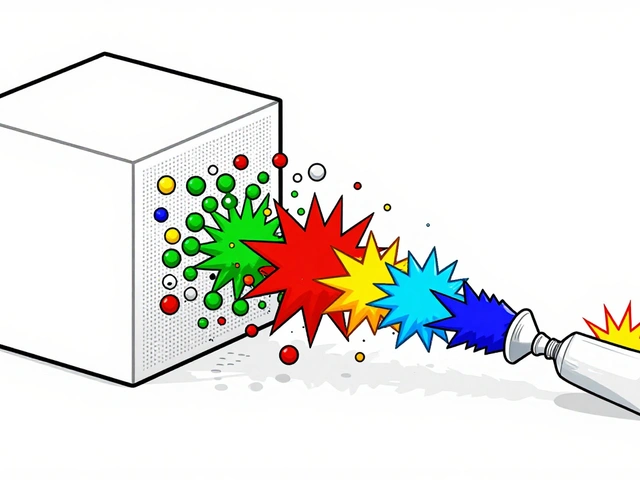

Calibration and Outlier Handling in Quantized LLMs: How to Keep Accuracy When Compressing Models

Jul 6, 2025

Artificial Intelligence
