Archive: 2026/03 - Page 3

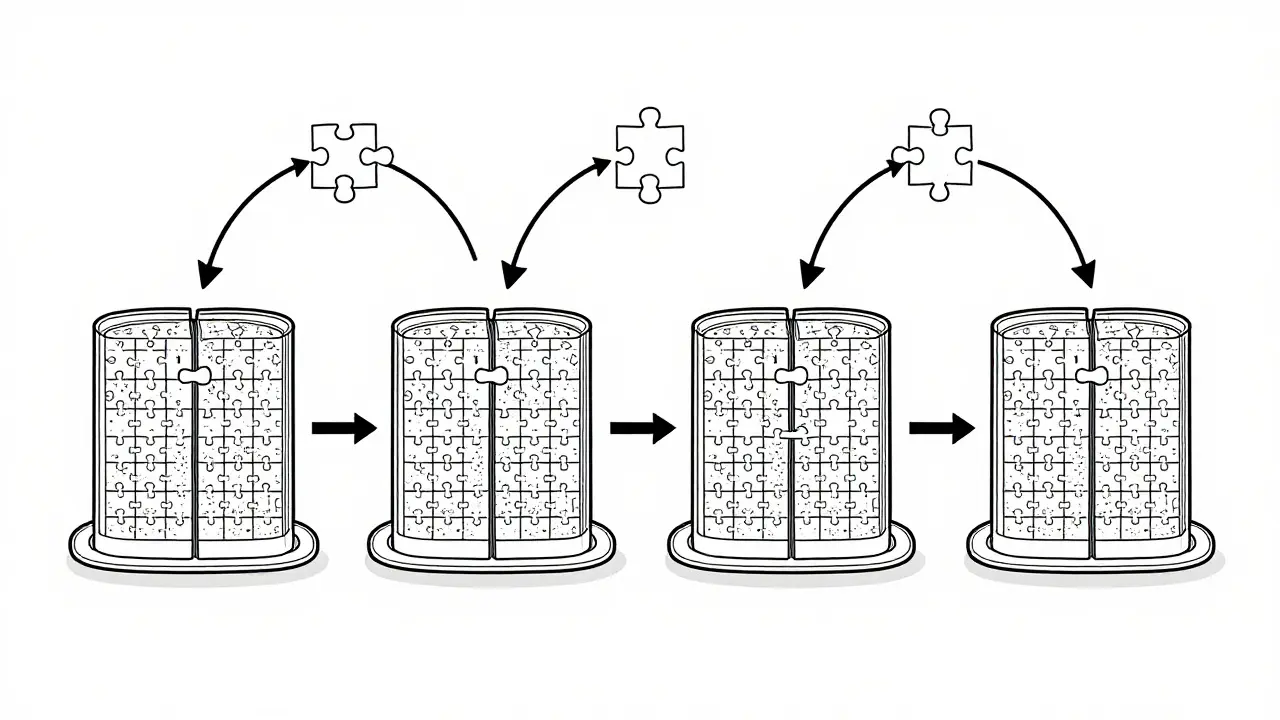

Tensor parallelism lets you run massive LLMs across multiple GPUs by splitting each layer's weight matrices across devices. Learn how it works, why NVLink matters, which frameworks support it, and how to avoid common deployment pitfalls.

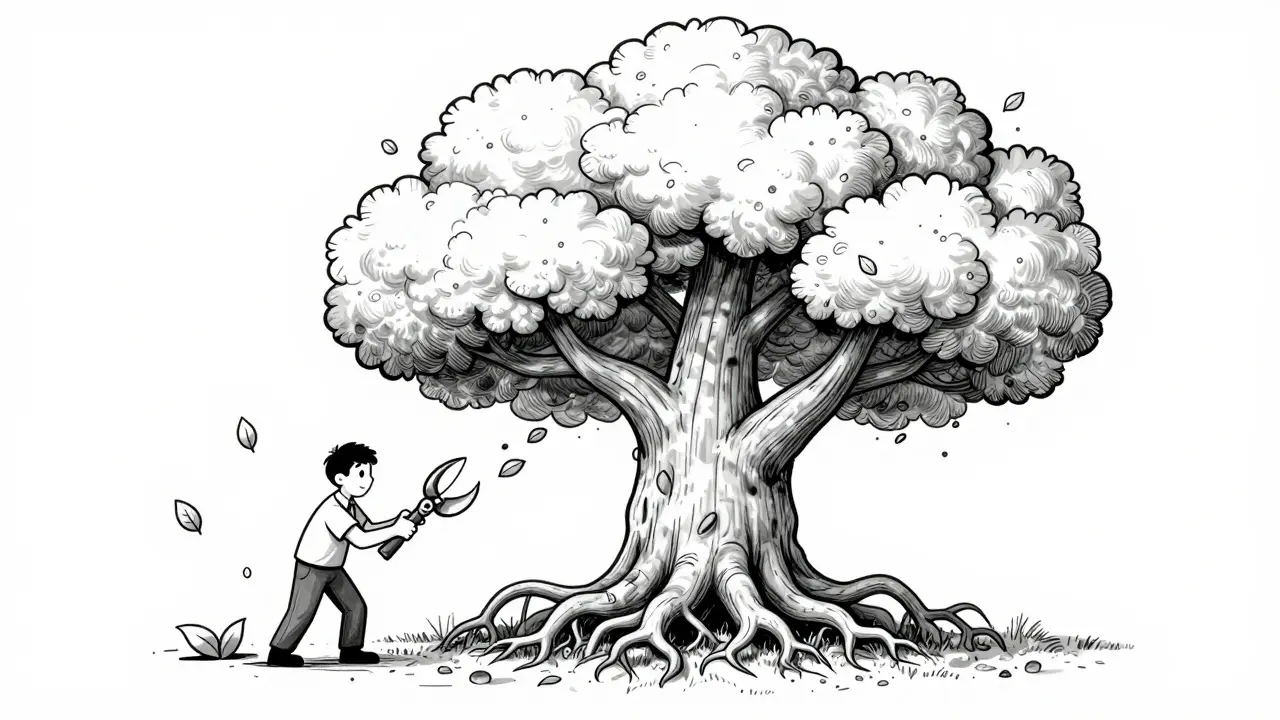

Combining pruning and quantization speeds up LLM inference by up to 6x while preserving accuracy. Learn how HWPQ's unified approach with FP8 and 2:4 sparsity delivers real-world speedups without hardware changes.

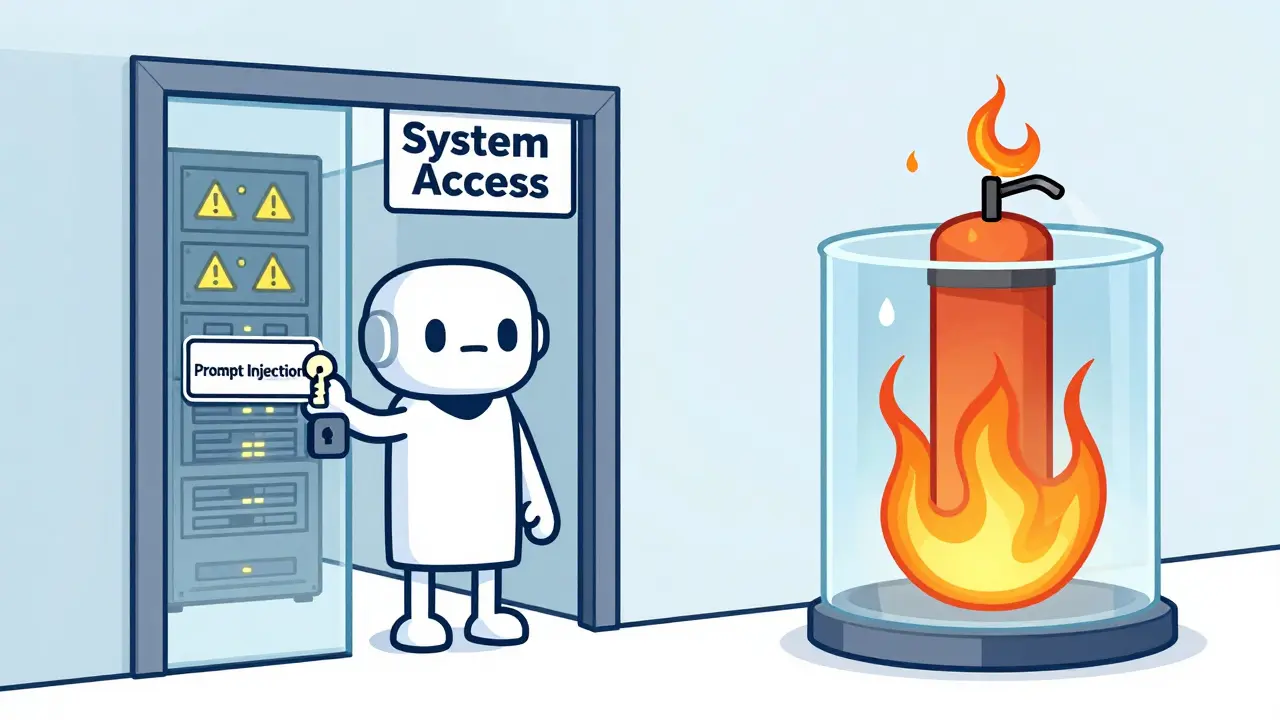

Sandboxing external actions in LLM agents prevents dangerous tool access by isolating processes. Firecracker, gVisor, and Nix offer different trade-offs between security and performance. Learn which method fits your use case.

Recent posts

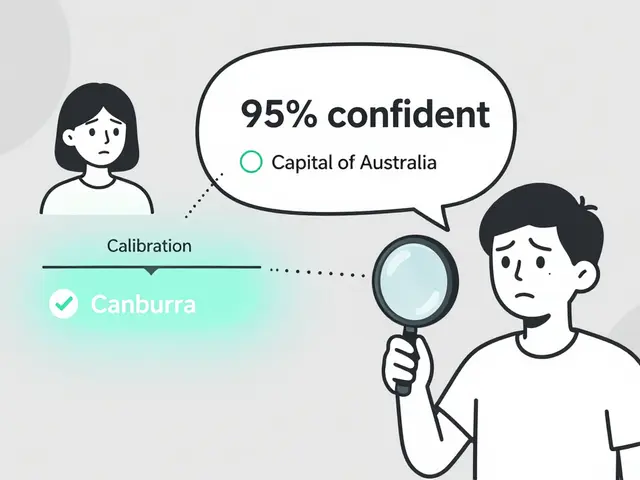

Token Probability Calibration in Large Language Models: How to Fix Overconfidence in AI Responses

Jan 16, 2026

Artificial Intelligence