Tag: QLoRA

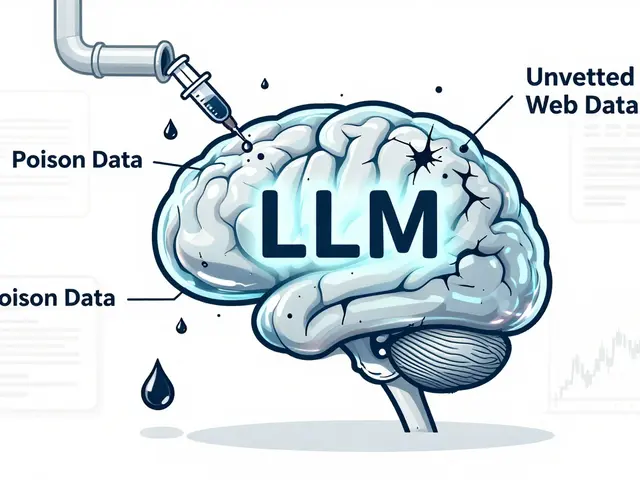

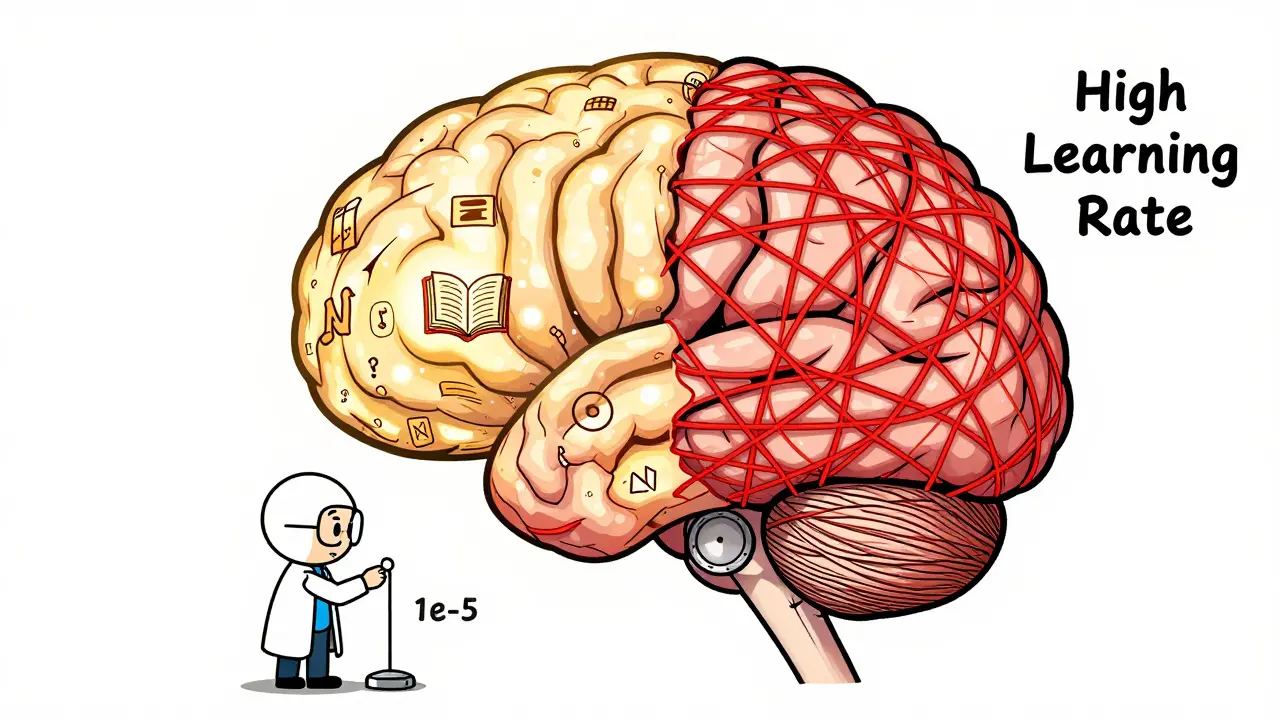

Learn how to fine-tune large language models without losing their original knowledge. Discover the best hyperparameters, methods like LoRA and FAPM, and real-world trade-offs that keep models accurate and reliable.

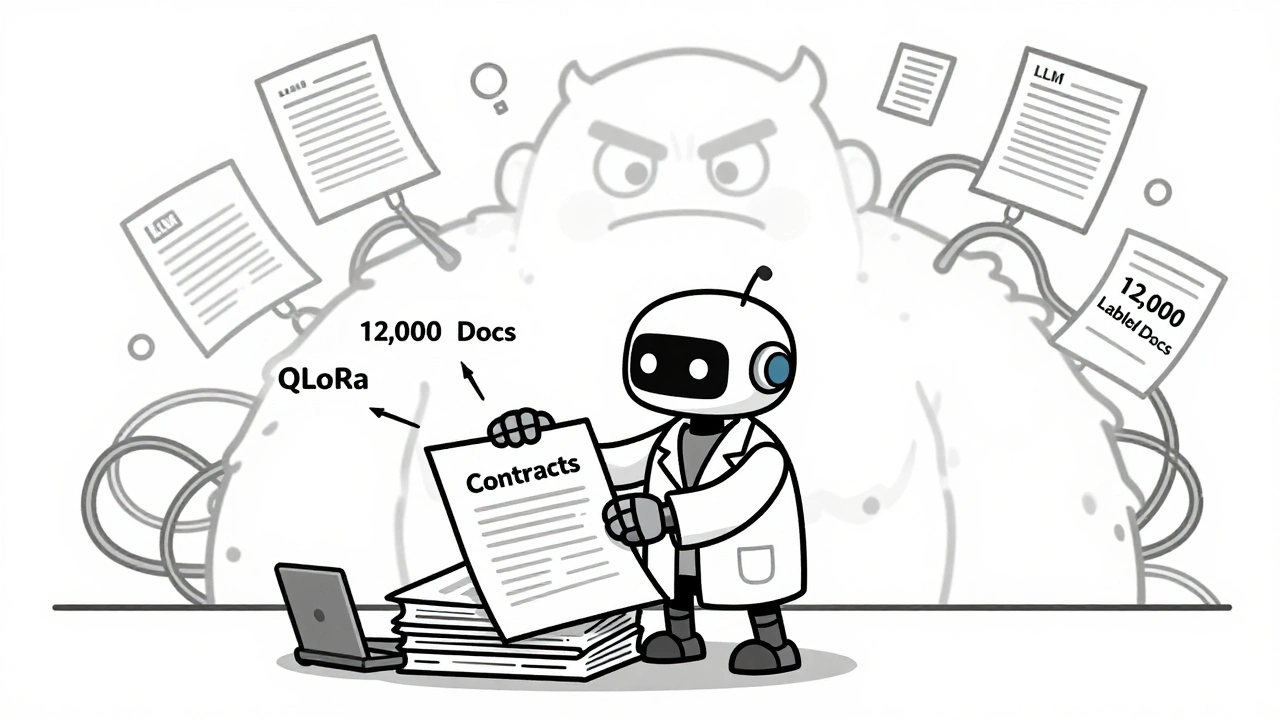

Few-shot fine-tuning lets you adapt large language models with as few as 50 examples, making AI usable in data-scarce fields like healthcare and law. Learn how LoRA and QLoRA make this possible, even on a single GPU.

Fine-tuned LLMs outperform general models in niche tasks like legal analysis, medical coding, and compliance. Learn how specialization beats scale, when to use QLoRA, and why hybrid RAG systems are the future.

