Archive: 2026/02 - Page 2

Few-shot fine-tuning lets you adapt large language models with as few as 50 examples, making AI usable in data-scarce fields like healthcare and law. Learn how LoRA and QLoRA make this possible, even on a single GPU.

Non-developers are building apps faster than ever using AI tools, but most don’t know how to secure them. Learn the three rules to avoid breaches, reduce risk, and ship safe vibe-coded apps without writing a line of code.

Prompt robustness ensures AI models handle typos, rephrasings, and messy inputs without crashing. Learn how MOF, RoP, and keyword choices make LLMs more reliable in real-world use.

This article explains how AI-generated dark patterns manipulate users, with real examples and regulations. Learn a 5-step ethical audit to prevent deceptive designs and protect digital trust.

Discover why traditional metrics fail for compressed LLMs and how modern protocols like LLM-KICK and the EleutherAI LM Evaluation Harness accurately assess performance. Learn key dimensions, implementation steps, and future trends in model compression evaluation.

Discover how rotary embeddings, ALiBi, and memory mechanisms enable AI models to handle up to 1 million tokens. Learn key differences, real-world impacts, and future trends in long-context AI.

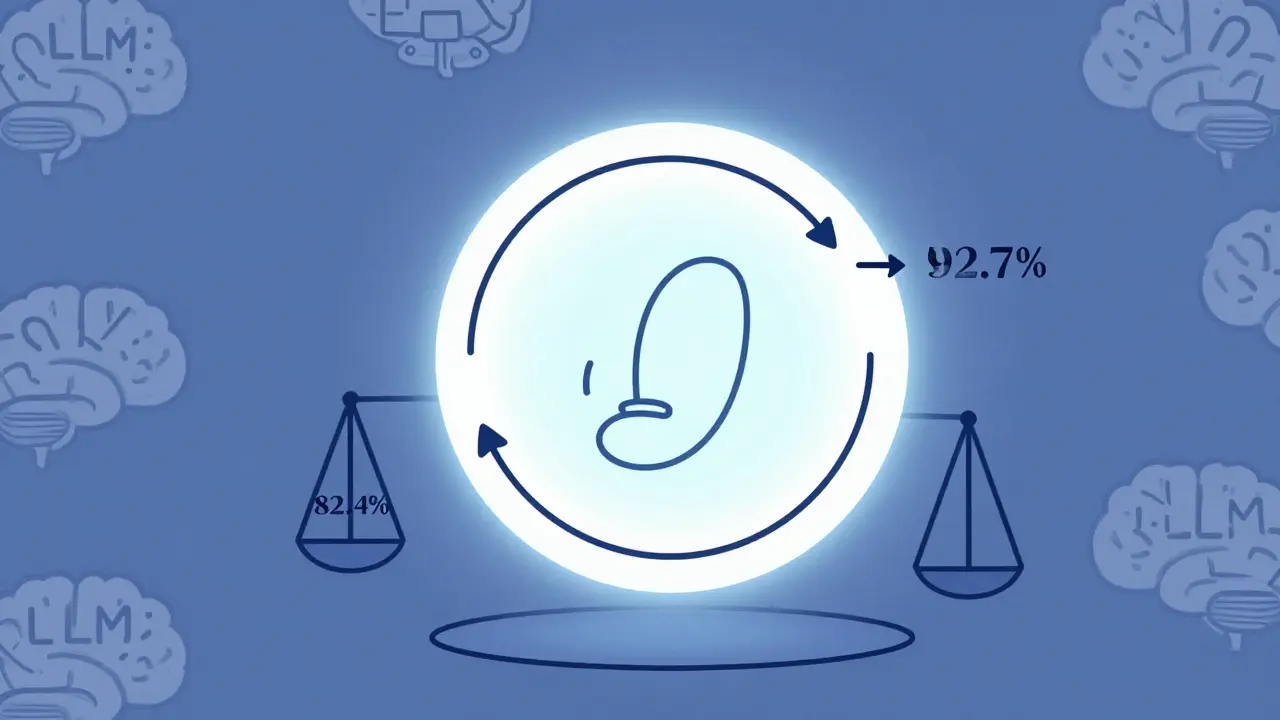

Reinforcement Learning from Prompts (RLfP) automates prompt optimization using feedback loops, boosting LLM accuracy by up to 10% on key benchmarks. Learn how PRewrite and PRL work, their real-world gains, hidden costs, and who should use them.

Generative AI can now describe images for alt text, helping make the web more accessible. But accuracy gaps, which especially affect people with disabilities, mean human review is still essential.

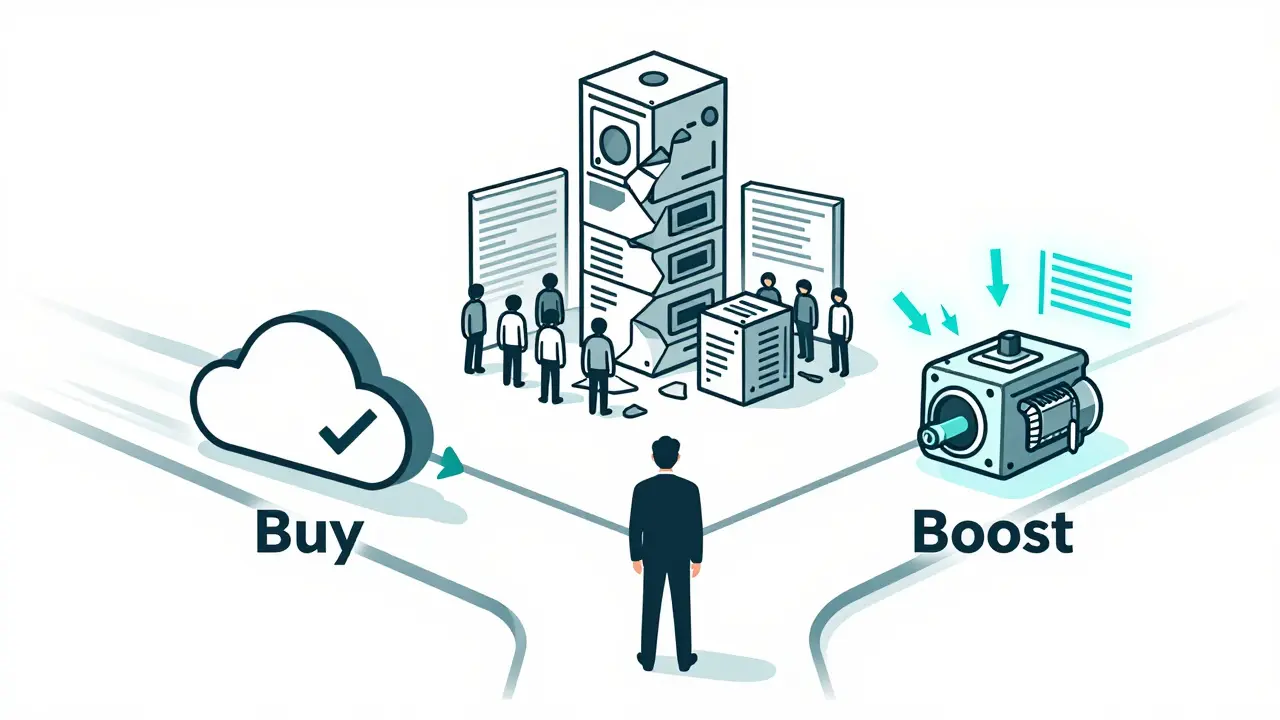

A practical guide for CIOs on choosing between building or buying generative AI platforms. Learn when to buy, boost, or build based on cost, risk, speed, and business impact, backed by 2024-2025 enterprise data.

Artificial Intelligence
