Author: Phillip Ramos - Page 10

Large language models are transforming localization by understanding context, tone, and culture, not just words. Learn how they outperform traditional translation tools and what it takes to use them safely and effectively.

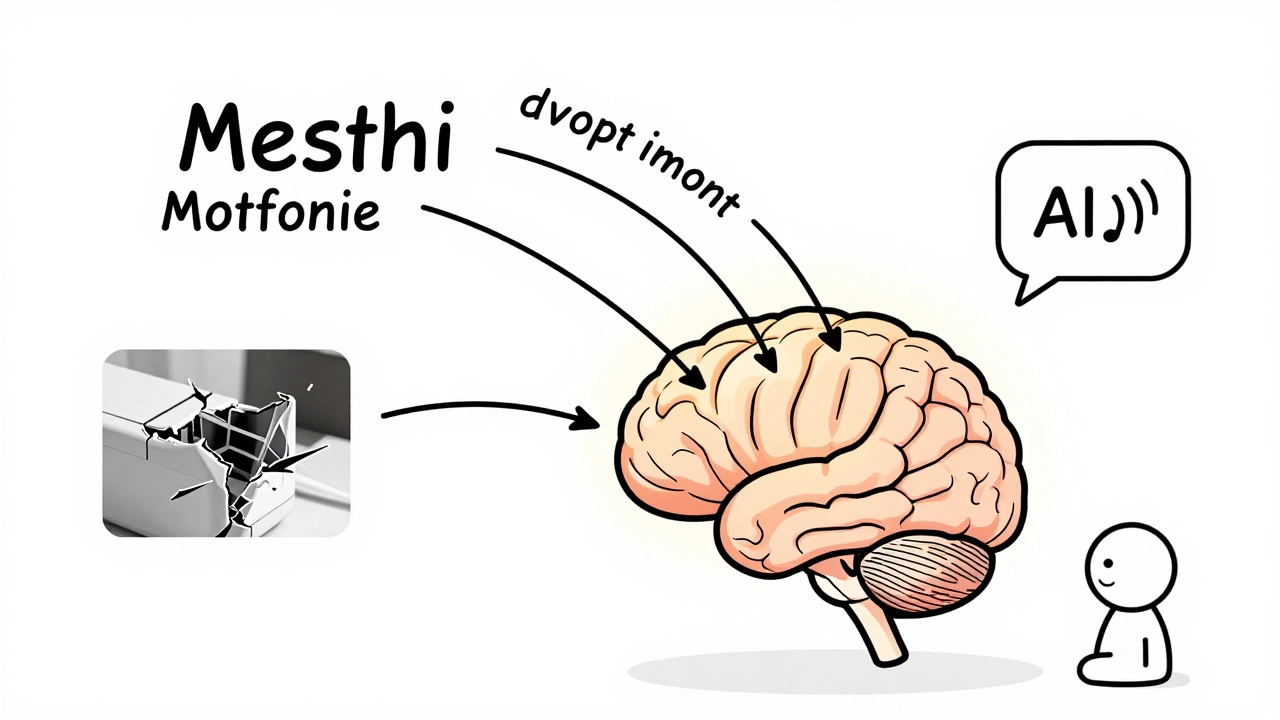

Multimodal AI processes text, images, audio, and video together, which can make it considerably more accurate than text-only systems. Learn how it's transforming healthcare, customer service, and retail with real-world results.

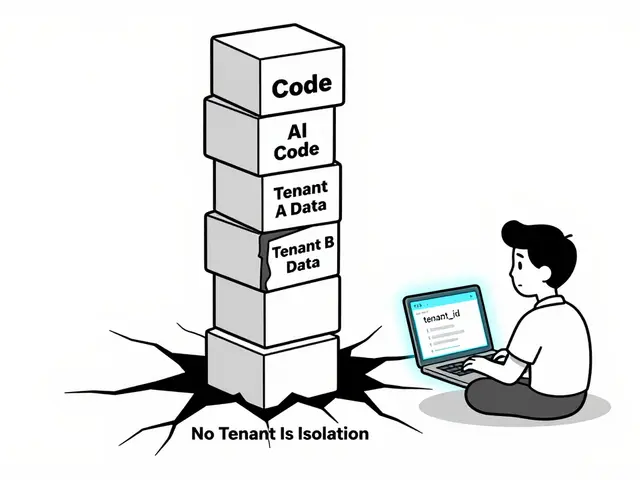

Vibe coding boosts development speed with AI-generated code, but introduces serious security and compliance risks. Learn how to use AI assistants like GitHub Copilot safely without sacrificing control or long-term maintainability.

Small changes in how you phrase a question can drastically alter an AI's response. Learn why prompt sensitivity makes LLMs unpredictable, how it breaks real applications, and proven ways to get consistent, reliable outputs.

Domain-specialized LLMs like CodeLlama, Med-PaLM 2, and MathGLM outperform general-purpose models in coding, medicine, and math. Learn how they work, how accurate they are in practice, what they cost, and why they're replacing generic models in professional settings.

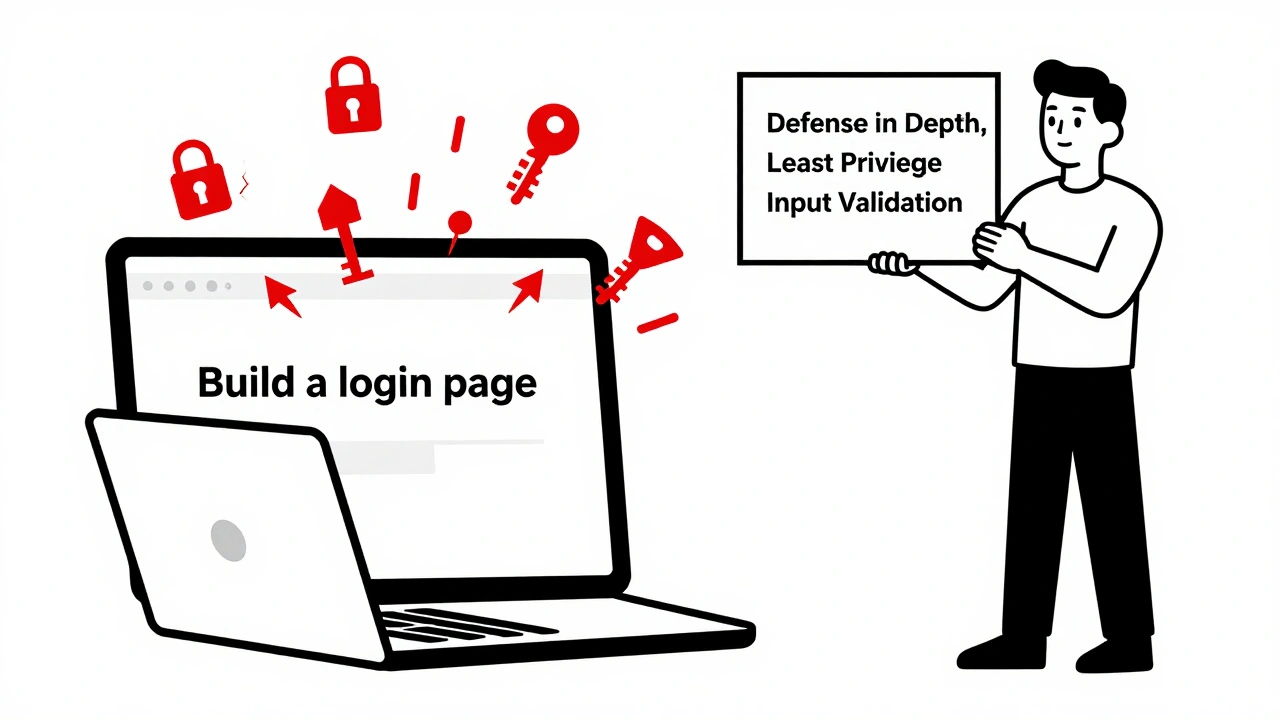

Learn how to use secure prompting to make AI-generated code safer. Discover proven templates, rules files, and techniques that reduce vulnerabilities by up to 68% in vibe coding workflows.

Learn what to allow, limit, and prohibit in AI-assisted vibe coding policies to prevent security breaches, ensure compliance, and keep your team productive in 2025.

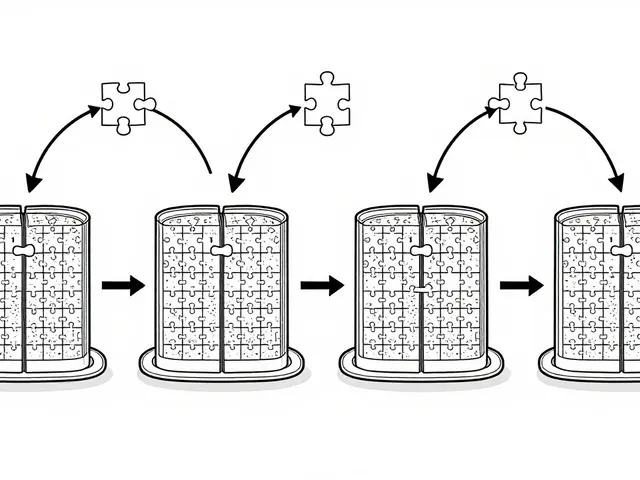

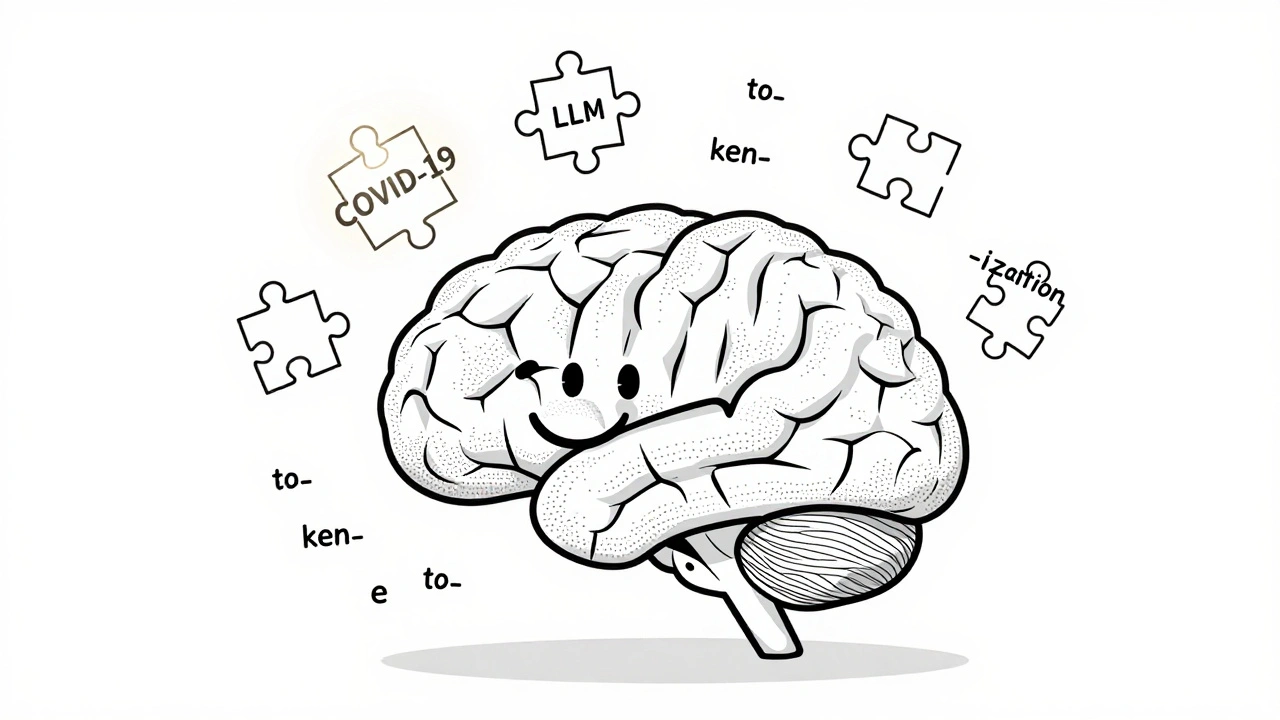

Despite the rise of massive language models, tokenization remains essential for accuracy, efficiency, and cost control. Learn why subword methods like BPE and SentencePiece still shape how LLMs understand language.

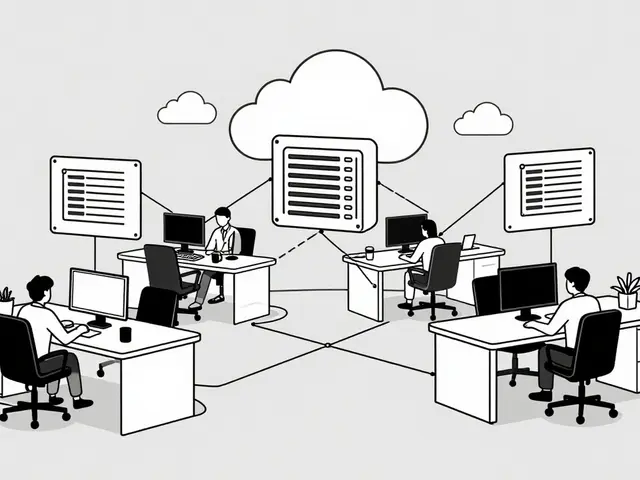

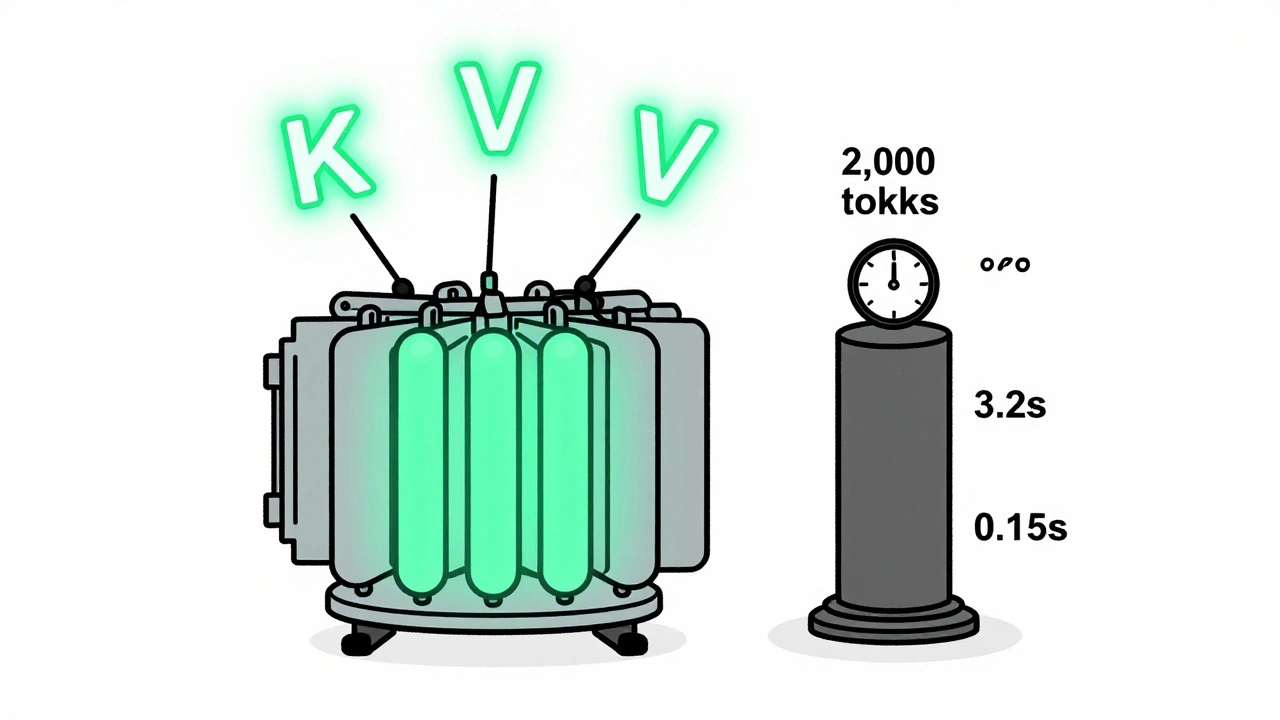

KV caching and continuous batching are essential for fast, affordable LLM serving. Learn how they avoid redundant computation, manage GPU memory, boost throughput, and make real-world deployment possible on consumer hardware.

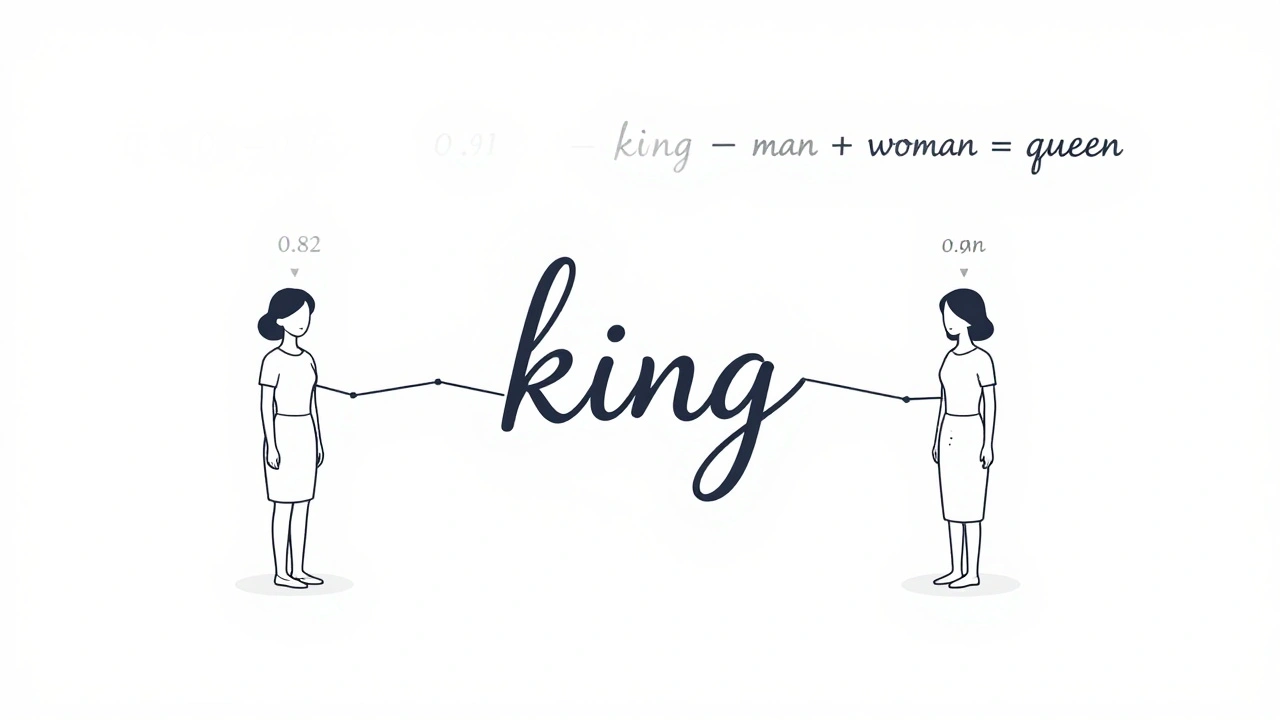

Learn how embeddings, attention, and feedforward networks form the core of modern large language models like GPT and Llama. No jargon, just clear explanations of how AI understands and generates human language.

Government agencies are now procuring AI coding tools with strict SLAs and compliance requirements. Learn how contracts for AI CaaS differ from commercial tools, what SLAs are mandatory, and how agencies are avoiding costly mistakes in 2025.

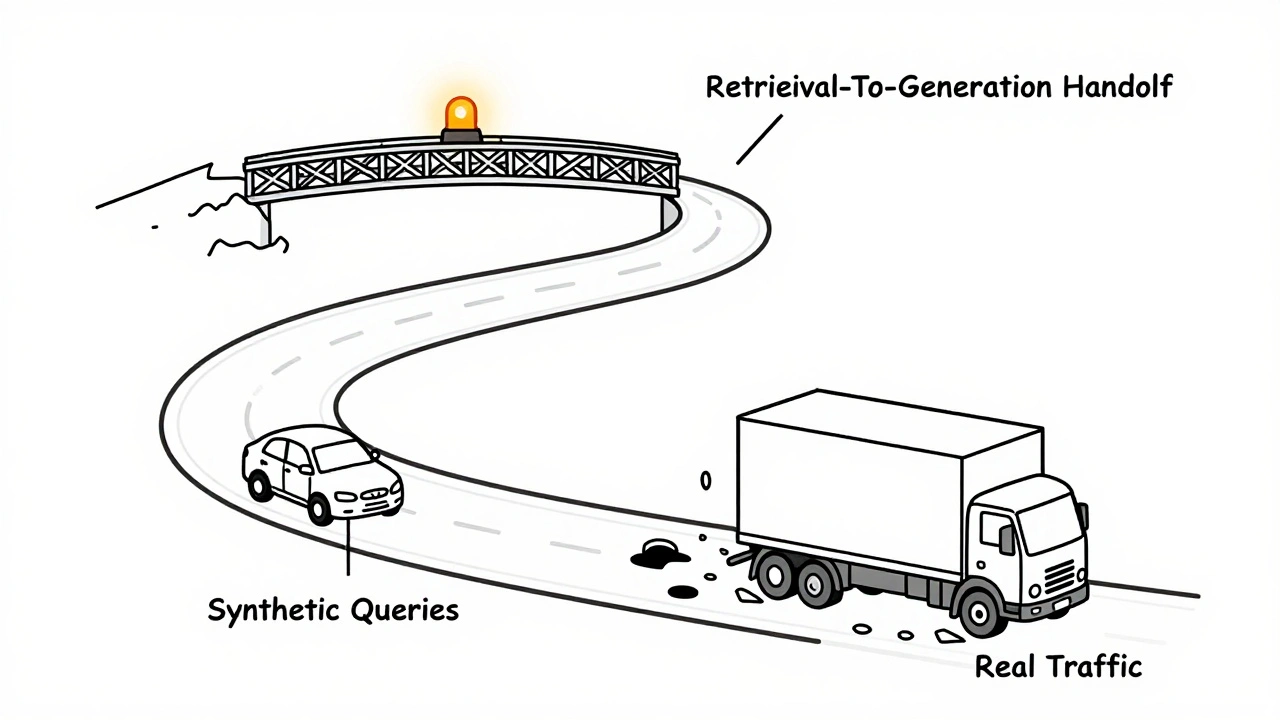

Testing RAG pipelines requires both synthetic queries and real traffic monitoring. Learn how to measure retrieval quality, generation quality, cost, and latency, and how to turn production failures into better tests.

Recent posts

Key Components of Large Language Models: Embeddings, Attention, and Feedforward Networks Explained

Sep 1, 2025

Artificial Intelligence