Author: Phillip Ramos - Page 4

Explore proven techniques to prevent catastrophic forgetting in LLM fine-tuning. We analyze LoRA, EWC, FIP, and hybrid methods to help you preserve model knowledge.

Explore how to secure data in AI-generated apps. Learn critical classification tiers, avoid PII leaks, and manage secrets safely in vibe coding workflows.

Explore real-world Generative AI uses in 2026, covering healthcare, finance, and manufacturing. Learn practical implementation strategies, cost risks, and ROI metrics.

Explore key vibe coding adoption metrics, tool comparisons, and 2025 industry statistics. Learn about GitHub Copilot, Cursor, and security risks shaping the future of software development.

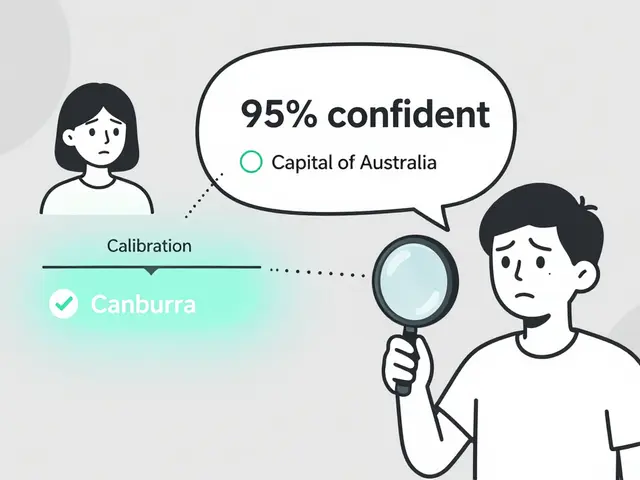

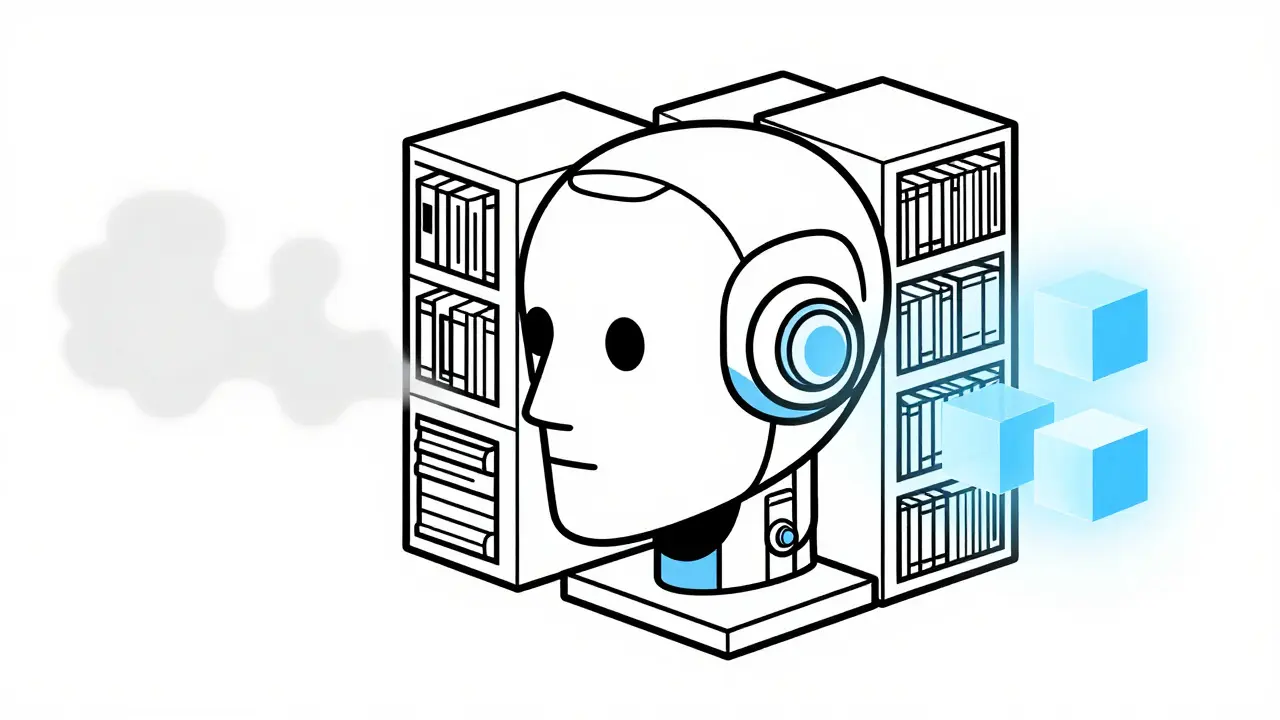

Learn how Retrieval-Augmented Generation (RAG) solves AI hallucinations by grounding responses in verified data. Covers technical architecture, cost comparisons with fine-tuning, and implementation best practices.

Explore why vibe coding prioritizes outcome validation over line-by-line comprehension. Learn how AI-generated code shifts developer roles from authors to directors.

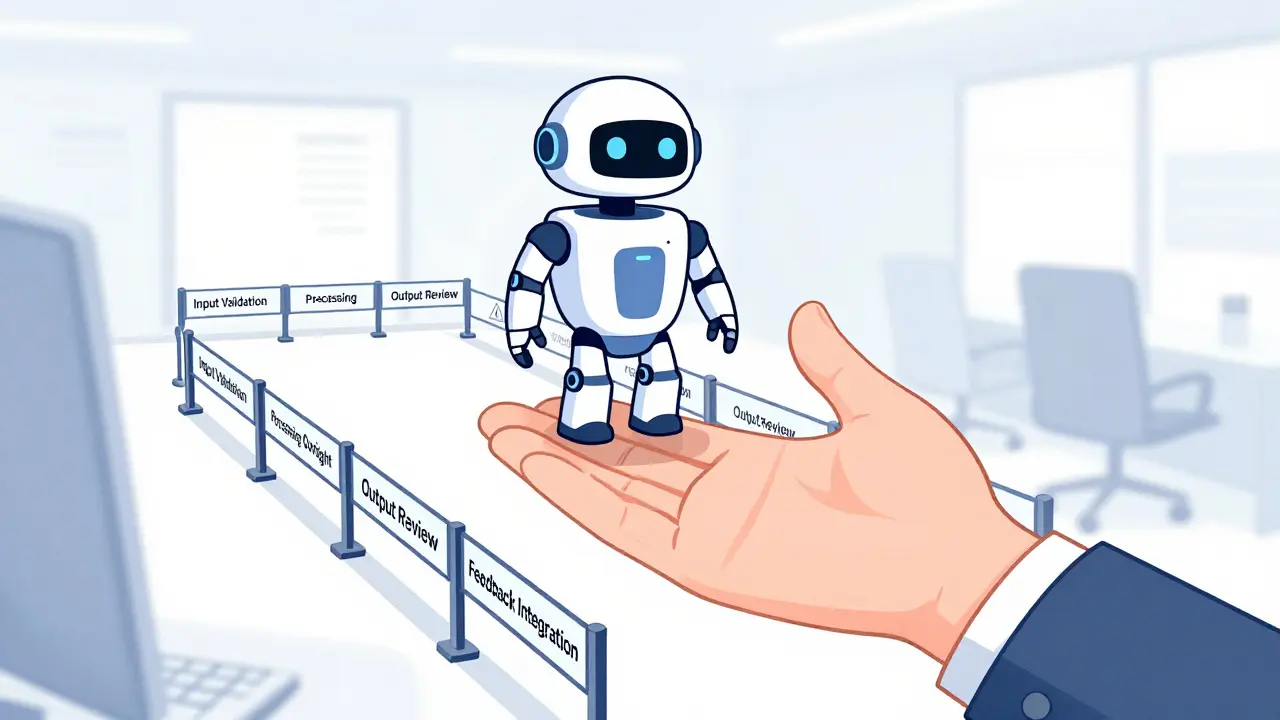

Implementing human-in-the-loop systems helps ensure safe generative AI deployment. Learn how to set up approval workflows, manage exceptions, and balance automation with quality control using proven strategies.

Explore the conflicting data on vibe coding adoption in 2026. Learn what questions to ask in developer sentiment surveys to uncover real productivity gains, security risks, and trust levels.

Human oversight in generative AI isn't about slowing things down - it's about preventing costly mistakes. Learn how structured review workflows and risk-based escalation policies keep AI accurate, ethical, and accountable.

Discover which project types benefit most from AI-generated code. From CRUD apps to API integrations, learn where vibe coding saves time - and where it falls short.

Generative AI ethics require more than rules - they demand transparency, stakeholder involvement, and real accountability. Learn how universities, researchers, and institutions are building ethical frameworks that actually work in 2026.

Tiered governance for vibe-coded apps matches control intensity to risk, letting teams build fast without sacrificing safety. It replaces rigid policies with smart, automated checks that scale with impact.

Artificial Intelligence
