Category: Artificial Intelligence - Page 4

Generative AI can now describe images for alt text, helping make the web more accessible. But accuracy gaps, which especially affect people with disabilities, mean human review is still essential.

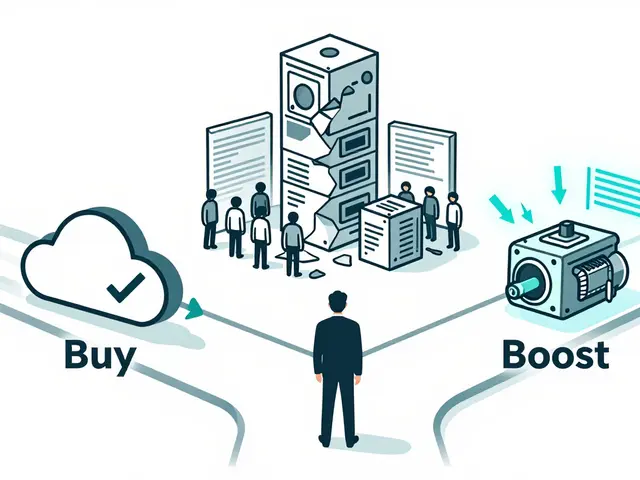

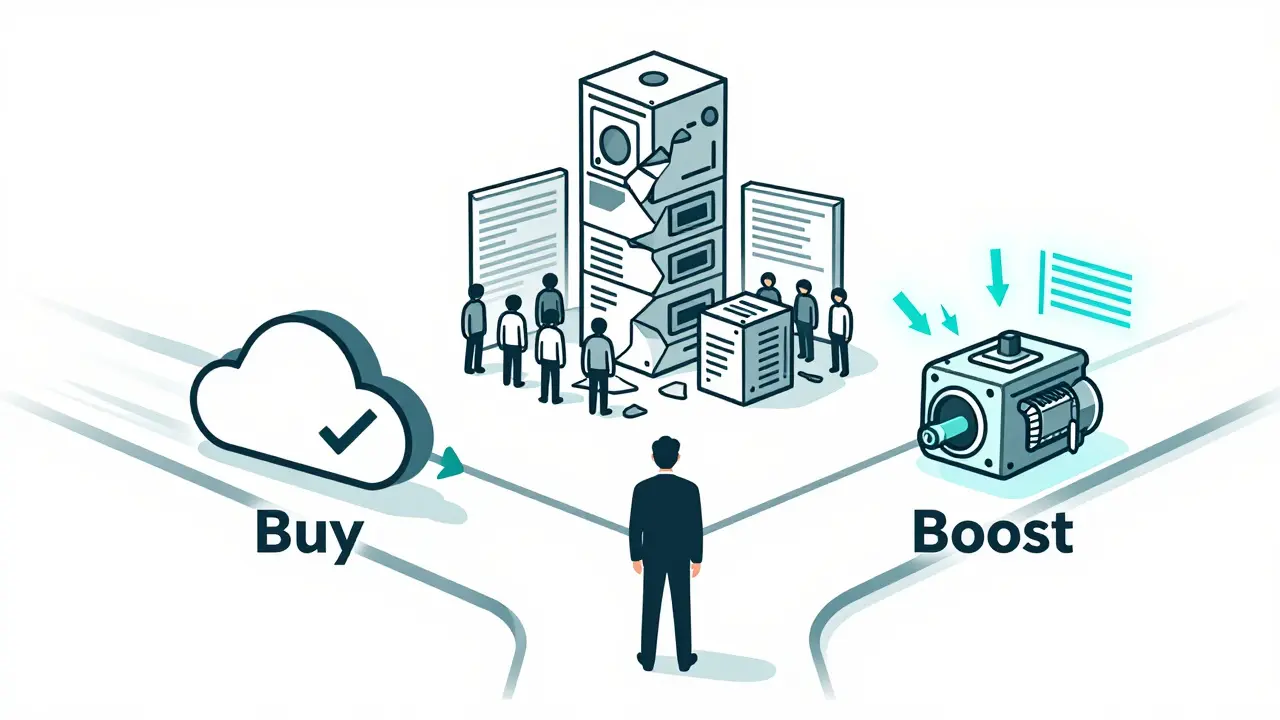

A practical guide for CIOs on choosing between building and buying generative AI platforms. Learn when to buy, boost, or build based on cost, risk, speed, and business impact, backed by 2024-2025 enterprise data.

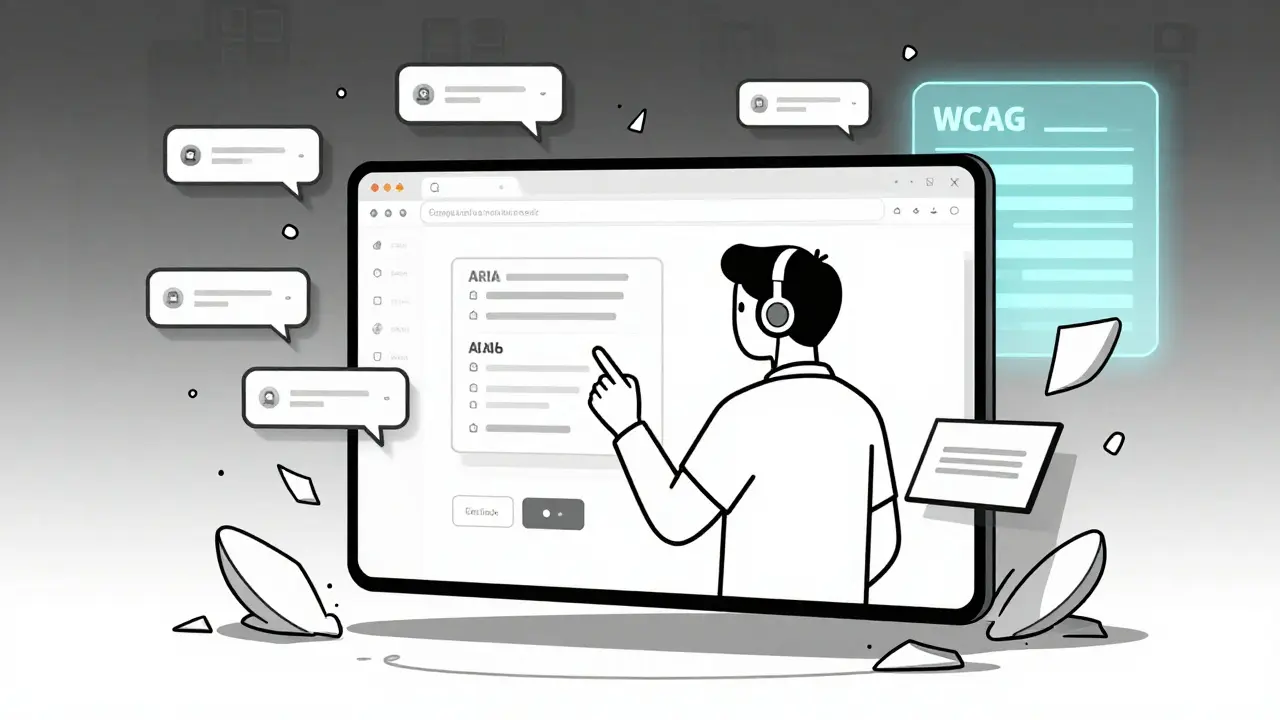

AI-generated interfaces are breaking web accessibility standards at scale. WCAG wasn’t built for dynamic AI content, and real users are paying the price. Here’s why compliance is failing, and what actually works.

LLMs memorize personal data, making traditional privacy methods useless. Learn the seven core principles and practical controls, such as differential privacy and PII detection, that actually protect user data in 2026.

Modern generative AI isn't powered by bigger models anymore; it's built on smarter architectures. Discover how MoE, verifiable reasoning, and hybrid systems are making AI faster, cheaper, and more reliable in 2025.

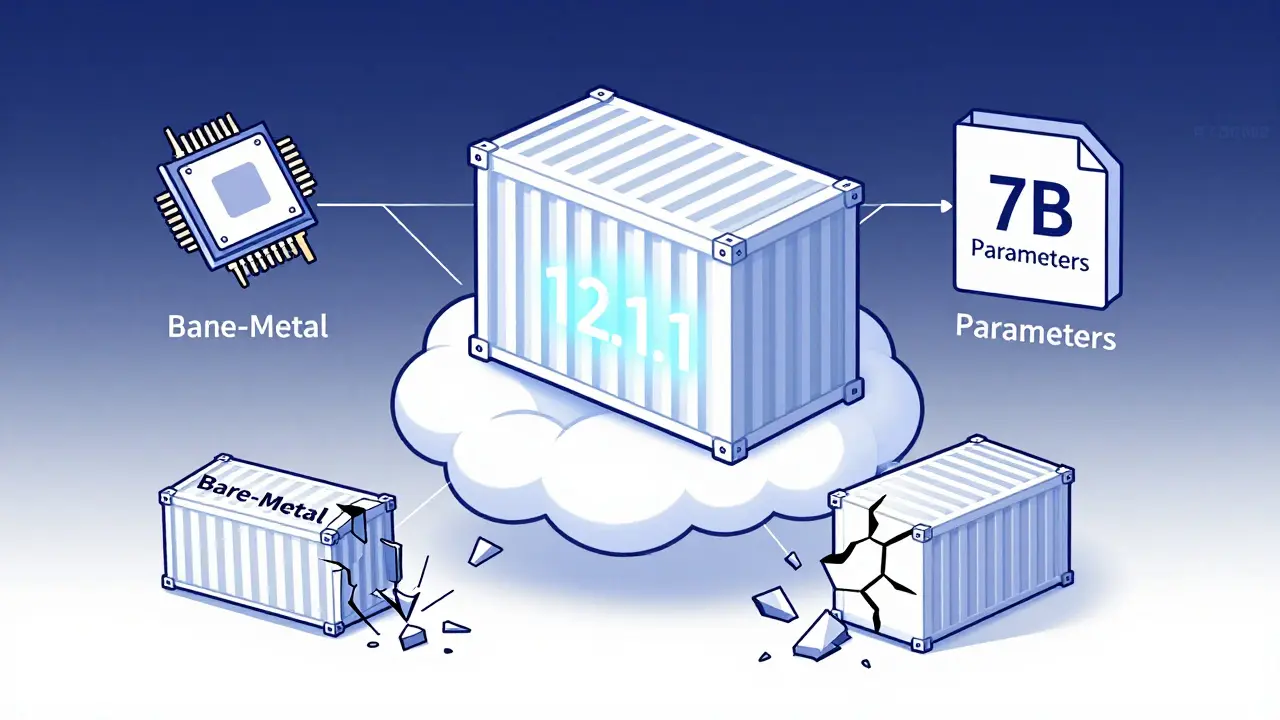

Containerizing large language models requires precise CUDA version matching, optimized Docker images, and secure model formats like .safetensors. Learn how to reduce startup time, shrink image size, and avoid the most common deployment failures.

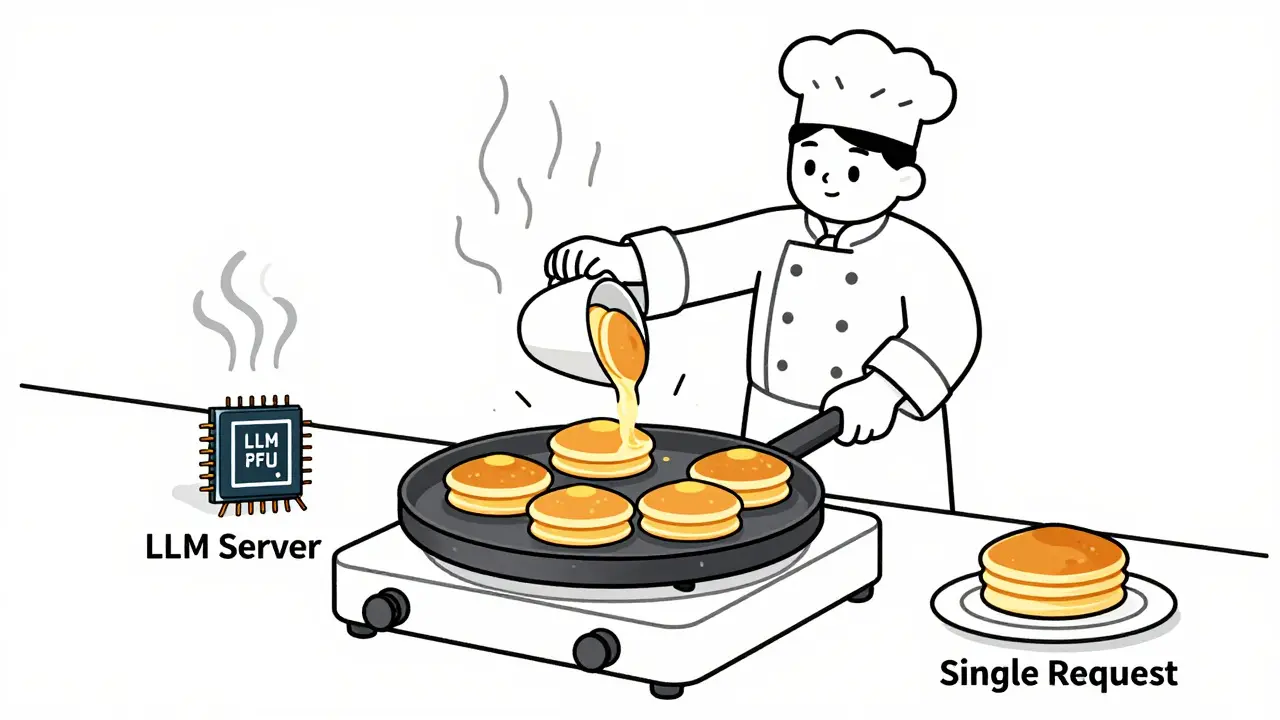

Learn how to choose optimal batch sizes for LLM serving to cut cost per token by up to 87%. Discover real-world results, batching types, hardware trade-offs, and proven techniques to reduce AI infrastructure costs.

Vibe coding lets fintech teams build tools with natural language prompts instead of code. Learn how mock data, compliance guardrails, and AI-powered workflows are changing how financial apps are developed, without sacrificing security or regulatory compliance.

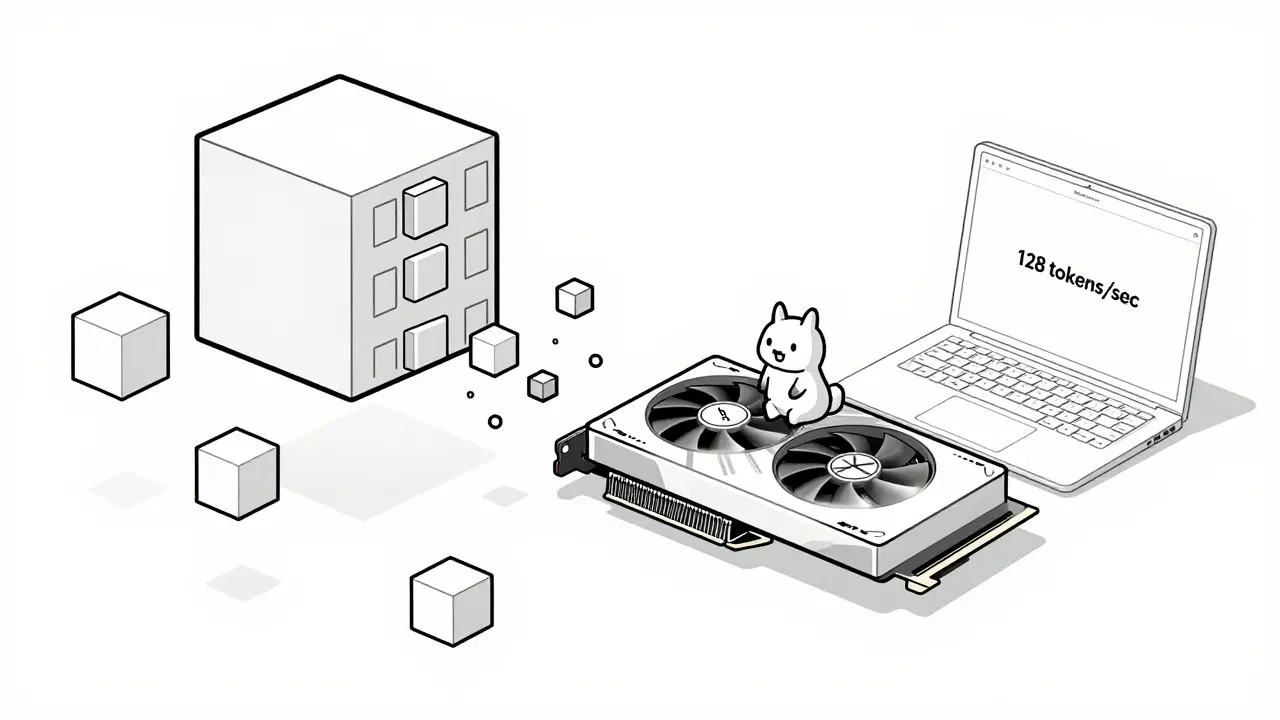

Learn how hardware-friendly LLM compression lets you run powerful AI models on consumer GPUs and CPUs. Discover quantization, sparsity, and real-world performance gains without needing a data center.

NLP pipelines and end-to-end LLMs aren't competitors; they're complementary. Learn when to use each for speed, cost, accuracy, and compliance in real-world AI systems.

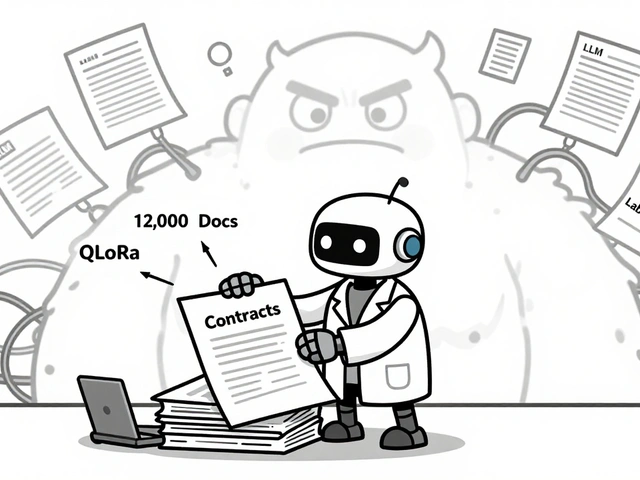

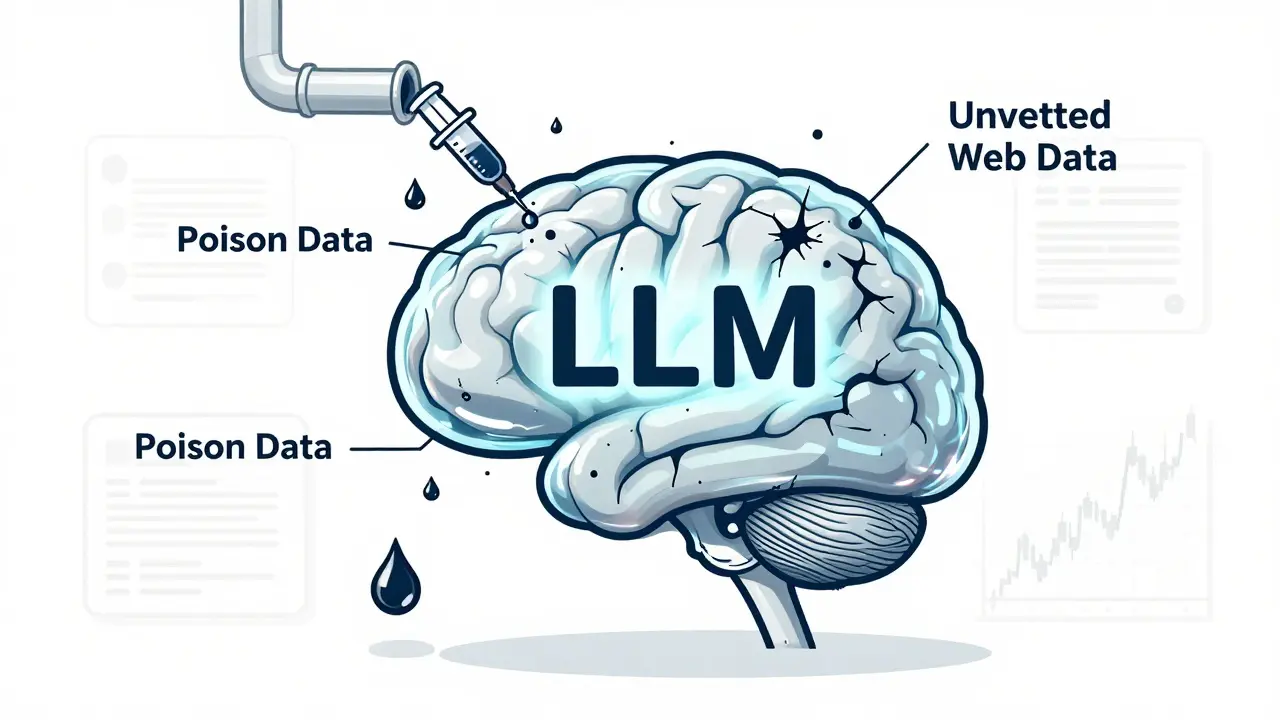

Training data poisoning lets attackers corrupt AI models with tiny amounts of fake data, leading to hidden backdoors and dangerous outputs. Learn how it works, real-world cases, and proven defenses to protect your LLMs.

Error-forward debugging lets you feed stack traces directly to LLMs to get instant, accurate fixes for code errors. Learn how it works, why it's faster than traditional methods, and how to use it safely today.
