Category: Artificial Intelligence - Page 4

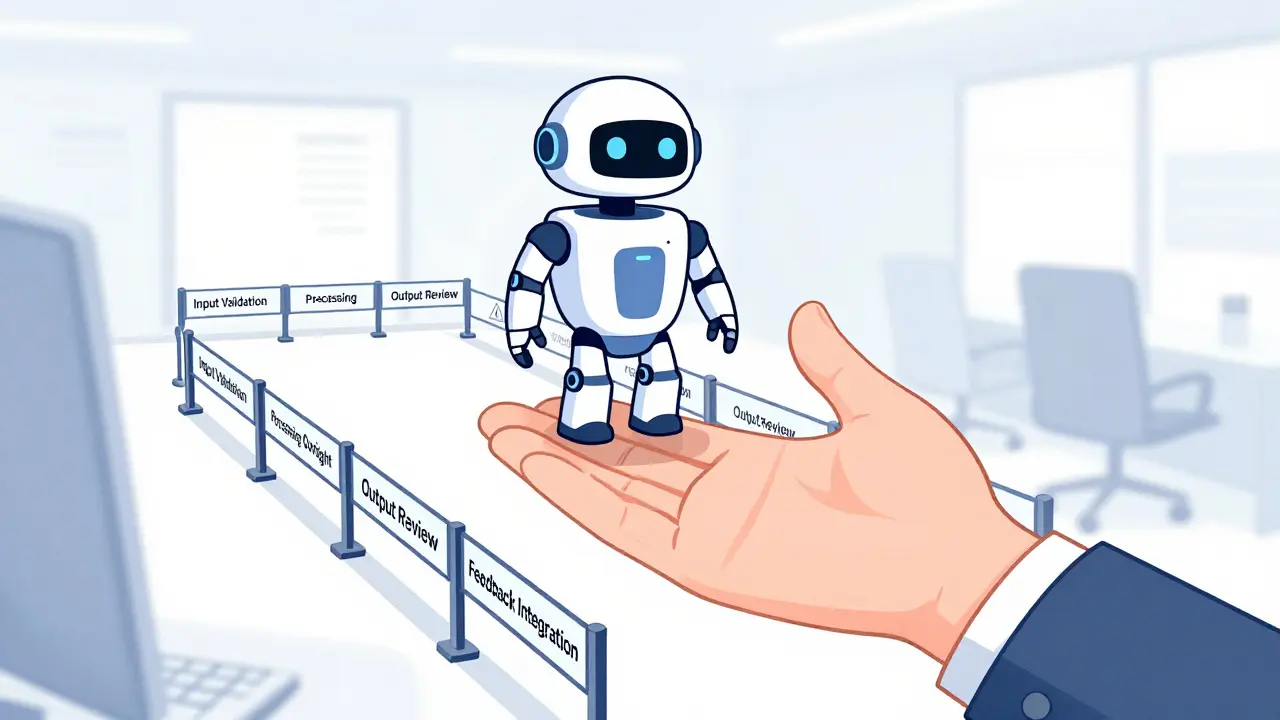

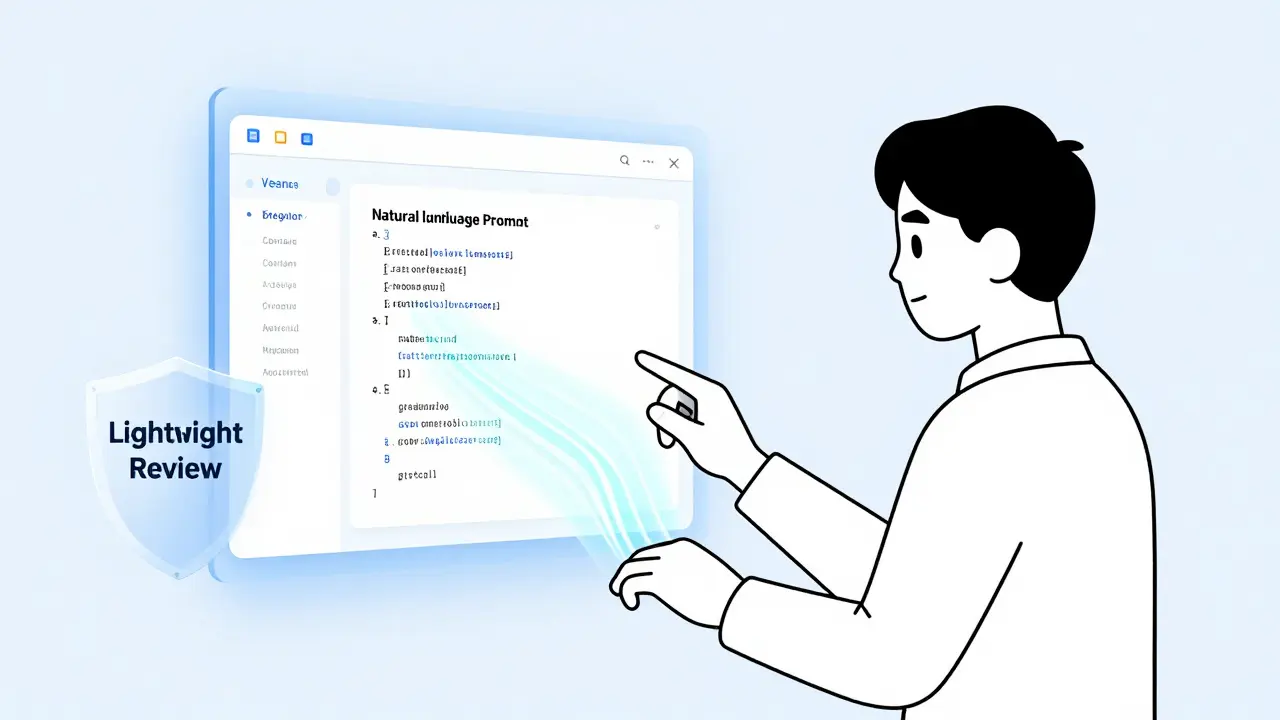

Implementing human-in-the-loop systems ensures safe generative AI deployment. Learn how to set approval workflows, manage exceptions, and balance automation with quality control using proven strategies.

Explore the conflicting data on vibe coding adoption in 2026. Learn what questions to ask in developer sentiment surveys to uncover real productivity gains, security risks, and trust levels.

Human oversight in generative AI isn't about slowing things down; it's about preventing costly mistakes. Learn how structured review workflows and risk-based escalation policies keep AI accurate, ethical, and accountable.

Generative AI ethics require more than rules - they demand transparency, stakeholder involvement, and real accountability. Learn how universities, researchers, and institutions are building ethical frameworks that actually work in 2026.

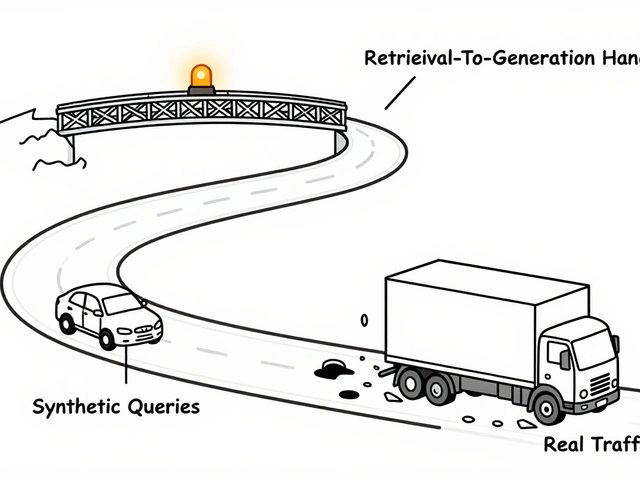

Tiered governance for vibe-coded apps matches control intensity to risk, letting teams build fast without sacrificing safety. It replaces rigid policies with smart, automated checks that scale with impact.

Vibe coding tools today generate code fast but fail at system design, governance, and testing. The next wave must fix these gaps, or stay stuck as glorified snippet generators.

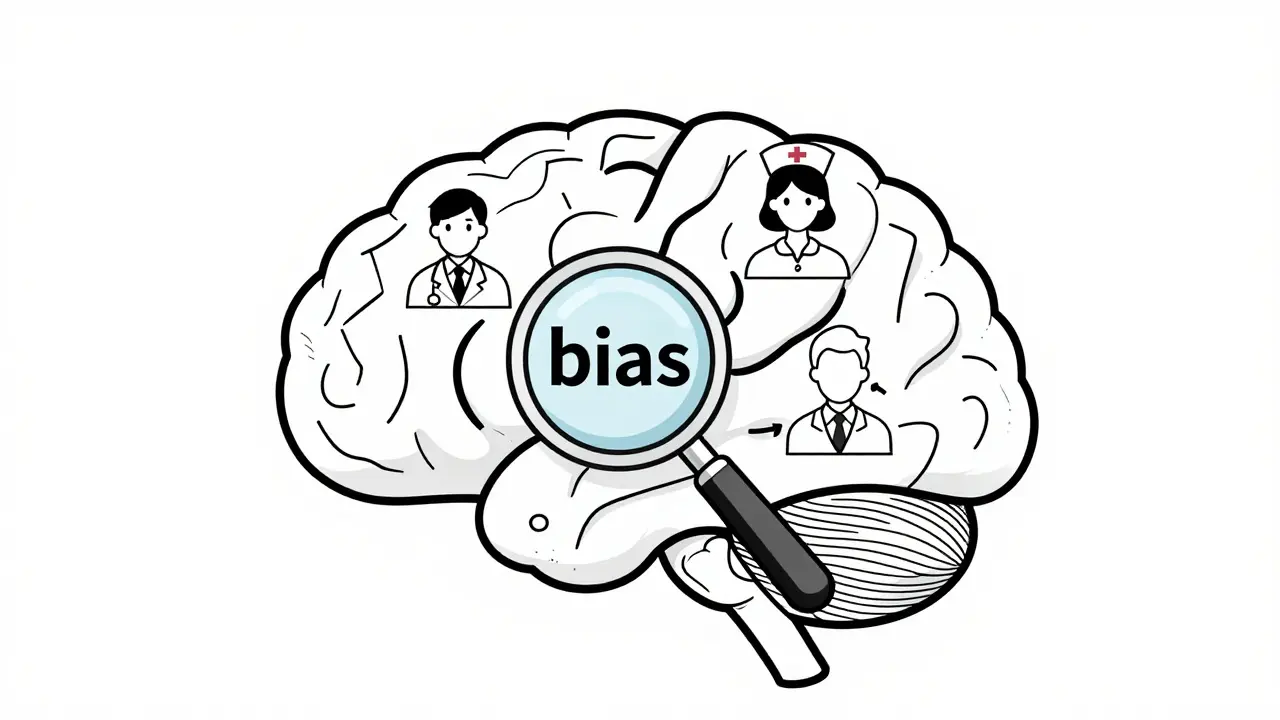

Large language models exhibit hidden biases from training data, human feedback, and internal architecture. New research reveals pro-AI bias, AI-AI bias, and methods to detect and fix them before they cause real harm.

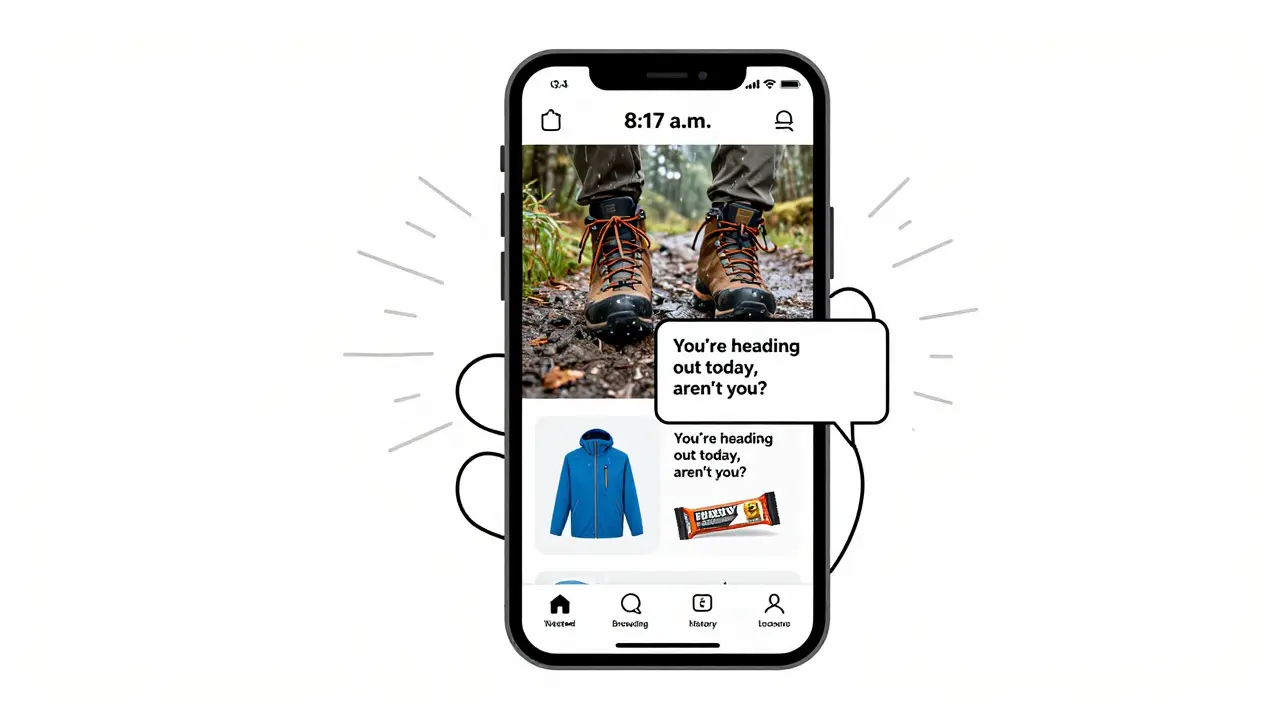

Generative AI now personalizes customer journeys in real time, using over 500 data points to deliver tailored content that boosts satisfaction and revenue. Learn how it works, what it delivers, and how to avoid common pitfalls.

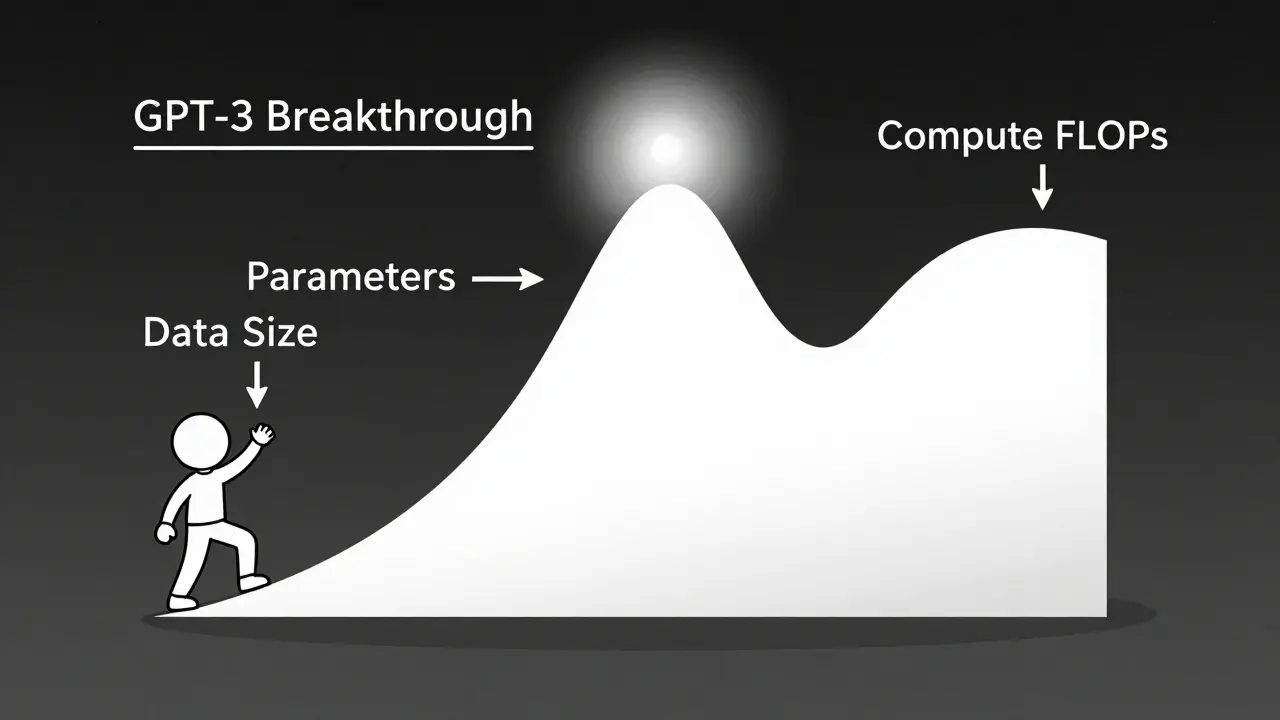

Scaling laws let you predict how much performance improves when you increase model size, data, or compute. Learn how math, not just bigger models, drives AI breakthroughs, and why efficiency now beats raw scale.

Large Language Models are transforming contact centers by understanding customer sentiment and intent with unprecedented accuracy. From auto-generating summaries to predicting churn, LLMs turn raw calls into actionable insights that improve both customer experience and agent efficiency.

Stop sequences let you control how long AI-generated text gets, prevent hallucinations, cut costs, and ensure clean outputs. They're not optional - they're essential for any real-world LLM application.
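As a rough illustration of the idea, the sketch below applies stop sequences on the client side by cutting generated text at the earliest marker. Hosted LLM APIs typically accept a stop parameter and do this during generation instead; the function name `truncate_at_stop` and the sample strings are hypothetical, chosen only for this example.

```python
def truncate_at_stop(text: str, stop_sequences: list[str]) -> str:
    """Cut text at the earliest occurrence of any stop sequence.

    Hypothetical helper: it mimics, after the fact, what a stop
    parameter does while the model is still generating.
    """
    cut = len(text)
    for stop in stop_sequences:
        if not stop:
            continue  # an empty string would match at index 0
        idx = text.find(stop)
        if idx != -1:
            cut = min(cut, idx)  # keep the earliest match
    return text[:cut]


# Illustrative input: the model answered, then began inventing a new question.
raw = "Answer: 42\nQuestion: what comes next?"
print(truncate_at_stop(raw, ["\nQuestion:", "###"]))
```

Trimming after the fact keeps the output clean, but it does not save tokens. The cost benefit only appears when the stop sequence is passed to the model so that generation actually halts.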

AI-generated UIs can speed up design, but without a design system, they create inconsistency. Learn how design tokens, governance, and human oversight keep components uniform across AI tools in 2026.
